How to Increase Daily Active Users (DAU) in Mobile Apps

Daily active users (DAU) is the single hardest mobile app metric to move, and the one most tightly correlated with long-term success. If your DAU is trending up at a steady rate, almost everything else (revenue, retention, word of mouth) usually follows. If it's flat, no amount of acquisition spend will save the unit economics.

I've worked with dozens of mobile product teams trying to raise DAU, and the ones that succeed do three things the others don't. They measure DAU against the right benchmark for their category. They invest in first-session activation before they invest in re-engagement. And they watch what their most-active users actually do, instead of copying engagement tactics from generic blog posts.

Daily active users (DAU) is the count of unique users who opened a mobile app and triggered at least one qualifying event on a given day. Weekly (WAU) and monthly (MAU) active users follow the same logic over longer windows. The DAU/MAU ratio is one of the clearest signals of engagement quality: a ratio above 20% is healthy for most B2C apps, with social apps typically above 50% and ecommerce at 10-20%. The number alone matters less than the trend for the cohorts you care about.

Key takeaways

DAU is a lagging indicator. By the time DAU falls, the causes (bad first session, broken onboarding, missing re-engagement) have usually been in place for weeks or months. The fastest way to raise DAU next quarter is to fix first-session activation this quarter.

The DAU/MAU ratio is more useful than absolute DAU for diagnosing engagement quality. Typical ranges by category: social apps above 50%, productivity 25-35%, ecommerce 10-20%, fintech 15-25%. The trend matters more than the absolute number.

Measuring "opened the app" as engagement is a mistake. An app open is a visit, not engagement. Define a qualifying event that means the user got some value (logged a workout, added a contact, completed a core action), and count only those.

The highest-leverage DAU lever for most apps is the first session. Users who complete a meaningful action on day one retain at multiples of the rate of those who don't, and the DAU impact compounds over time.

Tara, UXCam's AI analyst, is particularly useful for DAU diagnosis because it can tell you what specific behaviors your highest-retention users have in common, which often reveals a non-obvious activation path worth making more prominent.

What is an active user?

An active user is a user who performed a qualifying action in your mobile app during a given time window. What counts as a qualifying action is up to you, but the choice matters enormously.

Qualifying events, not opens

Most mobile analytics tools default to "app open" as the signal for a DAU count. That's too loose. A user who opens the app, sees a notification, and closes it doesn't count as engaged in any meaningful sense. Define a minimum qualifying event that indicates real interaction: a search, a scroll past the first screen, a tap on a feature, anything that distinguishes "visited" from "used."

Duolingo counts a user as active when they start a lesson. Strava counts them when they start a recorded activity. Headspace counts them when they start a meditation session. Each of these is a deliberate choice that ties DAU to actual product value, not just app opens.
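To make the difference concrete, here is a minimal sketch of counting DAU two ways from the same event log. The event names, the tuple layout, and the choice of `lesson_started` as the qualifying event are assumptions for illustration, not the schema of any particular analytics SDK.

```python
from datetime import date

# Hypothetical event log: (user_id, event_name, day).
EVENTS = [
    ("u1", "app_open", date(2026, 1, 5)),
    ("u1", "lesson_started", date(2026, 1, 5)),
    ("u2", "app_open", date(2026, 1, 5)),
    ("u3", "app_open", date(2026, 1, 5)),
    ("u3", "lesson_started", date(2026, 1, 5)),
    ("u3", "lesson_started", date(2026, 1, 5)),
]

# Assumption: the team has agreed that starting a lesson is the qualifying event.
QUALIFYING_EVENTS = {"lesson_started"}


def daily_active_users(events, day, qualifying=None):
    """Return the set of unique users active on `day`.

    With qualifying=None, any event counts (open-based DAU).
    Otherwise only events whose name is in `qualifying` count.
    """
    return {
        user_id
        for user_id, name, event_day in events
        if event_day == day and (qualifying is None or name in qualifying)
    }


day = date(2026, 1, 5)
open_based = daily_active_users(EVENTS, day)
value_based = daily_active_users(EVENTS, day, QUALIFYING_EVENTS)
print(len(open_based), len(value_based))  # prints "3 2"
```

In this toy log, one of the three users who opened the app never started a lesson, so the open-based count overstates engagement by one user.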

Common types of active user metrics

| Metric | Window | What it tells you |
|---|---|---|
| DAU | 1 day | Immediate engagement, daily habit formation |
| WAU | 7 days | Weekly cycle, week-over-week growth trend |
| MAU | 30 days | Total monthly reach, revenue-relevant scale |
| DAU/MAU ratio | 30 days | Engagement quality, "stickiness" |
| L7 | Trailing 7 days | Number of days out of the last 7 on which each user was active |
| L28 | Trailing 28 days | Number of days out of the last 28 on which each user was active; a mature retention signal, less volatile than DAU |

L7 and L28 (popularized by Facebook and Snapchat) are more informative than raw DAU for most consumer apps because they describe distributed activity rather than single-day spikes.
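As a rough sketch of how an L7 figure could be computed, the snippet below assumes L7 means "the number of distinct days, out of the trailing seven, on which a user fired a qualifying event". The function name `lness` and the data layout are hypothetical.

```python
from collections import Counter
from datetime import date, timedelta


def lness(active_days_by_user, end_day, window=7):
    """Map each user to the number of distinct days they were active in the
    trailing `window` days ending on `end_day` (inclusive).
    Users with no activity in the window are omitted."""
    start_day = end_day - timedelta(days=window - 1)
    counts = {}
    for user_id, days in active_days_by_user.items():
        n = sum(1 for d in set(days) if start_day <= d <= end_day)
        if n > 0:
            counts[user_id] = n
    return counts


# Hypothetical data: the days on which each user fired a qualifying event.
ACTIVE_DAYS = {
    "u1": [date(2026, 1, d) for d in range(1, 8)],  # active all 7 days
    "u2": [date(2026, 1, 3), date(2026, 1, 7)],     # active 2 days
    "u3": [date(2026, 1, 7)],                       # active 1 day
    "u4": [date(2025, 12, 20)],                     # outside the window
}

l7 = lness(ACTIVE_DAYS, end_day=date(2026, 1, 7))
histogram = Counter(l7.values())  # how many users were active on N of 7 days
print(l7)  # {'u1': 7, 'u2': 2, 'u3': 1}
```

All three of these users would count toward DAU on January 7, but the histogram shows that only one of them has a daily habit. Passing `window=28` gives the L28 equivalent.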

How to track active users

Instrument the right events

Before you can grow DAU, you need to measure it correctly. Start by defining your qualifying event in a shared doc. Debate it until the team agrees. Then instrument it consistently across iOS, Android, and (if applicable) web.

Common qualifying events by category:

Fitness apps (think Strava, MyFitnessPal): workout started, goal logged

Ecommerce apps: product viewed, item added to cart

Fintech apps: balance checked, transaction viewed, transfer initiated

Streaming apps: content started, 3+ minutes of playback

Pick the right tool

Most teams track active users through their product analytics platform (Mixpanel, Amplitude, or UXCam). The differences that matter for active-user tracking: how easy it is to define "qualifying" events, how cleanly the tool handles sessionization (especially across backgrounded apps), and whether you can segment actives by cohort, platform, and acquisition source out of the box.

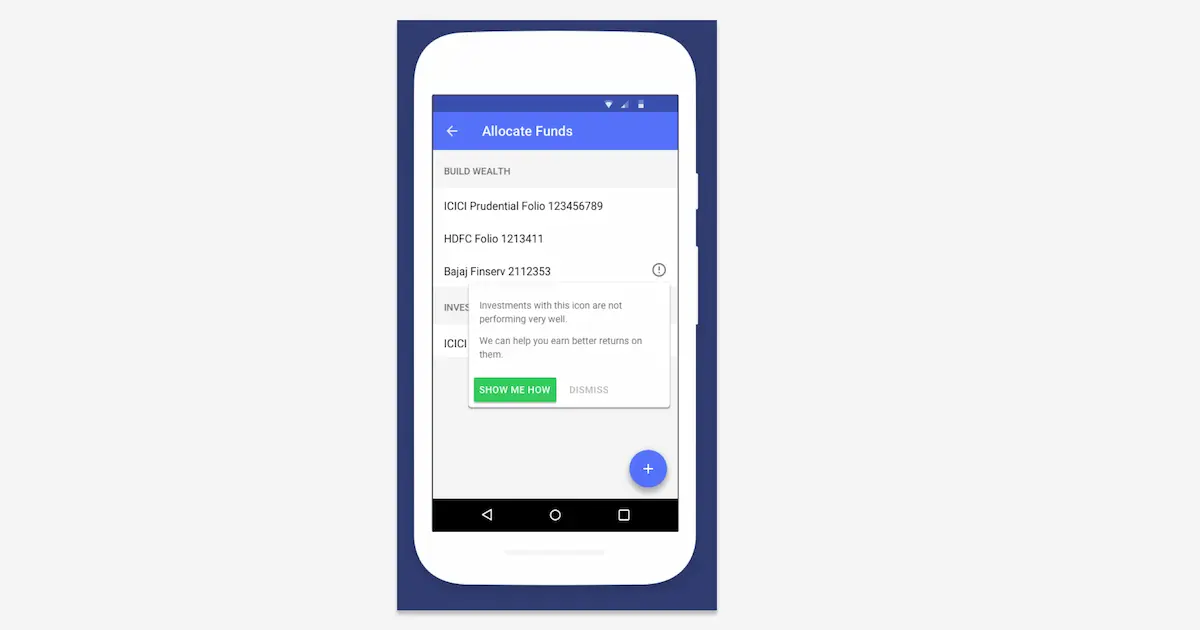

UXCam adds one capability most alternatives don't: every DAU count is backed by real session replays. When DAU drops, you click into the drop, filter to the users who churned that day, and watch what they did in their last session. That's the diagnostic step most teams skip because it takes too long without the tool. Tara takes it a step further by summarizing patterns across thousands of replays automatically.

How to increase daily active users

Here are the ten strategies I'd rank as highest-impact for mobile DAU growth in 2026, in priority order.

1. Nail the first session (biggest lever)

Users who take a meaningful action in their first session retain at multiples of the rate of users who open the app, scroll, and close. That multiplier compounds over the retention curve, which is why first-session activation is the single biggest DAU lever.

What to do:

Define your "activation event" (the action that predicts long-term retention)

Measure how many users hit it in session one

Watch session replays of users who don't, looking for the specific friction points

Remove barriers before the activation event: permission prompts, account setup, tutorial walls

Housing.com used this approach to grow multi-area search adoption from 20% to 40%. They watched 50-100 session replays daily to understand why users weren't discovering a feature, then redesigned the entry point. That's the pattern.

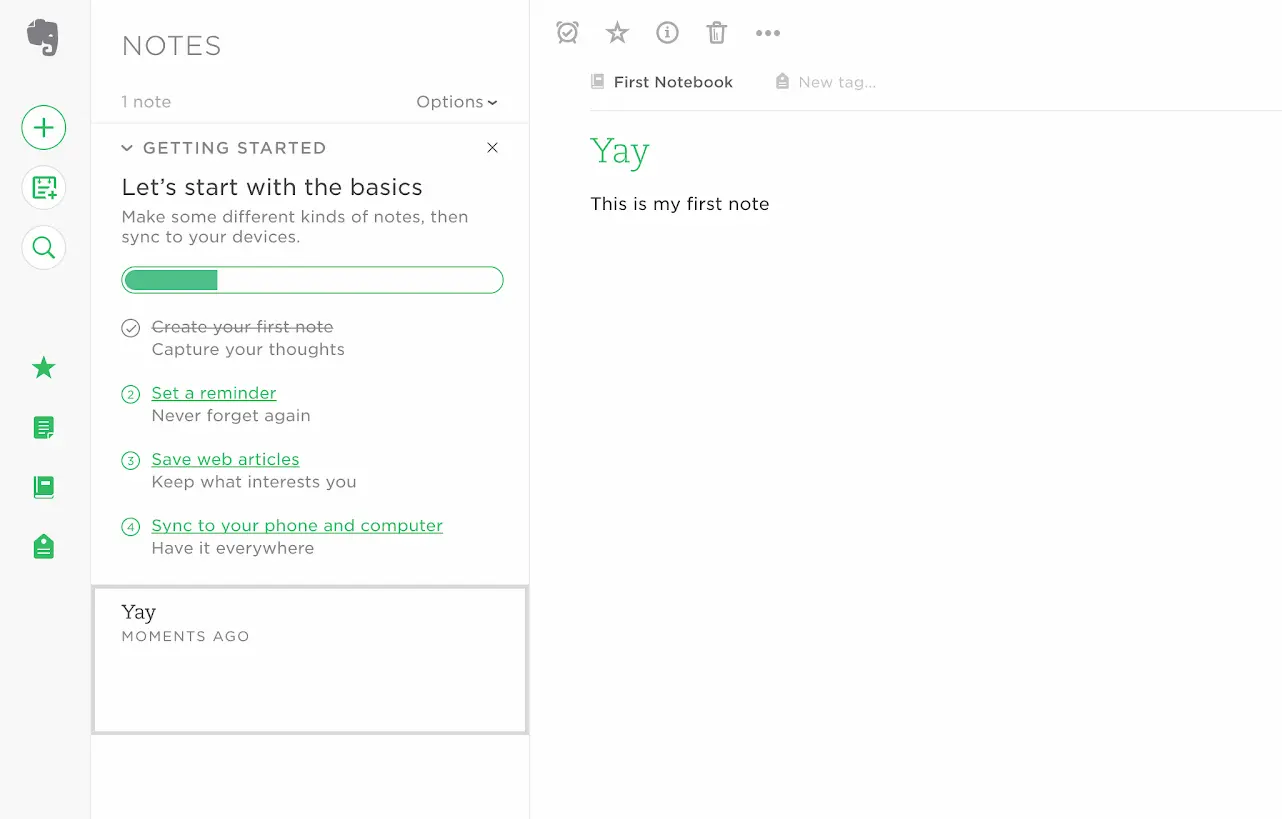

2. Build an efficient onboarding process

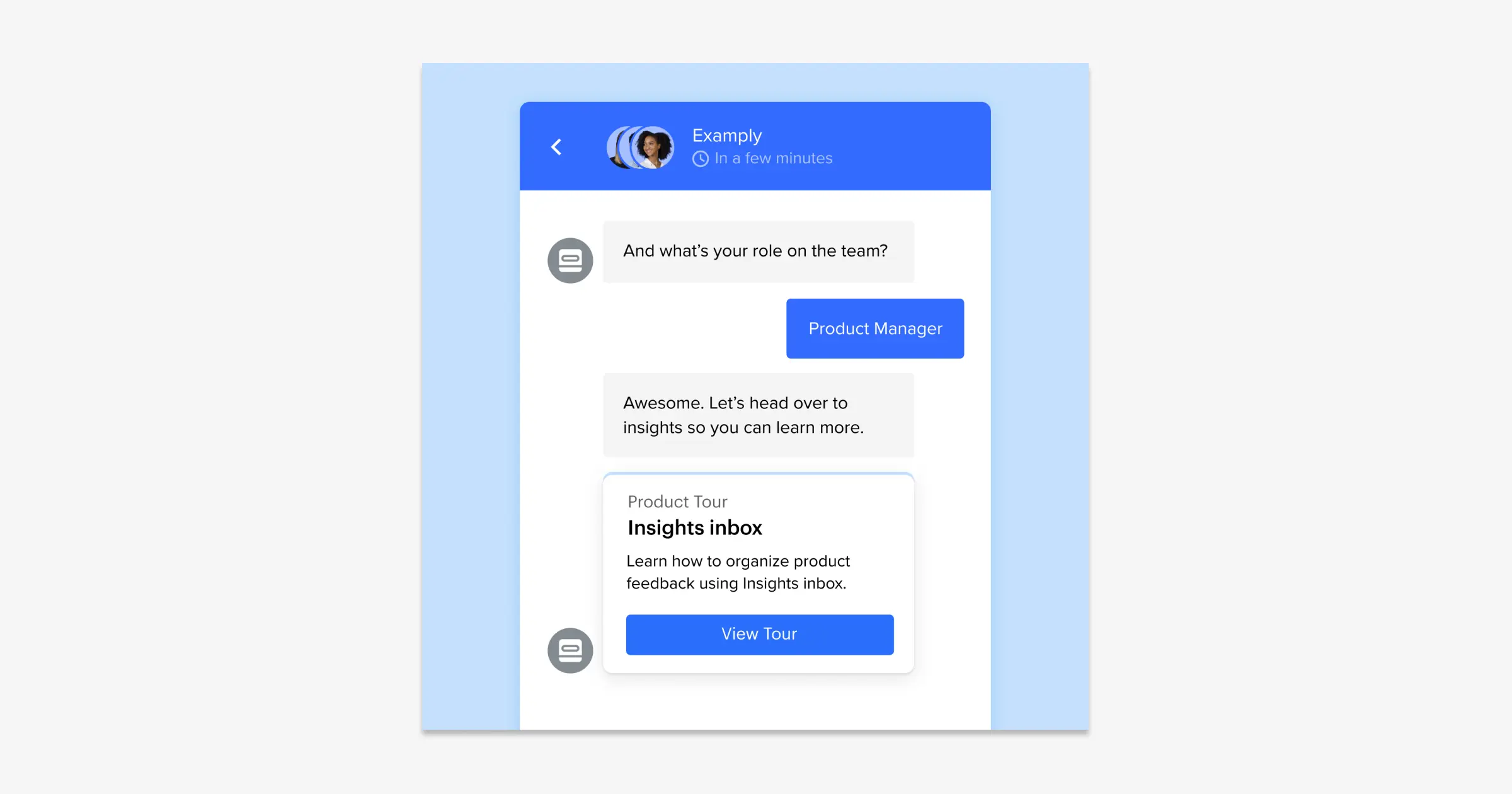

Onboarding isn't tutorial screens. Good onboarding is a sequence of small wins that show the user the value of the app through use, not explanation. The best onboarding flows I've worked with have three properties: they are short (four screens or fewer), personalized (based on one or two quick questions), and interrupted by the first meaningful action (don't front-load everything).

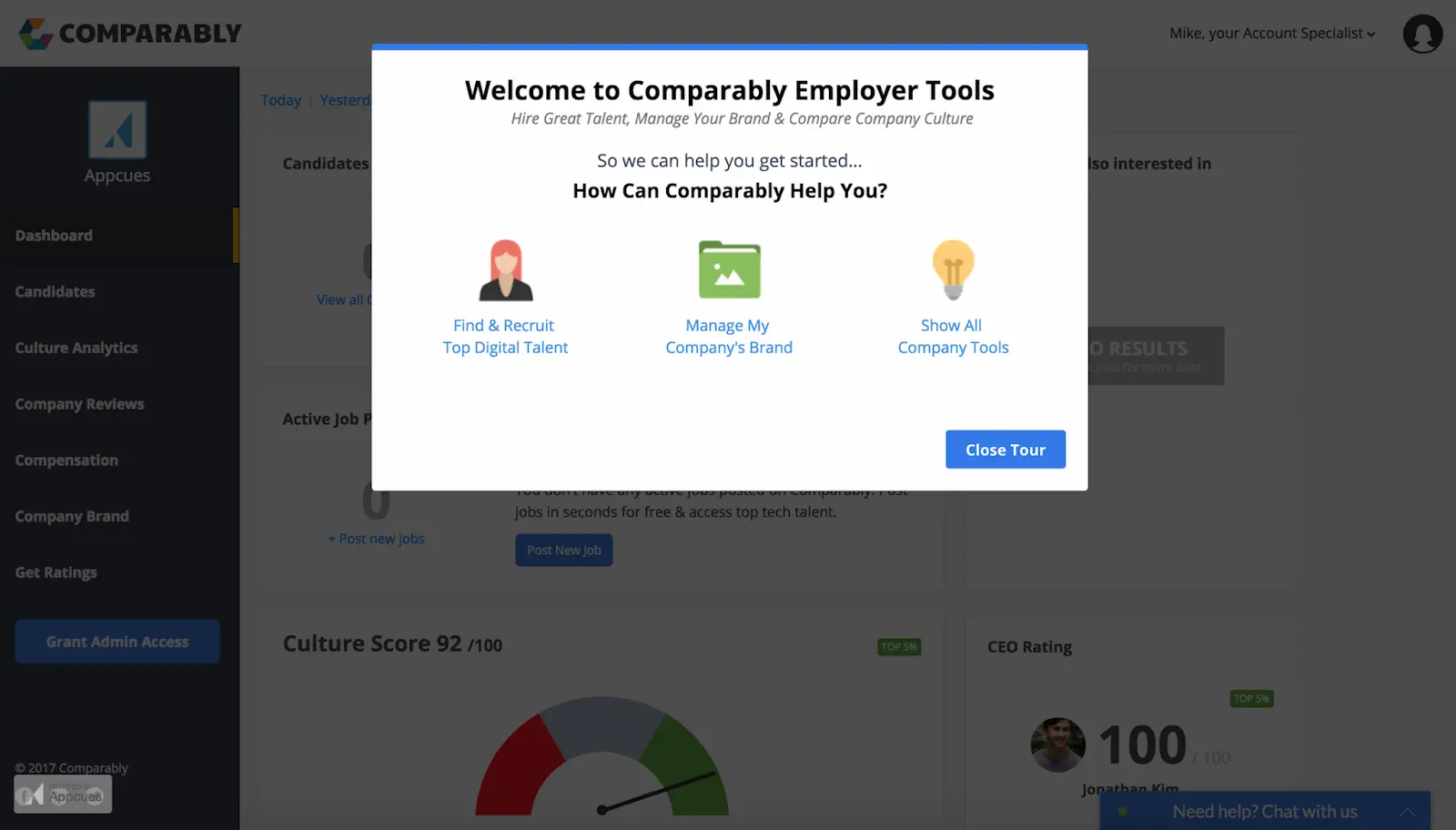

3. Use product tours and tooltips sparingly

Product tours are useful for surfacing new features to existing users, but they are overused for onboarding. A seven-step walkthrough of your app's main screens doesn't improve new-user retention. It trains users to skip.

Where tours work well: pointing existing users at a new feature they haven't discovered. Where they fail: using them in place of a genuinely intuitive UI. If users need a tour to find your core action, fix the UI instead.

4. Personalize based on behavior, not demographics

Personalization based on demographics (age, gender, location) has weaker predictive power than personalization based on observed behavior. Two users in the same demographic segment often behave completely differently in-app.

What I recommend: segment your users by what they've actually done (first feature used, primary use case, session frequency), then customize the home screen, notifications, and re-engagement based on those segments. This is data-driven personalization, not guesswork.

Inspire Fitness used behavior-based personalization plus session-replay diagnostics to boost time-in-app by 460% and cut rage taps by 56%. The pattern: watch what users do, segment accordingly, adjust the experience per segment.

5. Optimize UX writing

Copy does more work in mobile than most teams credit. A clearer error message, a better CTA label, or a less aggressive empty-state headline can lift engagement measurably on its own.

What to audit: every error message (do they tell the user what to do next?), every button label (does it describe the action or is it generic?), every empty state (does it motivate the next action or just confirm emptiness?). I've seen teams lift conversion rates by 10-15% on single-screen copy rewrites.

6. Build a re-engagement loop

A re-engagement loop is a recurring trigger that brings users back before they churn. The most common: push notifications, email, and in-app reminders. Pick one channel and use it well rather than spreading across three at low intensity. I keep push frequency below three per week for most categories and personalize based on observed behavior (which feature the user last used) rather than demographics.

The re-engagement loop should trigger based on a signal, not on a timer. "User hasn't opened the app in 3 days" is a timer. "User started a task and didn't complete it within 48 hours" is a signal. Notifications sent on signal-based triggers are opened at materially higher rates.
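A signal-based trigger with a frequency cap can be sketched in a few lines. This is an illustration under stated assumptions, not a production scheduler: the function name, its parameters, and the cap of two pushes per week (one way to stay below three) are all hypothetical.

```python
from datetime import datetime, timedelta

# Assumptions for illustration: the 48-hour threshold mirrors the example
# above; a weekly cap of 2 is one way to stay below three pushes per week.
INCOMPLETE_AFTER = timedelta(hours=48)
MAX_PUSHES_PER_WEEK = 2


def should_send_reminder(task_started_at, task_completed_at, recent_push_times, now):
    """Return True when an unfinished task warrants a reminder push."""
    # Signal check: the user started a task and has not completed it.
    if task_started_at is None or task_completed_at is not None:
        return False
    # Wait until the task has been left unfinished for the full threshold.
    if now - task_started_at < INCOMPLETE_AFTER:
        return False
    # Frequency cap: count pushes sent in the trailing 7 days.
    week_ago = now - timedelta(days=7)
    pushes_this_week = sum(1 for sent_at in recent_push_times if sent_at > week_ago)
    return pushes_this_week < MAX_PUSHES_PER_WEEK


now = datetime(2026, 1, 10, 12, 0)
started = datetime(2026, 1, 8, 9, 0)  # 51 hours before `now`
print(should_send_reminder(started, None, [], now))  # True
```

Note that nothing here depends on how long ago the user last opened the app. The trigger fires only when there is an unfinished task to point the user back to.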

7. Give users a reason to return within 24 hours

Streak mechanics, daily content drops, scheduled reminders, time-gated rewards. The specific pattern varies by category, but every app with above-median DAU has some intentional mechanic that creates a reason for users to come back within 24 hours of their last session. Duolingo's streaks are the most famous example. Strava's activity feed is another (your friends' workouts show up and you want to match or beat them).

8. Reduce friction across core flows

Run a rage-tap audit on your three most-used screens. Rage taps (four or more taps in a second on the same spot) are a clear signal that something users expect to work doesn't work. UXCam's Issue Analytics surfaces these automatically. Every rage-tap fix I've shipped has moved session completion rate, which feeds directly into DAU.
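If you want to reason about the definition yourself, the sketch below flags bursts of four or more taps within one second on roughly the same spot in a raw tap log. It is not how UXCam's Issue Analytics works internally; the 30-pixel radius and the `(timestamp, x, y)` layout are assumptions I've picked for illustration.

```python
from math import hypot


def find_rage_taps(taps, min_taps=4, window_s=1.0, radius_px=30.0):
    """Find bursts of consecutive taps that look like rage taps.

    taps: list of (timestamp_seconds, x, y), sorted by timestamp.
    Returns a list of (start_time, x, y), one entry per burst, where a burst
    is `min_taps` or more consecutive taps within `window_s` seconds and
    `radius_px` pixels of the burst's first tap.
    """
    bursts = []
    i = 0
    while i < len(taps):
        t0, x0, y0 = taps[i]
        j = i
        # Extend the burst while the next tap is close in both time and space.
        while (
            j + 1 < len(taps)
            and taps[j + 1][0] - t0 <= window_s
            and hypot(taps[j + 1][1] - x0, taps[j + 1][2] - y0) <= radius_px
        ):
            j += 1
        if j - i + 1 >= min_taps:
            bursts.append((t0, x0, y0))
            i = j + 1  # skip past the taps already counted in this burst
        else:
            i += 1
    return bursts


# Hypothetical tap log: four quick taps on one spot, then two slower taps elsewhere.
TAPS = [
    (0.0, 100, 200), (0.2, 102, 201), (0.4, 99, 199), (0.6, 101, 200),
    (3.0, 300, 400), (3.5, 300, 400),
]
print(find_rage_taps(TAPS))  # [(0.0, 100, 200)]
```

Grouping the returned coordinates by screen gives a rough ranking of which UI elements to look at first.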

9. Add a community layer (where it fits)

An in-app community can lift DAU by giving users a reason to open the app that has nothing to do with your core product. Flo's community threads drive daily opens beyond the period-tracking use case. Strava's social feed keeps users opening the app on rest days. This only works when community fits the category. Budgeting apps and single-player productivity tools don't benefit.

10. Measure activation rate and iterate weekly

Define your activation event (the in-app action that best predicts day-30 retention). Measure the percentage of new users who hit it in session one. Review the number weekly. Run session replays on the cohort that didn't activate. Fix the top friction point. Measure again. This flywheel is the one that compounds DAU over time, and it never stops being useful.
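The weekly review number can be computed with something as small as the sketch below. The record layout (`user_id`, install day, whether the user hit the activation event in session one) is an assumption, and users are grouped by the ISO week of their install date.

```python
from collections import defaultdict
from datetime import date

# Hypothetical records: (user_id, install_day, activated_in_first_session).
NEW_USERS = [
    ("u1", date(2026, 1, 5), True),
    ("u2", date(2026, 1, 6), False),
    ("u3", date(2026, 1, 8), True),
    ("u4", date(2026, 1, 12), False),
    ("u5", date(2026, 1, 13), False),
    ("u6", date(2026, 1, 15), True),
    ("u7", date(2026, 1, 16), False),
]


def weekly_activation_rate(new_users):
    """Return {(iso_year, iso_week): share of that week's installs that activated}."""
    totals = defaultdict(int)
    activated = defaultdict(int)
    for _user_id, install_day, did_activate in new_users:
        iso = install_day.isocalendar()
        week = (iso[0], iso[1])  # ISO year and ISO week number
        totals[week] += 1
        if did_activate:
            activated[week] += 1
    return {week: activated[week] / totals[week] for week in sorted(totals)}


rates = weekly_activation_rate(NEW_USERS)
for (year, week), rate in rates.items():
    print(f"{year}-W{week:02d}: {rate:.0%}")
```

In this made-up data the rate falls from about 67% to 25% between two consecutive weeks, which is the kind of change that should send you to the replays of the users who didn't activate.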

Unlock sustainable DAU growth with UXCam

UXCam is a product intelligence platform that automatically captures every user interaction on mobile apps and websites, with no manual event tagging. For DAU growth, the combination of quantitative analytics (retention cohorts, funnels) and qualitative analytics (session replay, issue detection) in one platform is what makes it actionable. Dashboards tell you DAU dropped. Session replay tells you why. Tara, UXCam's AI analyst, ranks which fixes will move DAU the most, so teams get answers without waiting on analysts.

Installed in 37,000+ products, mobile-first, web-ready. Request a demo to see it for your app.

Frequently asked questions

What is a daily active user (DAU)?

A daily active user is a unique user who opened your mobile app and performed a qualifying action in a single day. The qualifying action should be meaningful (a logged workout, a posted message, a completed transaction), not just an app open. Counting opens as DAU inflates the number without reflecting real engagement.

How do you calculate DAU, WAU, and MAU?

DAU is the count of unique users active in a single day. WAU is the count of unique users active on any day of a 7-day window. MAU is the count of unique users active on any day of a 30-day window. Use your product analytics tool to dedupe correctly: a user active on multiple days in the window should be counted once, not once per day.
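If you ever need to sanity-check your tool's numbers against raw data, the deduplication comes down to building a set of user IDs per window. A minimal sketch, assuming a hypothetical list of `(user_id, day)` pairs with one entry per qualifying event:

```python
from datetime import date, timedelta


def active_users(activity, end_day, window_days):
    """Unique users with at least one qualifying event in the trailing
    `window_days` days ending on `end_day` (inclusive)."""
    start_day = end_day - timedelta(days=window_days - 1)
    # A set keeps each user once, however many days they were active.
    return {user_id for user_id, day in activity if start_day <= day <= end_day}


# Hypothetical (user_id, day) pairs, one per qualifying event.
ACTIVITY = [
    ("u1", date(2026, 1, 30)), ("u1", date(2026, 1, 29)), ("u1", date(2026, 1, 10)),
    ("u2", date(2026, 1, 30)),
    ("u3", date(2026, 1, 26)),
    ("u4", date(2026, 1, 5)),
    ("u5", date(2025, 12, 1)),  # outside the 30-day window
]

today = date(2026, 1, 30)
dau = len(active_users(ACTIVITY, today, 1))
wau = len(active_users(ACTIVITY, today, 7))
mau = len(active_users(ACTIVITY, today, 30))
stickiness = dau / mau  # the DAU/MAU ratio
print(dau, wau, mau, stickiness)  # prints "2 3 4 0.5"
```

User `u1` appears on three days in the window but contributes one to each count, which is the behavior to verify in whatever tool you use.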

What is a good DAU/MAU ratio?

DAU/MAU ratio reference ranges by category: social apps 50% or higher, productivity apps 25-35%, ecommerce 10-20%, fintech 15-25%. The ratio matters less than the trend. Is it trending up or down for your cohorts? That's the real question.

How do you increase daily active users?

The highest-leverage move is improving first-session activation. Users who complete a meaningful action on day one retain at multiples of the rate of those who don't, so the DAU benefit compounds. After that, a well-designed onboarding flow, behavior-based personalization, and one well-run re-engagement channel (email or push, not both at low intensity) cover most of the gains most teams need.

Why are my DAU numbers dropping?

Common causes I see in session replays: a recent release introduced a bug in the core flow (often only on specific device classes), onboarding steps are losing users before they activate, push notification frequency is too high and users have muted or disabled them, or the app isn't giving users a clear reason to return after session one. Watch replays of users who churned in the last 7 days to find the specific cause.

What's the difference between DAU and retention?

DAU measures today's engagement. Retention measures whether users from a past cohort come back over time. You can have stable DAU while retention leaks (new users replacing churning old users), which is an unstable pattern. Always look at both. Retention curves for specific install cohorts tell you whether the product is getting stickier or not.
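For contrast with a DAU count, here is a rough sketch of day-N retention for a single install cohort. It uses the strict definition (the user was active exactly N days after install); the data layout and names are assumptions, and your analytics tool may use a different retention definition.

```python
from datetime import date, timedelta


def day_n_retention(installs, active_days, cohort_day, n):
    """Share of users who installed on `cohort_day` and were active exactly
    `n` days later. Returns None when the cohort is empty."""
    cohort = {user_id for user_id, day in installs.items() if day == cohort_day}
    if not cohort:
        return None
    target_day = cohort_day + timedelta(days=n)
    returned = {u for u in cohort if target_day in active_days.get(u, set())}
    return len(returned) / len(cohort)


# Hypothetical data: install day per user, and the days each user was active.
INSTALLS = {
    "u1": date(2026, 1, 1),
    "u2": date(2026, 1, 1),
    "u3": date(2026, 1, 1),
    "u4": date(2026, 1, 1),
    "u5": date(2026, 1, 2),
}
ACTIVE_DAYS = {
    "u1": {date(2026, 1, 1), date(2026, 1, 2), date(2026, 1, 8)},
    "u2": {date(2026, 1, 1), date(2026, 1, 2)},
    "u3": {date(2026, 1, 1)},
    "u4": {date(2026, 1, 1)},
    "u5": {date(2026, 1, 2), date(2026, 1, 3)},
}

cohort_day = date(2026, 1, 1)
d1 = day_n_retention(INSTALLS, ACTIVE_DAYS, cohort_day, 1)
d7 = day_n_retention(INSTALLS, ACTIVE_DAYS, cohort_day, 7)
print(d1, d7)  # prints "0.5 0.25"
```

Running this per install cohort and comparing the curves across cohorts shows whether retention is improving, which a flat DAU line cannot tell you.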

How many days of DAU data do I need to see trends?

For weekly cycles: at least four weeks, so you can compare week-over-week without being fooled by a single bad week. For campaign-level DAU lifts: at least two weeks post-launch, because early DAU bumps often regress. For cohort retention trends: at least three cohorts with 30 days of data each before you draw conclusions about whether retention is improving.

AUTHOR

Silvanus Alt, PhD

Founder & CEO | UXCam

Silvanus Alt, PhD, is the Co-Founder & CEO of UXCam and an expert in AI-powered product intelligence. Trained at the Max Planck Institute for the Physics of Complex Systems, he built Tara, the AI Product Analyst that not only analyzes user behavior but also recommends clear next steps for better products.
