10 Best A/B Testing Tools for Mobile Apps in 2026

TABLE OF CONTENTS

- How we evaluated these tools (methodology)

- Key takeaways

- The 10 best A/B testing tools for mobile apps

- Comparison table

- 1. Statsig

- 2. Firebase A/B Testing

- 3. Optimizely

- 4. LaunchDarkly

- 5. Split

- 6. Apptimize

- 7. AB Tasty

- 8. Amplitude Experiment

- 9. VWO

- 10. UXCam (behavioral analytics layer)

- Tools I evaluated and excluded (and why)

- What is A/B testing for mobile apps?

- How to form a good A/B testing hypothesis

- Community discussions worth reading

- Improve your experimentation with UXCam

A/B testing tools let mobile product teams serve two or more variants of an in-app experience to different user cohorts, measure which variant moves a chosen metric, and decide which one ships. The tool you pick matters less than the discipline around what to test, but the wrong choice can slow a disciplined team down by weeks.

I evaluated 15+ mobile experimentation tools for this list and narrowed to 10 based on four criteria (detailed in the methodology below). The landscape shifted meaningfully in the last year: feature-flag-first platforms have eaten a big part of the market that used to belong to Optimizely, and Statsig in particular has pulled ahead on price, UX, and mobile SDK quality for small-to-mid teams.

How we evaluated these tools (methodology)

Every tool on this list was assessed on four criteria, weighted by how much each matters to a mobile product team:

Mobile SDK quality (30% weight): native iOS, Android, React Native, and Flutter support. SDK size, initialization speed, offline behavior, and App Store review compatibility.

Statistical rigor (25% weight): the experimentation engine's ability to calculate significance correctly, support sequential testing, handle multiple metrics, and avoid common statistical pitfalls (peeking, underpowered tests).

Pricing accessibility (25% weight): cost relative to the value for the team size. Free tiers, pricing transparency, and whether the tool requires an enterprise commitment to be useful.

Ecosystem fit (20% weight): integrations with analytics tools, crash reporting, CI/CD pipelines, and the broader mobile development workflow.

I also factored in G2 and Capterra ratings as external validation signals, Reddit community sentiment (r/ProductManagement, r/ExperienceOptimization), and direct conversations with mobile PMs using these tools in production.

Tools are ranked in my recommended order for a mobile team starting fresh in 2026. Your ranking may differ depending on your existing stack, team size, and experiment volume.

Key takeaways

For most small-to-mid mobile teams in 2026, Statsig or Firebase A/B Testing covers the use case at a price that makes sense. Statsig if you care about experiment velocity and analytics depth, Firebase if you're already deep in the Google ecosystem.

Optimizely remains the enterprise default, but pricing has pushed many teams toward feature-flag-first alternatives like Statsig, Split, and LaunchDarkly.

The best setup pairs an experimentation platform with a behavioral analytics tool (like UXCam) so you understand not just which variant won, but why.

Don't start with the tool. Start with a hypothesis that names the metric, the target cohort, and the expected effect size. Without those three, no tool will save the experiment.

Watch out for mobile-specific gotchas: App Store review cycles, iOS App Tracking Transparency (ATT), and offline-first apps where experiments can't rely on live network calls.

The 10 best A/B testing tools for mobile apps

Statsig

Firebase A/B Testing

Optimizely

LaunchDarkly

Split

Apptimize

AB Tasty

Amplitude Experiment

VWO

UXCam (behavioral analytics layer)

Comparison table

| # | Tool | G2 Rating | Best for | Pricing |

|---|---|---|---|---|

| 1 | Statsig | 4.8/5 (120+ reviews) | Small-to-mid teams wanting experimentation + analytics | Free up to 1M events, then usage-based |

| 2 | Firebase A/B Testing | 4.5/5 (as part of Firebase) | Teams already in the Google/Firebase ecosystem | Free |

| 3 | Optimizely | 4.3/5 (500+ reviews) | Enterprise experimentation programs (20+ concurrent tests) | ~$30K-50K/yr |

| 4 | LaunchDarkly | 4.7/5 (250+ reviews) | Feature-flag-first teams adding experiments on top | ~$10/mo per seat |

| 5 | Split | 4.5/5 (90+ reviews) | Data-heavy teams where engineers run experiments | ~$8K/yr |

| 6 | Apptimize | 4.3/5 (30+ reviews) | Mobile-only teams needing visual editor changes | Contact for quote |

| 7 | AB Tasty | 4.5/5 (200+ reviews) | EU-based companies with data hosting requirements | ~$2K/mo |

| 8 | Amplitude Experiment | 4.5/5 (as part of Amplitude) | Teams already using Amplitude for product analytics | Add-on to Amplitude |

| 9 | VWO | 4.3/5 (400+ reviews) | Web+mobile teams wanting one vendor | ~$200/mo |

| 10 | UXCam | 4.7/5 (200+ reviews) | Understanding why experiment variants win or lose | Free up to 3K sessions |

G2 ratings retrieved April 2026. Pricing is approximate and changes frequently; always confirm with the vendor.

1. Statsig

G2 rating: 4.8/5 (120+ reviews) | Best for: small-to-mid mobile teams | Pricing: free up to 1M events/mo, then usage-based

My top pick for most mobile teams starting fresh in 2026. Statsig combines feature flags, A/B testing, and product analytics in one platform with pricing that makes sense below enterprise scale. The mobile SDK support is broad (iOS, Android, React Native, Flutter), the statistical engine handles sequential testing well, and the free tier covers most early-stage teams.

Pros:

Generous free tier (1M events/month) that many small teams never outgrow

Sequential testing engine lets you stop experiments early when results are clear, saving time and traffic

Built-in product analytics means you don't need a separate analytics tool for basic experiments

Developer-friendly SDKs with strong documentation and responsive community Slack

Cons:

Free tier is event-based, so high-volume apps can outgrow it faster than expected

If you already own Amplitude or Mixpanel, there's some analytics overlap

The brand is newer than Optimizely or LaunchDarkly, so enterprise procurement teams may push back

Why it's #1: best combination of mobile SDK quality, statistical rigor, pricing, and iteration speed for teams that don't need (or want) an enterprise contract.

2. Firebase A/B Testing

G2 rating: 4.5/5 (as part of Firebase) | Best for: Firebase-native teams | Pricing: free

Google's built-in mobile A/B testing, running on top of Remote Config. Free at any scale, deep integration with the rest of Firebase (Analytics, Crashlytics, Cloud Messaging, Remote Config), and the cleanest iOS/Android SDK integration if you're already in the Firebase ecosystem.

Pros:

Completely free, no event limits for experimentation

Tight integration with Firebase Analytics, Crashlytics, and Remote Config

Simple setup if you're already using Firebase for analytics and push

Bayesian inference is available alongside frequentist

Cons:

Statistical analysis is basic compared to Statsig or Optimizely

The experiment management UI is clunky for managing more than 5-10 concurrent experiments

Limited audience targeting compared to dedicated experimentation tools

No built-in multivariate testing support

Why it's #2: unbeatable for teams already in the Google ecosystem who need basic experimentation at zero cost.

3. Optimizely

G2 rating: 4.3/5 (500+ reviews) | Best for: enterprise experimentation programs | Pricing: ~$30K-50K/yr

The enterprise default. Broad feature set, strong statistical rigor, mature mobile SDKs, and solid support for multivariate testing and personalization. If you run 20+ concurrent experiments and have a dedicated experimentation team, Optimizely still holds up.

Pros:

The most mature mobile experimentation platform on the market

Advanced multivariate and personalization capabilities

Strong statistical engine with stats accelerator for faster results

Well-documented APIs and SDKs with long track record

Cons:

Pricing starts at ~$30K-50K/yr, which rules out most non-enterprise teams

Mobile-specific features haven't seen as much investment in the last 18 months as the web side

The UI can feel heavy for teams running fewer than 10 experiments at a time

Why it's #3: the right choice for enterprise programs where budget exists and the experimentation volume justifies the investment. Not cost-effective for smaller teams.

4. LaunchDarkly

G2 rating: 4.7/5 (250+ reviews) | Best for: feature-flag-first teams | Pricing: ~$10/mo per seat

Feature-flag-first platform that added A/B testing as an extension of its core product. Most companies using LaunchDarkly chose it for release management and flagging, then layered experiments on top.

Pros:

Excellent feature flag management (the best in the category)

Natural integration between "which users see this feature" and "does this feature perform better"

Strong mobile SDKs with server-side rendering fallback

Active developer community and good documentation

Cons:

As a pure experimentation tool, weaker than Statsig or Optimizely

Analytics depth is limited; you'll need a separate product analytics tool

Per-seat pricing adds up fast for larger product teams

Why it's #4: ideal when feature flags drive your deployment process and experimentation is the natural next step.

5. Split

G2 rating: 4.5/5 (90+ reviews) | Best for: data-heavy teams | Pricing: ~$8K/yr

Feature-flag-first platform with a slightly stronger data-science-friendly analytics layer than LaunchDarkly. Good mobile SDK coverage. Positions itself as the developer-oriented alternative to LaunchDarkly.

Pros:

Strong treatment of statistical significance for data-literate teams

Good attribution tracking across experiments

Supports feature flags, kill switches, and progressive rollouts natively

Cons:

The product-manager-facing experience is less polished than Statsig or Optimizely

Brand is less well-known, which makes procurement harder at some companies

Pricing opacity (most plans require a call with sales)

Why it's #5: a solid pick when your engineering team leads experimentation and cares about data rigor.

6. Apptimize

G2 rating: 4.3/5 (30+ reviews) | Best for: mobile-only teams | Pricing: contact for quote

Purely mobile-focused A/B testing platform. Historically strong iOS and Android SDK quality, handles App Store constraints well, and supports visual editor changes without resubmission. Part of the Airship group since 2019.

Pros:

Purpose-built for mobile, not a web tool with mobile bolted on

Visual editor for making changes without code deploys or App Store review cycles

Handles offline/caching scenarios well for mobile-first apps

Cons:

The product has received less investment than the Airship messaging side

The experimentation UX feels a generation behind Statsig

Opaque pricing (enterprise-only)

Smaller community means fewer shared learnings and integrations

Why it's #6: worth considering for mobile-only teams that need visual editing (no App Store resubmission), but the lack of modern UX and transparent pricing push it below the top 5.

7. AB Tasty

G2 rating: 4.5/5 (200+ reviews) | Best for: EU-based companies | Pricing: ~$2K/mo

European experimentation platform with strong mobile SDK support and more accessible pricing than Optimizely. Popular in retail and media.

Pros:

EU data hosting (required for some GDPR compliance interpretations)

Mature visual editor for web experiments

More accessible pricing than Optimizely for mid-market teams

Decent analytics and segmentation built in

Cons:

Less developer mindshare in the US means fewer integrations and community resources

Mobile SDK is fine but not the best part of the product

Smaller ecosystem than Statsig or Optimizely

Why it's #7: the natural pick for EU teams that need local data hosting and don't want to pay Optimizely prices.

8. Amplitude Experiment

G2 rating: 4.5/5 (as part of Amplitude) | Best for: existing Amplitude customers | Pricing: add-on to Amplitude

Amplitude's experimentation product, tightly coupled to their product analytics platform. If you already run Amplitude for analytics, Experiment is the natural add-on that uses the same events and cohorts.

Pros:

Tightest integration between cohort analysis and experiment targeting in the category

Uses Amplitude's existing event taxonomy (no duplicate instrumentation)

Good for teams that want to segment and experiment from the same interface

Cons:

Requires an Amplitude contract; not standalone

Pricing compounds when you add Experiment to an existing Amplitude plan

Not a reason to switch to Amplitude if you're on another analytics platform

Why it's #8: excellent for existing Amplitude customers, not compelling for anyone else.

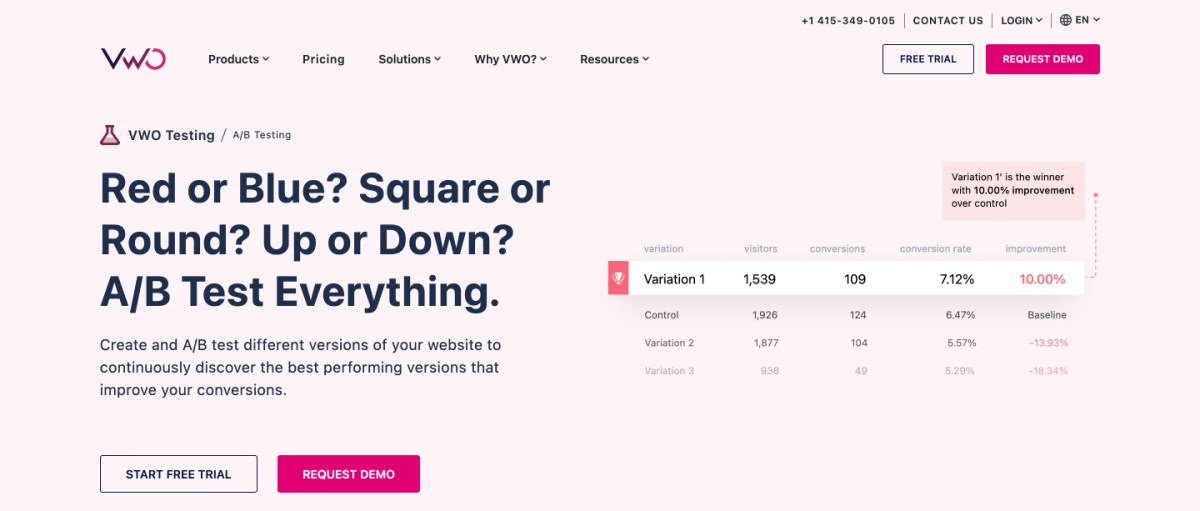

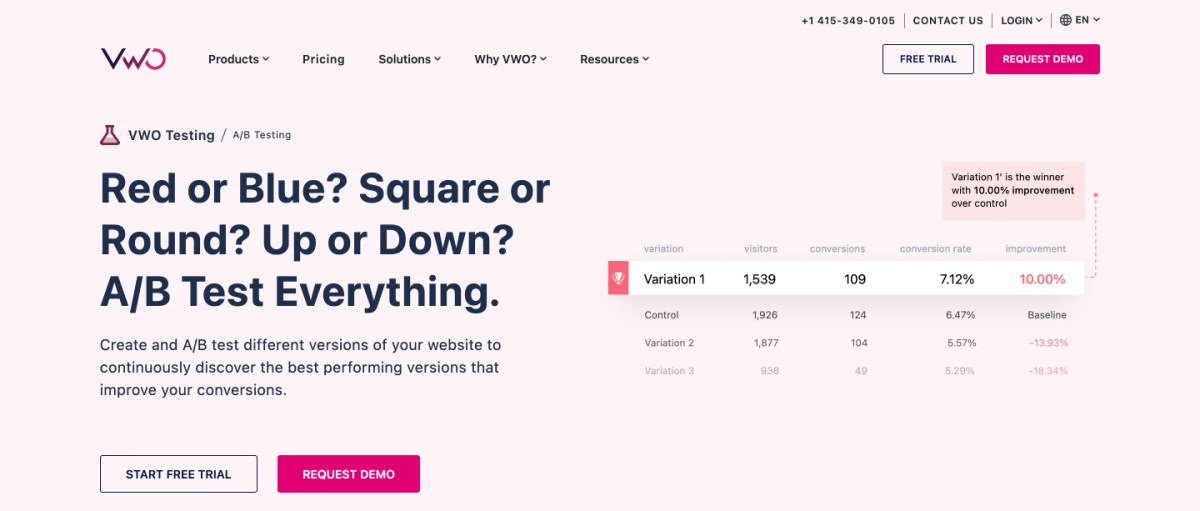

9. VWO

G2 rating: 4.3/5 (400+ reviews) | Best for: web+mobile teams wanting one vendor | Pricing: ~$200/mo

Web-first experimentation platform with mobile SDK support, visual editing, form analytics, and session recording built in. Good value for the price compared to Optimizely.

Pros:

Broad feature set in one tool (experiments, heatmaps, session recording, form analytics)

Visual editor is well-liked for landing-page tests

Accessible pricing compared to enterprise alternatives

Cons:

Mobile SDK is less mature than the web product

If mobile is your primary use case, mobile-first tools are better fits

Session recording quality is below dedicated tools like UXCam or FullStory

Why it's #9: a reasonable choice for teams that split time between web and mobile and want one vendor. Not ideal if mobile is the primary platform.

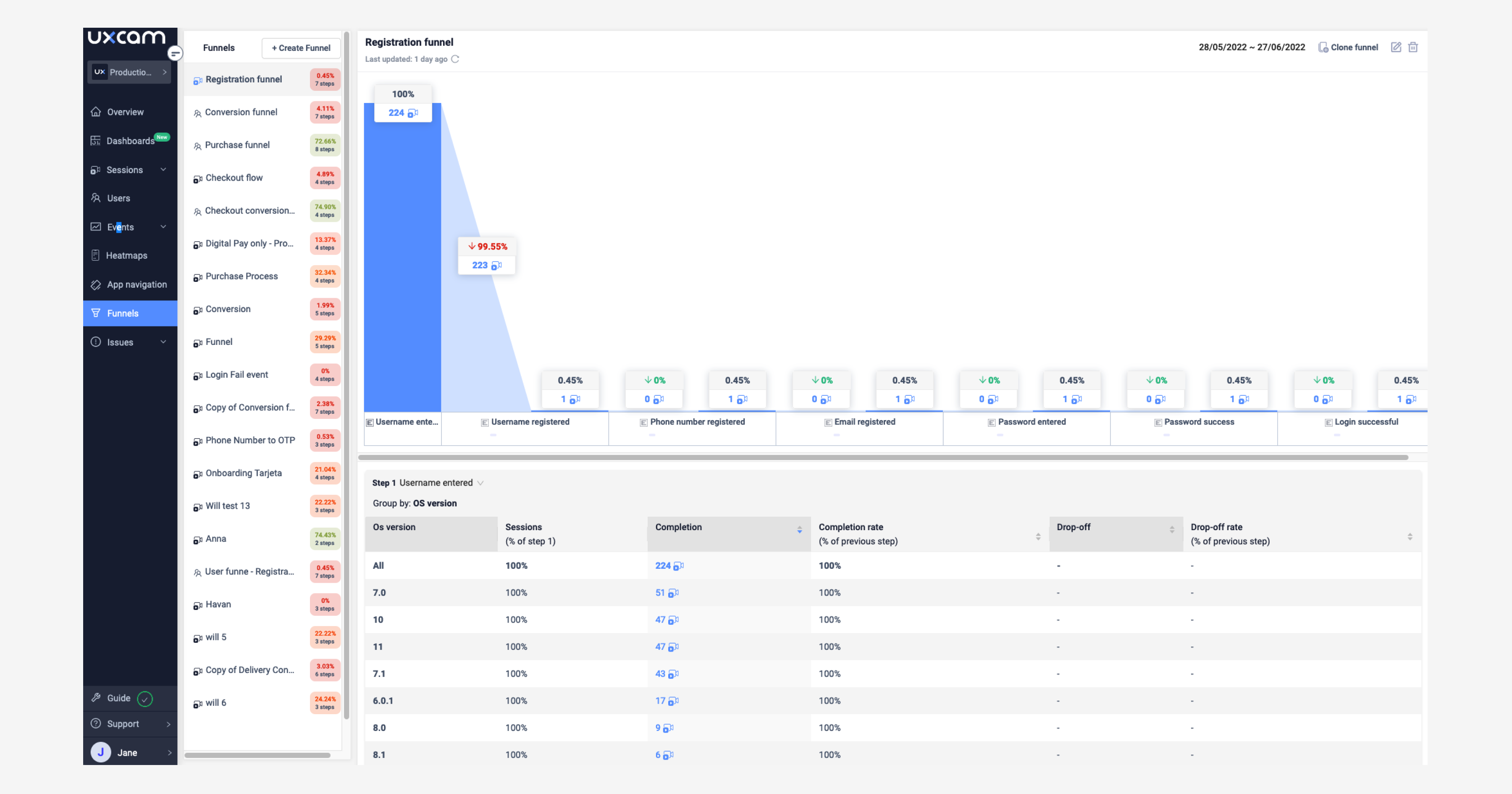

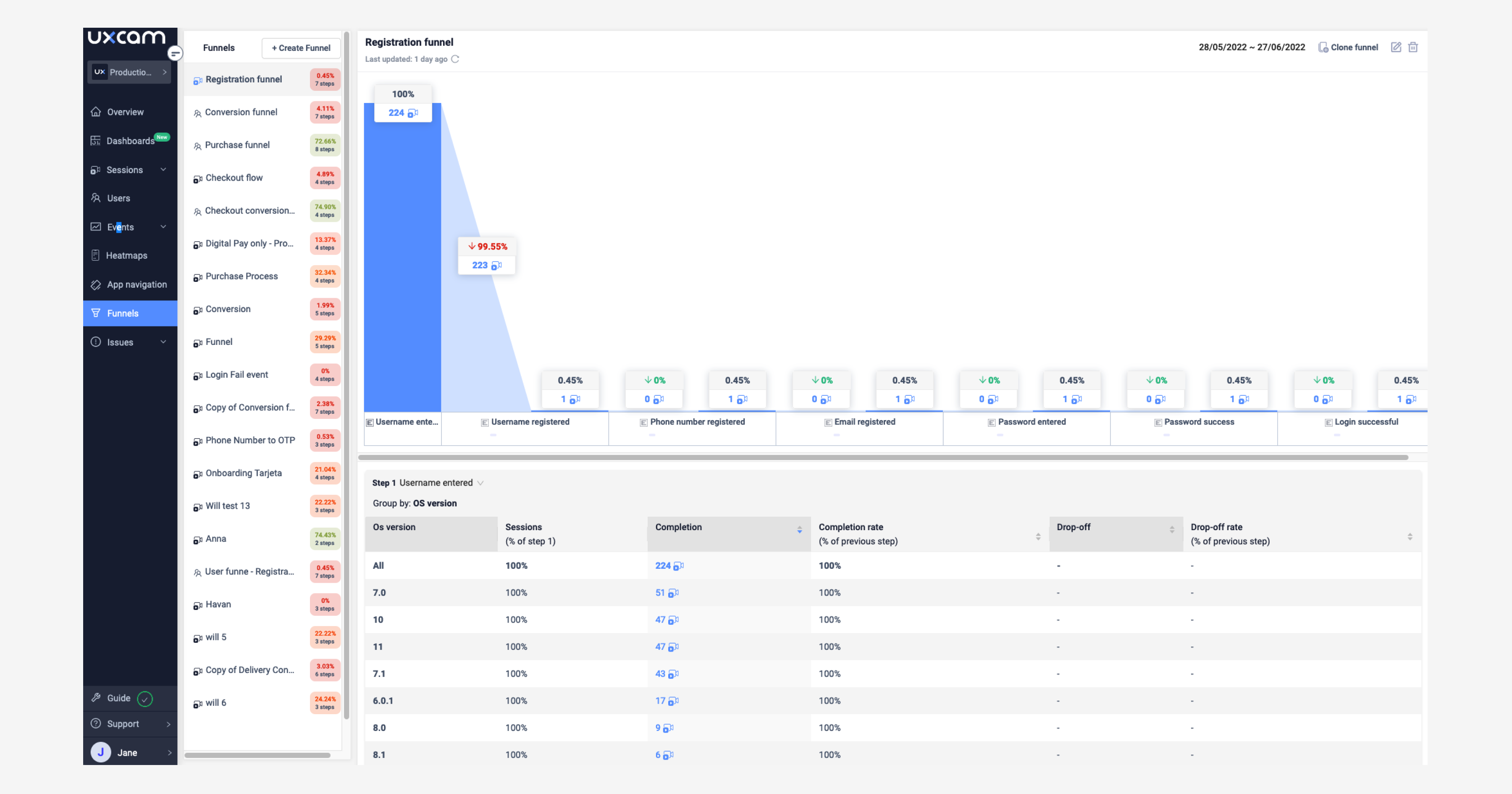

10. UXCam (behavioral analytics layer)

UXCam sits one layer below A/B testing: the behavioral analytics that explains your experiment results. I recommend running it alongside any experimentation platform, because reading the winner of an A/B test is only half the job. The other half is understanding why users in each variant behaved differently.

G2 rating: 4.7/5 (200+ reviews) | Best for: understanding experiment results | Pricing: free up to 3K sessions

When an experiment shows a lift, I want to know what specifically changed in user behavior. When it shows a regression, I need to see which part of the new flow confused users. UXCam's session replay and Tara AI analyst let me watch sessions filtered by experiment variant and surface the behavioral patterns behind the statistical result.

Pros:

Session replay filtered by experiment variant reveals the "why" behind A/B test results

Tara AI summarizes behavioral patterns across thousands of sessions automatically

Works alongside any of the 9 tools above (not a replacement, a complement)

Free tier (3K sessions) covers initial diagnostic work

Cons:

Doesn't serve variants or calculate statistical significance; you still need a separate experimentation tool

Session replay adds SDK weight (~300KB) to your app binary

Why it's #10: not an experimentation tool, but the diagnostic layer that makes every experiment more useful. The combination of experimentation + behavioral analytics is what separates teams that iterate fast from teams that run experiments and learn nothing.

Tools I evaluated and excluded (and why)

Hotjar: strong for qualitative research on web. Mobile support is limited and A/B testing is not its core competency. Use it for surveys and heatmaps, not experiments.

Crazy Egg: similar to Hotjar. Good for landing-page heatmaps. A/B testing module is too basic for serious experimentation.

Google Optimize: shut down by Google in September 2023. If your stack still references it, migrate to Statsig or Firebase.

Convert: the product works, but the community and integration ecosystem are thin enough that the switching cost isn't justified for most teams.

What is A/B testing for mobile apps?

A/B testing is a method of comparing two or more variants of a mobile app experience by serving each variant to a randomly assigned cohort of users and measuring the effect on a chosen metric. Variants can be anything: button copy, onboarding flow, paywall layout, pricing, feature availability. The purpose is to replace guesswork with a measured signal before shipping a change to everyone.

The statistical piece: you need enough users in each variant to see a real effect above random noise. A typical mobile experiment needs thousands of sessions per variant. Tools that calculate statistical significance prevent you from shipping a "winner" that was really just variance.

How to form a good A/B testing hypothesis

A good hypothesis has three parts: a specific change, a named cohort, and an expected effect on a measurable metric. The template I use:

"If we change [specific thing] for [target cohort], we expect [metric] to [move in direction] by [magnitude] because [user-behavior reason]."

Example: "If we remove phone verification from the signup flow for first-time iOS users, we expect signup completion rate to rise by 15% because session replays show 22% of users abandoning on that step."

The "because" part is the hardest and the most important. Without a reason grounded in observed behavior, the experiment is a guess with a spreadsheet. I generate most of my hypotheses by watching session replays of users who didn't convert, then naming the specific friction each replay reveals.

Community discussions worth reading

r/ProductManagement, r/marketing, and the Statsig community Slack are the active communities for A/B testing tool discussions. Google is increasingly surfacing Reddit threads for tool-comparison queries, so staying aligned with real user sentiment matters.

Brands that Reddit users mention frequently for mobile A/B testing (as of early 2026): Statsig, Firebase, LaunchDarkly, and Amplitude Experiment. Optimizely gets mentioned in enterprise threads. Apptimize and AB Tasty have less organic discussion presence.

Improve your experimentation with UXCam

UXCam is a product intelligence platform that automatically captures every user interaction, with no manual tagging. It doesn't run A/B tests, but it tells you why one variant won and another didn't, which is the step most experimentation programs skip. Tara, UXCam's AI analyst, can filter session replays by experiment variant and surface the behavioral patterns that explain your statistical results, giving product teams evidence-based insights to share with stakeholders.

Pair any experimentation platform above with UXCam's session replay, and you close the loop between "what won" and "why it won." Installed in 37,000+ products, mobile-first and now web-ready.

Request a demo to see the workflow for your app.

Frequently asked questions

What is A/B testing in mobile apps?

A/B testing in mobile apps is the practice of serving two or more variants of an in-app experience to randomly assigned user cohorts and measuring which variant moves a chosen metric more. It's the standard method for making data-backed product decisions before rolling a change out to all users.

Which A/B testing tool is best for a small mobile team?

Statsig or Firebase A/B Testing. Both have meaningful free tiers. Statsig is the better pick if you care about experiment velocity and want built-in analytics. Firebase is the better pick if you're already using other Firebase products and want zero additional vendor cost.

How many users do I need to run a mobile A/B test?

At least 1,000 to 2,000 users per variant to detect a 5% or larger effect. For smaller effects (2-3%), you'll need 5,000+ per variant. Apps with under 10K MAU should focus on larger, more obvious tests where effect sizes are bigger rather than trying to detect subtle changes.

What's the difference between A/B testing and feature flags?

Feature flags toggle a feature on or off for specific users. A/B tests use feature flags as the delivery mechanism but add randomized variant assignment and statistical analysis to determine which variant performs better. Most modern platforms (Statsig, LaunchDarkly, Split) offer both capabilities together.

Can you A/B test on iOS with App Tracking Transparency?

Yes. The key: assign variants based on a stable first-party identifier (Firebase Installation ID or your own user ID after signup), not IDFA. Measure conversion events within the app, not cross-network attribution. ATT limits cross-app tracking, not within-app experimentation.

How long should a mobile A/B test run?

At least a full week (to cover weekly behavioral patterns) and long enough to hit statistical significance. For most mobile apps, that means 1 to 3 weeks per test. Shorter than a week risks weekly-cycle bias. Longer than 4 weeks risks confounding from seasonal changes or app updates.

What's the biggest mistake teams make with A/B testing?

Running experiments without a behavioral hypothesis. I watch teams ship A/B tests where the prediction is "we think this will be better" with no observed reason to think so. Most of those experiments fail to produce significant results, wasting traffic and time. Start with session replay and funnels to find the specific friction worth testing, then run the experiment.

AUTHOR

Silvanus Alt, PhD

Founder & CEO | UXCam

Silvanus Alt, PhD, is the Co-Founder & CEO of UXCam and a expert in AI-powered product intelligence. Trained at the Max Planck Institute for the Physics of Complex Systems, he built Tara, the AI Product Analyst that not only analyzes user behavior but recommends clear next steps for better products.

TABLE OF CONTENTS

- How we evaluated these tools (methodology)

- Key takeaways

- The 10 best A/B testing tools for mobile apps

- Comparison table

- 1. Statsig

- 2. Firebase A/B Testing

- 3. Optimizely

- 4. LaunchDarkly

- 5. Split

- 6. Apptimize

- 7. AB Tasty

- 8. Amplitude Experiment

- 9. VWO

- 10. UXCam (behavioral analytics layer)

- Tools I evaluated and excluded (and why)

- What is A/B testing for mobile apps?

- How to form a good A/B testing hypothesis

- Community discussions worth reading

- Improve your experimentation with UXCam

Related articles

Curated List

The 15 Best Web Analytics Tools in 2026

Discover the best web analytics tools. Want to enhance your site's user experience? Learn what's a must-have for insightful web...

Jonas Kurzweg

Product Analytics Expert

Curated List

Customer Behavior Analysis: The Framework, Methods, and What's Actionable in 2026

Customer behavior analysis is the systematic study of what customers actually do in your...

Silvanus Alt, PhD

Founder & CEO | UXCam

Curated List

10 Best A/B Testing Tools for Mobile Apps in 2026

The 10 best A/B testing tools for mobile apps in 2026, evaluated on mobile SDK quality, statistical rigor, pricing, and ecosystem...

Silvanus Alt, PhD

Founder & CEO | UXCam