App Conversion Rate Optimization: A Practitioner's Guide to CRO for Mobile

TABLE OF CONTENTS

- Key takeaways

- What is app conversion rate optimization?

- Micro vs. macro conversions: instrument both

- Benefits of doing this well

- The 7-step CRO mobile optimization framework

- 14 mobile CRO patterns and pitfalls I see in every audit

- Industry-specific CRO considerations

- Mobile CRO tools by category

- 10 common mobile CRO mistakes

- A CRO maturity model: where are you and what to do next

- Getting started: your first 30 days

- What I see working in 2026

- Related reading

App conversion rate optimization is the discipline of turning more of your existing traffic and installs into users who complete the actions your business depends on: signups, subscriptions, purchases, reactivations. I've reviewed hundreds of mobile funnels over the last few years, and the pattern I see most often is the same. Teams copy web CRO playbooks onto a phone screen, wonder why their lift tests go flat, and blame "low intent" users. The real issue is almost always that mobile CRO needs its own model, its own instrumentation, and its own evidence loop.

This guide lays out how I approach CRO when I audit a product, across mobile apps and the web: how to define the conversion, how to find the leaks, and how to close them with changes that compound.

Key takeaways

App conversion rate optimization is different from generic web CRO. Thumb zones, fragmented devices, app store friction, and opaque session paths mean you can't lift-and-shift desktop tactics.

Macro and micro conversions both matter. Track the signup or purchase, but instrument the 6-10 micro-events that predict it so you know where the funnel is actually breaking.

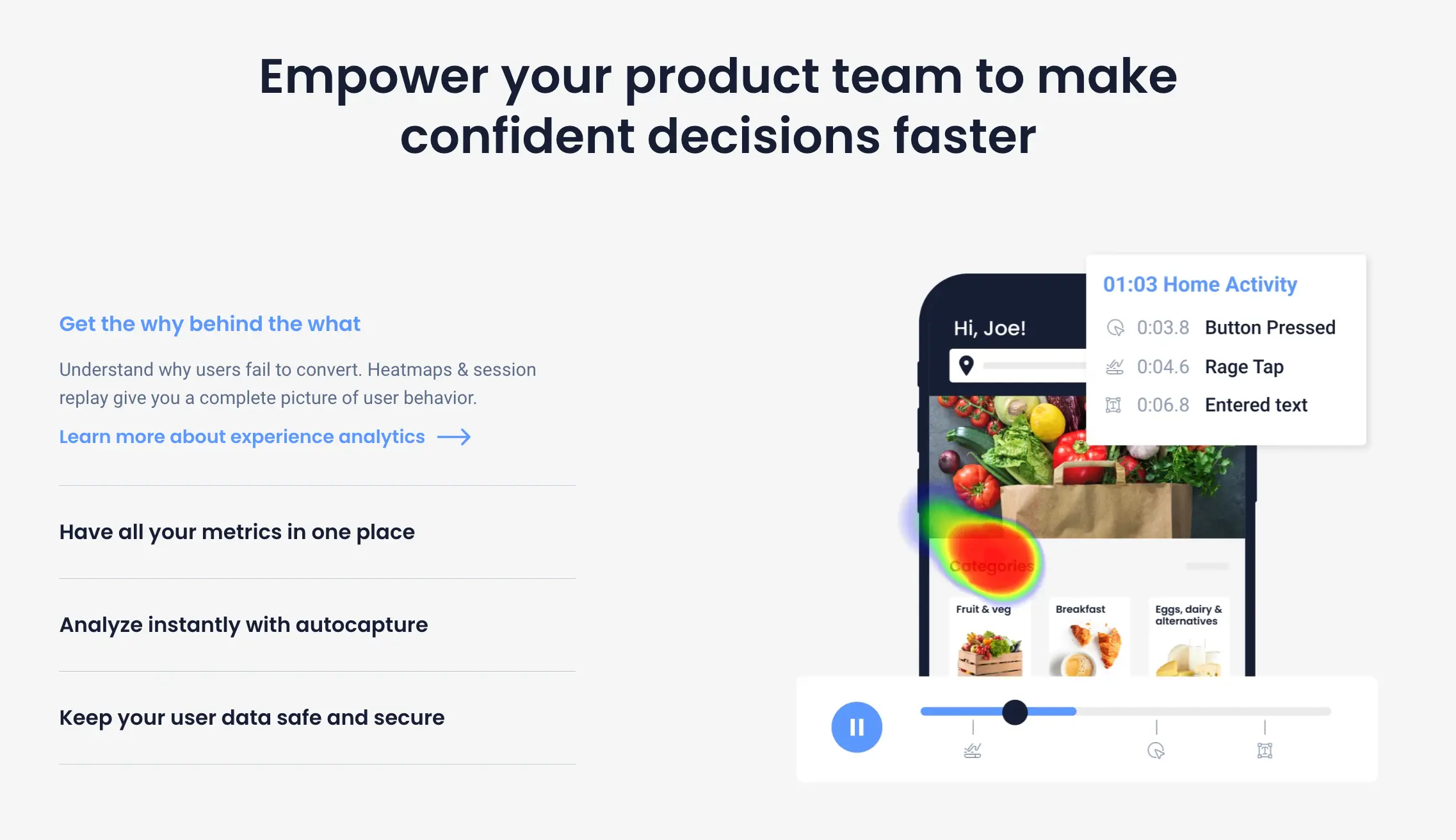

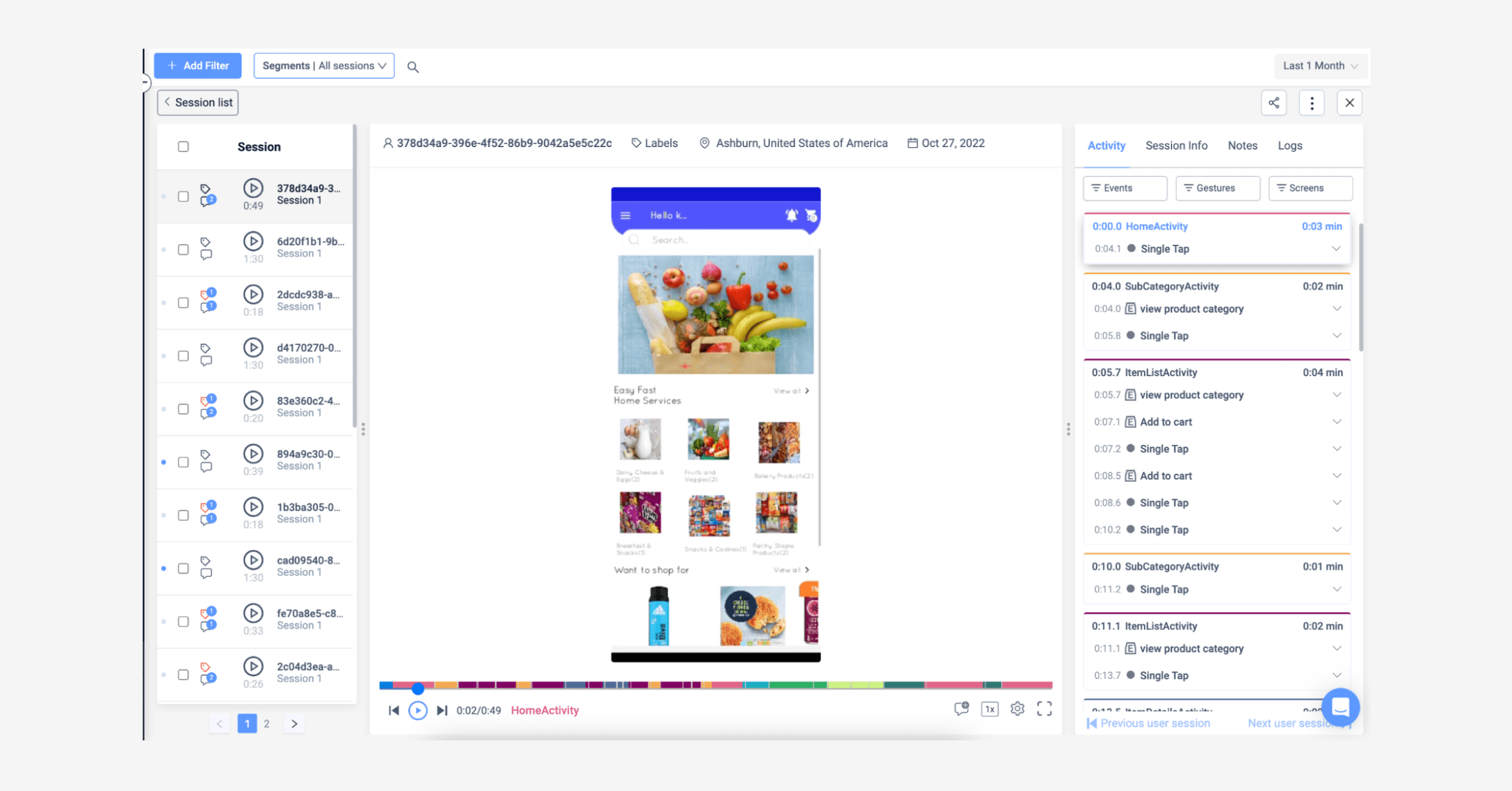

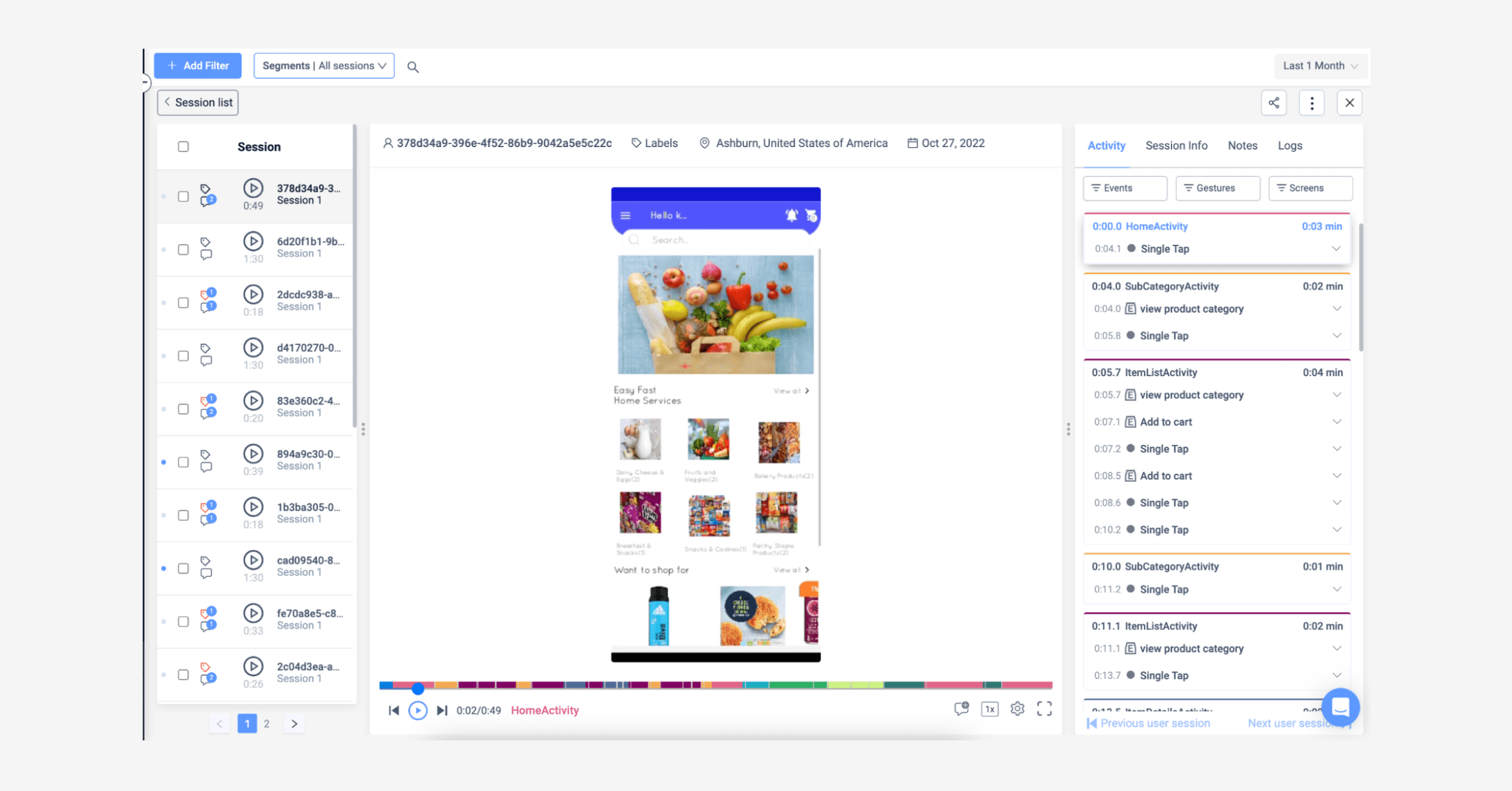

Session replay is the fastest path to a CRO hypothesis. Watching 20 drop-off sessions usually beats a week of dashboard staring.

Speed, form length, and payment friction remain the three highest-yield fixes. A one-second load improvement alone is worth about 27% in conversion rate on mobile.

UXCam's Tara AI, session replay, heatmaps, and issue analytics give you the qualitative layer most teams are missing. That layer is how Recora cut support tickets by a reported 142% and Inspire Fitness lifted time-in-app by 460%.

What is app conversion rate optimization?

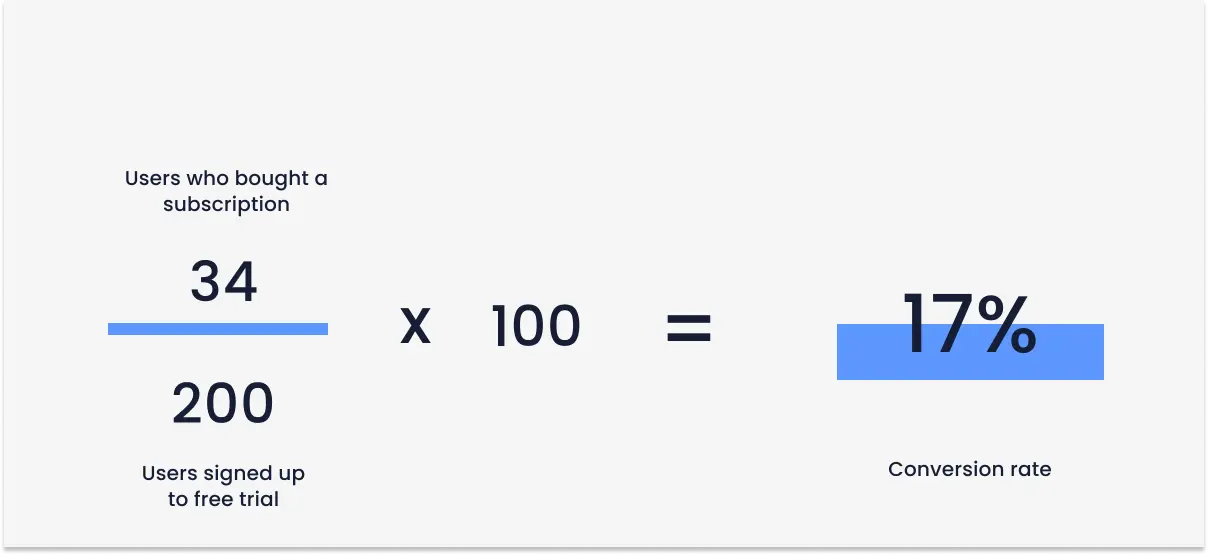

App conversion rate optimization (mobile CRO) is the ongoing process of increasing the share of users who complete a defined, revenue-linked action inside your mobile app or web product. That action might be activation after signup, an in-app purchase, a subscription upgrade, a booking, or reaching a specific engagement threshold that correlates with retention.

The math is simple. The work is not.
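As a minimal sketch of that math, assuming you already have a count of users who were eligible for the action and a count of those who completed it (the function name and the numbers are illustrative, not from any particular tool):

```python
def conversion_rate(converted_users: int, eligible_users: int) -> float:
    """Share of eligible users who completed the defined action."""
    if eligible_users <= 0:
        raise ValueError("eligible_users must be positive")
    if not 0 <= converted_users <= eligible_users:
        raise ValueError("converted_users must be between 0 and eligible_users")
    return converted_users / eligible_users


# Hypothetical numbers: 1,200 users reached the paywall, 180 subscribed.
rate = conversion_rate(converted_users=180, eligible_users=1200)
print(f"Conversion rate: {rate:.1%}")
```

The hard part is agreeing on what counts as "eligible" and "converted", which is why the definition has to be pinned down before anything is measured.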

What makes mobile CRO harder than desktop CRO:

No URL-level visibility. You can't just plug in a heatmap script and read scroll depth. You need an SDK that captures screens, gestures, and events automatically.

Device fragmentation. A checkout button that works on a Pixel 8 can be clipped on a budget Android. Without replay, you won't know.

App store friction sits outside your app. Install-to-open rates, permission prompts, and store reviews all affect conversion but live outside your product analytics stack.

Session context is shorter and more interrupted. Users convert in 30-second windows between other apps, calls, and notifications.

This is why the CRO stack I recommend is built around a product intelligence platform, not a repurposed marketing tool. UXCam is installed in more than 37,000 products and was built for mobile apps and the web, which is what makes the rest of this framework possible.

Micro vs. macro conversions: instrument both

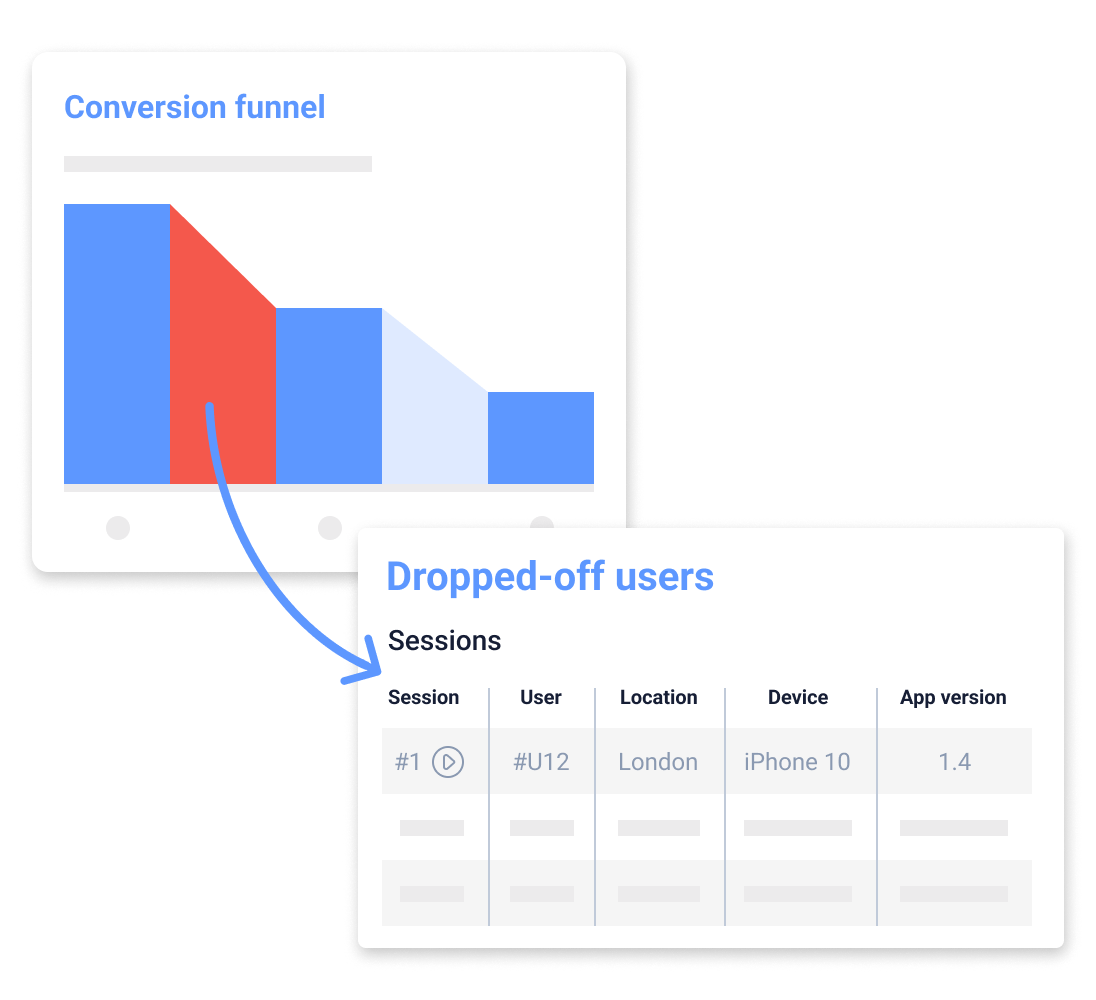

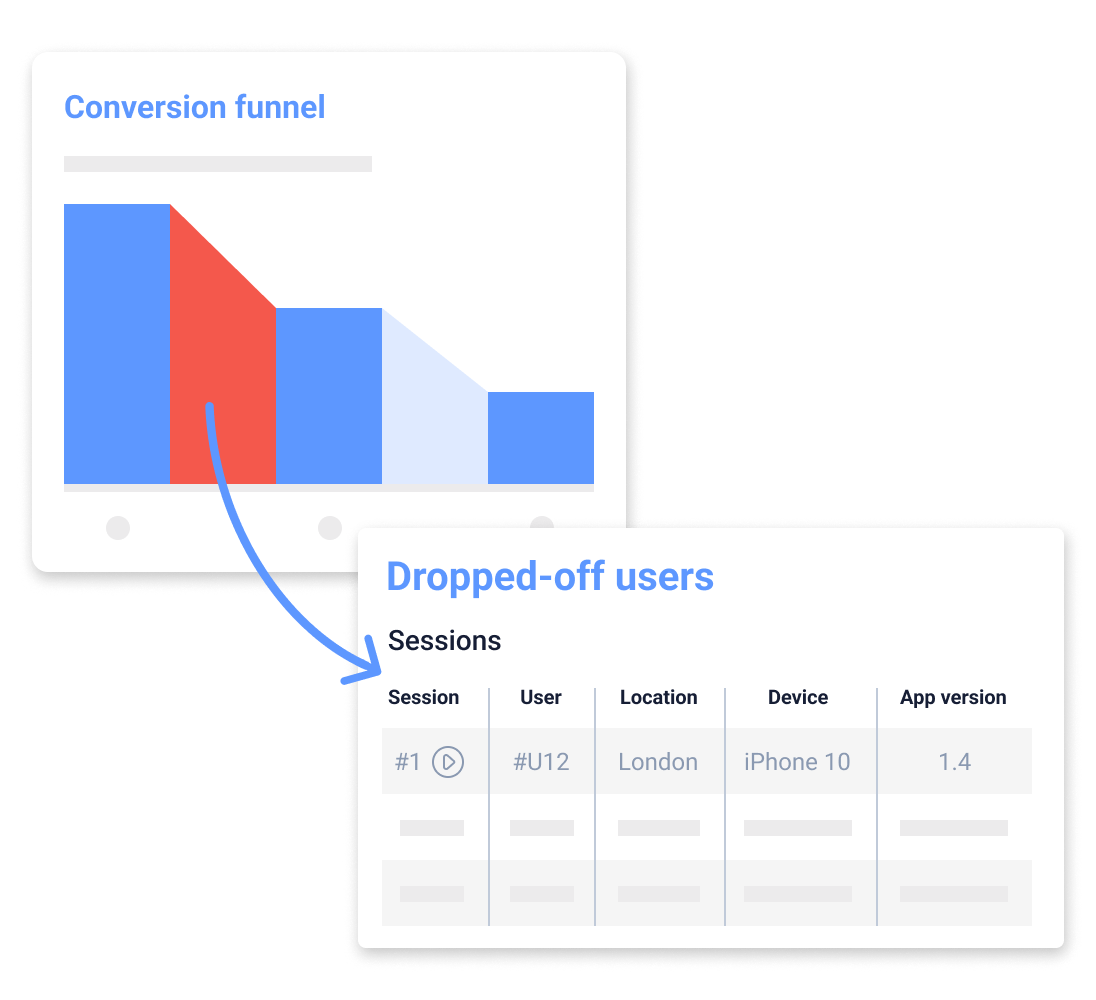

Most teams I audit track one macro conversion and call it done. Then when the number dips, they have no idea why. Micro conversions give you the diagnostic layer.

Macro conversions

Macro conversions are the primary milestones tied to revenue or the core product promise. Think:

App install → first open

Signup complete

First purchase or subscription

Upgrade to paid plan

Booking or order confirmed

Micro conversions

Micro conversions are the steps and signals on the way to the macro. Two flavors worth separating:

Process milestones are the explicit steps in the funnel: email verified, profile completed, payment method added, first item added to cart. These tell you where the funnel breaks.

Secondary actions are engagement signals that predict future macro conversions: content shared, notification enabled, favorite added, onboarding tooltip completed. These tell you who is likely to convert later.

The rule I give teams: for every macro conversion, define at least six micro events across the journey. That is the instrumentation you need before you run a single A/B test.
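One way to picture that instrumentation is as an ordered funnel of micro events ending in the macro conversion. The sketch below assumes hypothetical event names and user counts. It computes the step-to-step conversion and points at the weakest step.

```python
from typing import List, Tuple


def step_conversion(funnel: List[Tuple[str, int]]) -> List[Tuple[str, float]]:
    """Return (step, share of the previous step's users who reached it)."""
    results = []
    for (_, prev_count), (name, count) in zip(funnel, funnel[1:]):
        rate = count / prev_count if prev_count else 0.0
        results.append((name, rate))
    return results


# Hypothetical counts: six micro events leading to one macro conversion.
funnel = [
    ("first_open", 1000),
    ("signup_started", 700),
    ("email_verified", 560),
    ("profile_completed", 420),
    ("payment_method_added", 210),
    ("first_item_added_to_cart", 168),
    ("first_purchase", 126),  # the macro conversion
]

rates = step_conversion(funnel)
worst_step, worst_rate = min(rates, key=lambda r: r[1])
for name, rate in rates:
    print(f"{name}: {rate:.0%} of previous step")
print(f"Biggest leak: {worst_step} ({worst_rate:.0%})")
```

With only the macro event tracked, all you would see is 126 purchases out of 1,000 opens. The micro events show which single step loses the most users.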

Benefits of doing this well

You actually understand your users

Not everyone in your app has the same intent. Some arrived from a TikTok ad expecting a 10-second win. Some are returning power users. A proper CRO practice segments these cohorts and lets you see where each one gets stuck. User behavior analysis is the foundation here, not a nice-to-have.

You extend customer lifetime value

Acquisition gets more expensive every quarter. Increasing the value of the users you already paid for is the cheapest growth lever available. Housing.com used this approach with UXCam to grow feature adoption from 20% to 40%, a direct LTV lift without a single extra dollar of ad spend.

You reduce support cost

When you find and fix the friction that causes rage taps and UI freezes, tickets drop. Recora used UXCam's issue analytics to spot that users were press-and-holding a button that should have been tapped. Fixing the affordance cut support tickets by 142%.

The 7-step CRO mobile optimization framework

Here is the sequence I run with teams. The order matters. Skipping steps 1-2 is why most CRO programs stall.

Step 1. Install an analytics stack

Without honest instrumentation, the rest is guesswork. At minimum you need:

Autocaptured screens and gestures so you don't have to tag every tap manually.

Session replay so you can watch drop-offs, not just count them.

Heatmaps tied to screens, with tap, scroll, and dead-zone views.

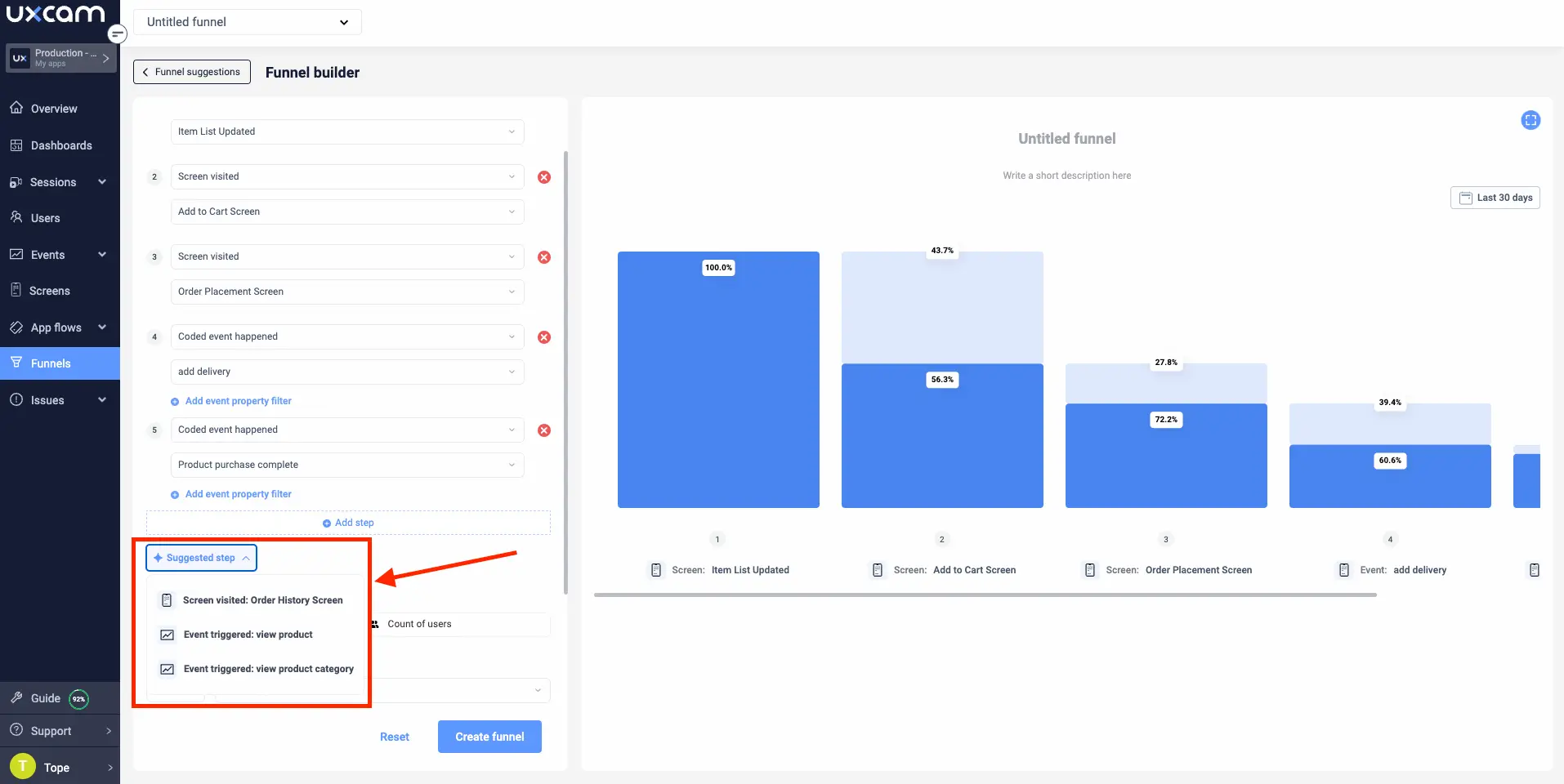

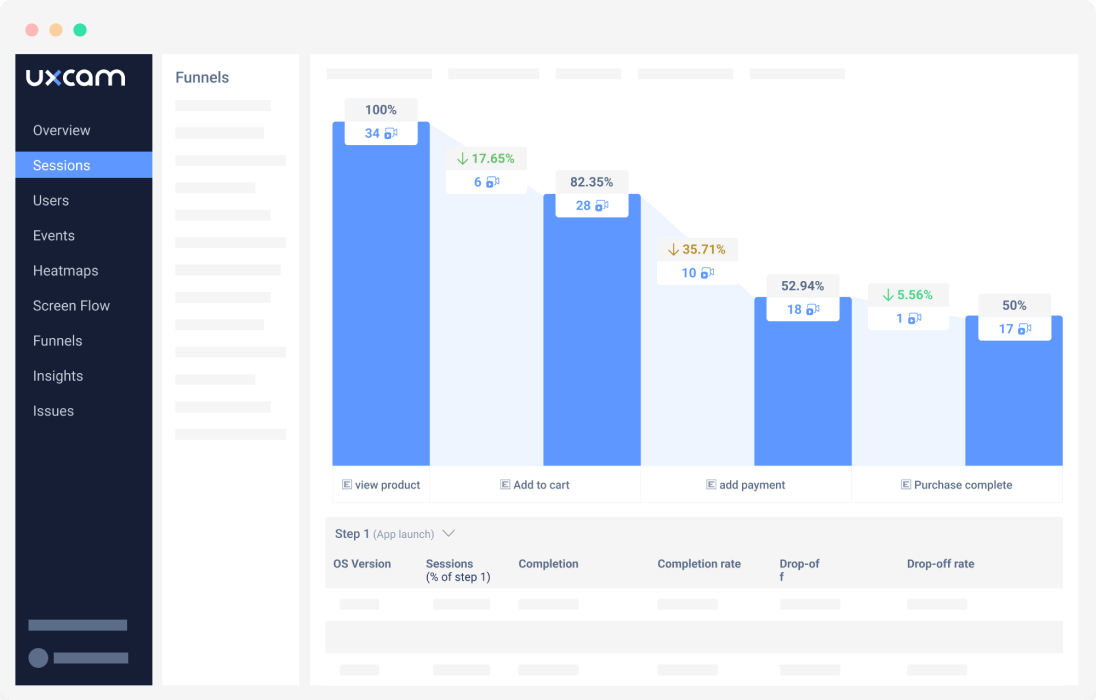

Funnels you can reconfigure without redeploying the app.

Issue analytics that surface rage taps, UI freezes, and crashes in the context of the user session.

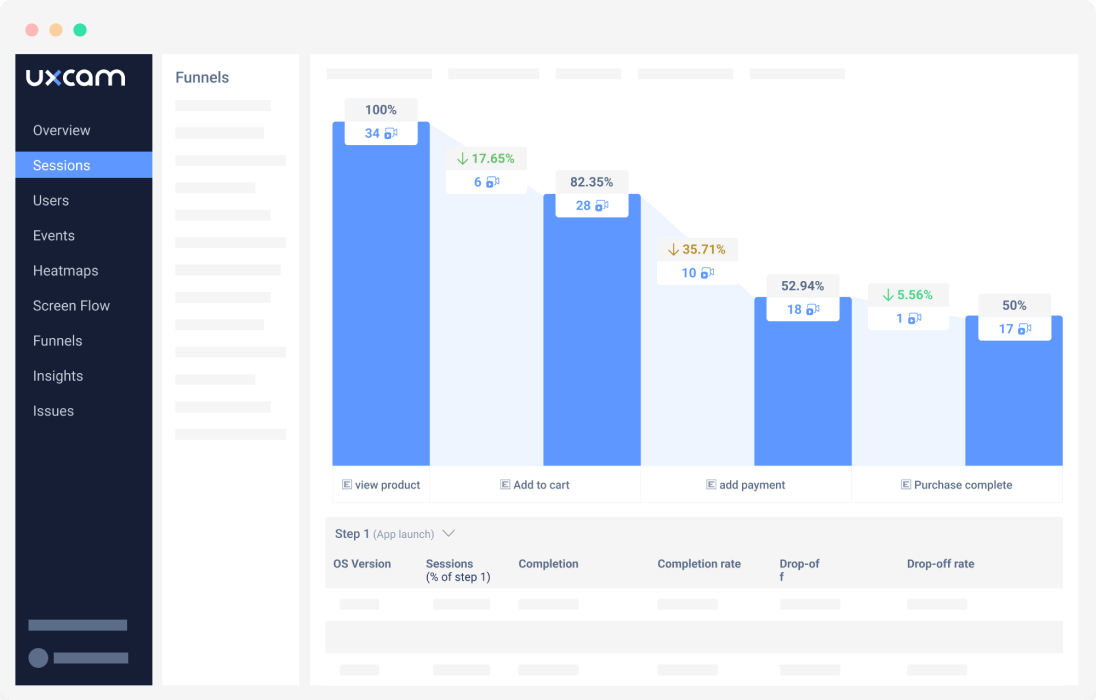

UXCam's session replay and heatmaps ship as part of a single lightweight SDK, and Tara, the AI analyst, reads the captured sessions and recommends where to focus. For most teams I work with, that combination replaces three or four separate tools.

The UXCam CRO workflow I actually use

Define the conversion. Pin one macro event and 6-10 micro events around it.

Set up events and screens. Use Smart Events so you can add events without an engineering ticket, plus autocaptured screens and gestures for everything you forgot to plan for.

Map the user paths. Screen Flows show the real routes. Funnel Suggestions surface probable paths you hadn't considered.

Validate the funnel. Watch 6-7 replays at each stage to confirm events fire correctly. This step catches more instrumentation bugs than any QA process.

Analyze drop-offs. Use funnel analytics to see where the bleed happens.

Segment the drop-offs. New vs. returning, device class, country, traffic source. The aggregate number hides the real story every time.

Investigate with replays. Filter sessions by drop-off stage and watch. Ten minutes of watching beats an hour of dashboarding.

Confirm with heatmaps and issue analytics. Rage taps, dead taps, and UI freezes on the broken screen will tell you exactly what to fix.

Monitor the impact. Set up dashboards for the funnel and watch it recover. Share with stakeholders weekly.
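To illustrate the segmentation step in the workflow above, here is a small sketch showing how an aggregate completion rate can hide a broken cohort. The session records, field names, and counts are made up for the example. In practice they would come from your analytics export.

```python
from collections import defaultdict
from typing import Dict, List


def completion_by_segment(sessions: List[dict], key: str) -> Dict[str, float]:
    """Checkout completion rate for each value of the given segment key."""
    totals = defaultdict(int)
    completed = defaultdict(int)
    for session in sessions:
        segment = session[key]
        totals[segment] += 1
        if session["completed_checkout"]:
            completed[segment] += 1
    return {segment: completed[segment] / totals[segment] for segment in totals}


# Hypothetical sessions: 100 on flagship devices, 100 on budget Android.
sessions = (
    [{"device_class": "flagship", "completed_checkout": True}] * 45
    + [{"device_class": "flagship", "completed_checkout": False}] * 55
    + [{"device_class": "budget_android", "completed_checkout": True}] * 15
    + [{"device_class": "budget_android", "completed_checkout": False}] * 85
)

overall = sum(s["completed_checkout"] for s in sessions) / len(sessions)
by_device = completion_by_segment(sessions, "device_class")

print(f"Overall: {overall:.0%}")
for segment, rate in by_device.items():
    print(f"{segment}: {rate:.0%}")
```

The same function works for any other key on the session record, such as country or traffic source, so the replays you watch next can be filtered to the segment that is actually underperforming.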

Step 2. Do mobile-specific research

Too many teams run the same user interviews they ran for their web funnel and call it mobile research. A real mobile CRO research pass includes:

Device-class segmentation (flagship vs. budget Android is a real gap)

Network-condition testing (3G, flaky wifi)

Orientation and reachability checks (can the CTA be tapped one-handed?)

In-context diary studies where users report on sessions that happened while waiting in line, on the bus, and so on.

Survey results should be segmented by device type so you can tell whether "the app feels slow" is universal or isolated to a hardware tier.

Step 3. Double down on your highest-converting flows

There is usually one path through your app that converts three or four times better than the average. Find it with user journey analytics and protect it ruthlessly.

Practical moves:

One primary CTA per screen. Mobile real estate punishes indecision. Heatmap data usually shows the secondary CTA eats taps from the primary.

Place CTAs in the thumb zone on the bottom third of the screen, not the top nav.

A/B test the copy. "Start free trial" vs. "Try it free" vs. "Get started" will often swing conversion 10-20% without any design work.

Use onboarding to deliver immediate value. Let users interact with a real feature before hitting the paywall. Inspire Fitness used UXCam's session insights to remove friction from its onboarding flow and boosted time-in-app by 460% while cutting rage taps by 56%.

Step 4. Fix performance before anything else

Users notice delays as short as 100 milliseconds. A one-second improvement in load time lifts conversion by roughly 27% on mobile. Google's own Core Web Vitals research shows that sites meeting LCP, INP, and CLS thresholds see materially lower abandonment. Performance isn't an engineering concern separate from CRO. It is the single highest-leverage CRO lever for most apps I audit.

What to check first:

Image optimization (AVIF or WebP, proper sizing per device)

Cold start time

API response time on the critical path screens

Animation jank and dropped frames on budget devices

UI freezes, which UXCam's issue analytics surfaces automatically

Step 5. Simplify checkout and forms

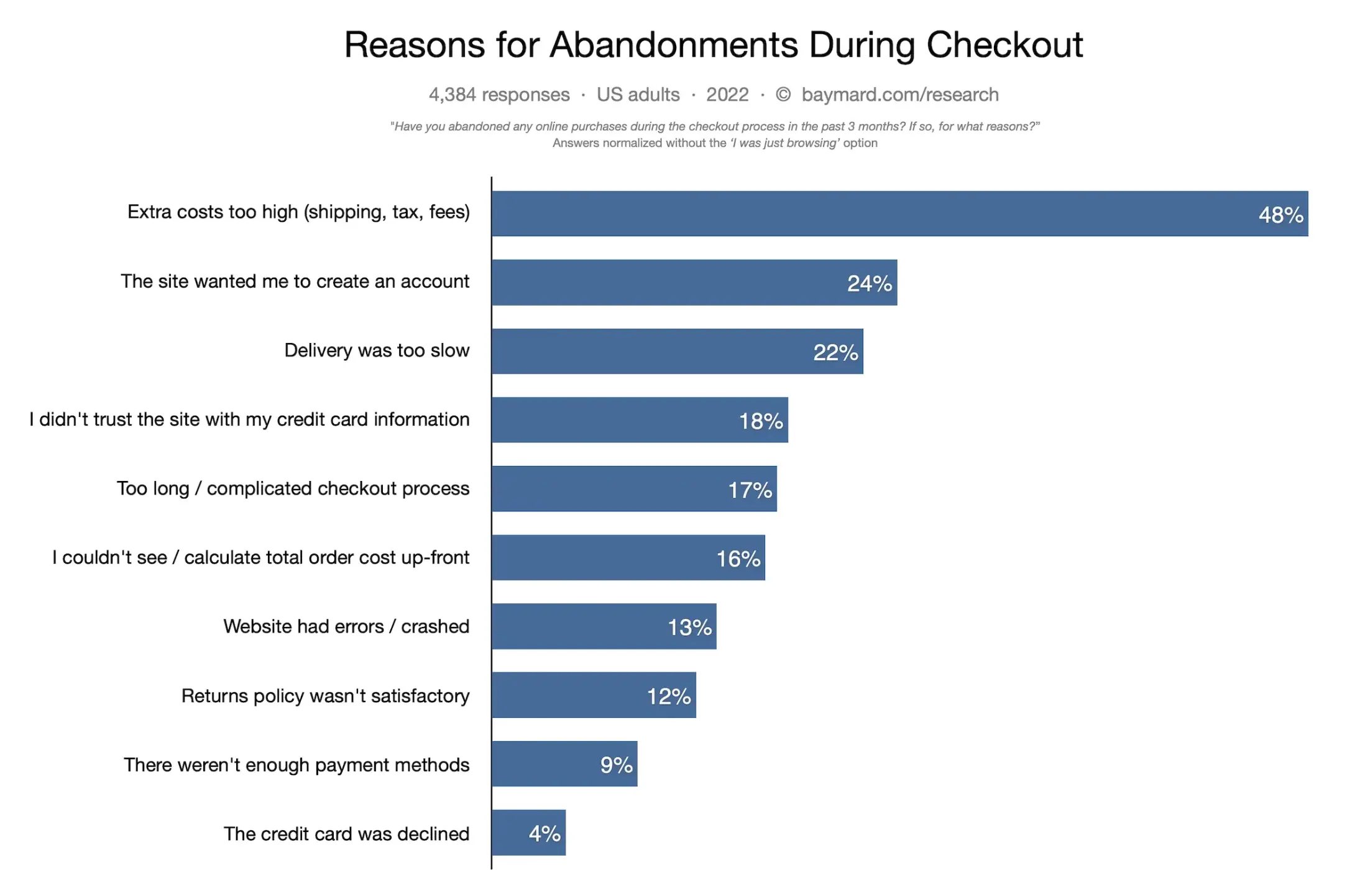

17% of shoppers abandon carts specifically because the checkout is too long or complicated. On mobile that number is usually worse, with the Baymard Institute reporting average documented mobile cart abandonment above 85% across sectors.

The short list of checkout fixes that consistently lift conversion:

Remove every field you don't strictly need. If you don't need a last name, don't ask.

Collapse the checkout into as few steps as the payment processor allows.

Show a progress bar. Users tolerate length when they can see the end.

Offer Apple Pay, Google Pay, and at least one local wallet per region.

Allow guest checkout. Forcing signup before purchase is the single most common conversion killer I see.

Costa Coffee used UXCam to identify registration friction and redesigned the signup step, raising registrations by 15%.

Step 6. Earn trust visibly

Users convert when they feel safe. On mobile, trust signals need to be compressed and unmistakable:

Clear SSL and payment security badges near the pay button

Real reviews and testimonials, ideally with names and photos

A visible privacy summary on permission prompts

Transparent pricing, including what is free and what is charged after a trial

Step 7. Benchmark, then benchmark again

You can't optimize what you don't measure consistently. Set a weekly rhythm:

Compare this week's macro conversion rate to last week, last month, and the rolling 90-day baseline.

Track the top three micro conversion rates that feed the macro.

Compare to public industry benchmarks where available. Adjust and AppsFlyer both publish sector-specific mobile benchmarks quarterly, and data.ai's State of Mobile is the cross-sector reference I check once a quarter.
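A weekly check like this is easy to script. The sketch below assumes a list of daily (converted, eligible) counts ordered oldest to newest, and it interprets "last month" as the same week four weeks earlier. Both are assumptions for illustration, as are the numbers.

```python
from typing import Dict, List, Tuple

Daily = Tuple[int, int]  # (converted_users, eligible_users) for one day


def pooled_rate(days: List[Daily]) -> float:
    """Conversion rate over a span of days, weighted by daily volume."""
    converted = sum(c for c, _ in days)
    eligible = sum(e for _, e in days)
    return converted / eligible if eligible else 0.0


def weekly_benchmark(history: List[Daily]) -> Dict[str, float]:
    """Compare the latest 7 days with earlier windows (oldest-to-newest input)."""
    if len(history) < 90:
        raise ValueError("need at least 90 days of history")
    this_week = pooled_rate(history[-7:])
    return {
        "this_week": this_week,
        "vs_last_week": this_week - pooled_rate(history[-14:-7]),
        "vs_four_weeks_ago": this_week - pooled_rate(history[-35:-28]),
        "vs_90d_baseline": this_week - pooled_rate(history[-90:]),
    }


# Hypothetical history: 83 days at 10% conversion, then a week at 12%.
history = [(100, 1000)] * 83 + [(120, 1000)] * 7
report = weekly_benchmark(history)
for name, value in report.items():
    print(f"{name}: {value:+.2%}")
```

The differences are in percentage points, not relative lift. Pooling counts rather than averaging daily rates keeps low-traffic days from distorting the baseline.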

14 mobile CRO patterns and pitfalls I see in every audit

These are the specific findings that come up repeatedly when I walk through a product. Each one is worth a dedicated review pass.

1. The permission prompt timed wrong

Apps that ask for push, location, or tracking permission on first launch see denial rates north of 60%. Delay the prompt until the user has seen the value, ideally tied to a feature that needs the permission, following Apple's Human Interface Guidelines. For ATT specifically, Adjust's ATT benchmark data shows opt-in rates double or triple when the pre-prompt explains the value before the system dialog fires.

2. The secondary CTA stealing taps from the primary

Heatmaps consistently show that when a "Skip" or "Learn more" link sits near the primary CTA, it siphons 15-30% of intended taps. Move it to a different zone or shrink its visual weight. The fastest fix I've watched ship was demoting the "Maybe later" link to a small gray link tucked below the fold, which lifted trial starts by 11% in a week.

3. Dead taps on non-interactive elements

Users tap images, icons, and card backgrounds expecting them to be links. UXCam's dead tap detection shows exactly where this happens. Either make the element tappable or redesign it so it doesn't look interactive. Nielsen Norman Group's tap target research is the reference worth keeping open during design reviews.

4. Keyboard covering the input field

On Android especially, a form field that gets obscured by the keyboard kills completion rate. Test with all major keyboard heights including Gboard with autocomplete and SwiftKey. The Android keyboard adjustment docs cover the fix.

5. Forcing account creation before value

Letting users experience the product before signup routinely lifts activation 20-40%. Duolingo's famous placement of signup at lesson three, not lesson zero, is the canonical example. Reforge's growth model work describes why delayed signup compounds across the acquisition loop, not just the first session.

6. Long-form signup with no social login

Adding Apple, Google, and one regional social login option shortens signup by roughly 40 seconds on average. That matters more than any field reordering. Apple's App Store Review Guidelines require apps that offer third-party or social login to also offer an equivalent privacy-focused login option, and Sign in with Apple satisfies that requirement, so there is little reason not to add it.

7. Error states that don't recover the user

A failed payment that dumps the user back to an empty cart is a conversion killer. Preserve the cart, show the specific reason for failure, and offer an alternative payment method inline. Stripe's error handling guide is a solid baseline for the specific codes worth surfacing versus hiding.

8. Onboarding that teaches instead of activating

Tooltips that explain every icon are a tell that the UI isn't self-evident. Replace them with a single activation task that delivers real value in under 60 seconds. NN/g's onboarding research is the best reference here.

9. No progress indication in multi-step flows

Users who can't see the end of a flow abandon it. A simple "Step 2 of 4" indicator lifts completion meaningfully. The effect is strongest on flows longer than three steps where users can't mentally estimate how much work remains.

10. Paywall hit before aha moment

If your paywall appears before the user has felt the core value, you are optimizing for bounce, not revenue. Move the paywall to trigger after the user completes the one action that predicts day-7 retention. RevenueCat's subscription benchmarks show that apps with behavior-triggered paywalls convert 40-60% better than time-triggered ones.

11. Generic push notifications

Batch-and-blast push notifications train users to disable them. Triggered, behavior-based pushes convert 3-5x better according to Airship's benchmark data. Start with three behavioral triggers (abandoned action, milestone reached, personalized recommendation) before building out the full messaging matrix.

12. In-app review prompt at the wrong moment

Prompting for a review after a rage-tap sequence gets you one-star reviews. Tie the prompt to a confirmed success moment: order delivered, streak milestone, successful workout. Apple's StoreKit review API caps prompts at three per year, so each one has to count.

13. Small tap targets

Apple recommends 44x44 points minimum. Google recommends 48dp. Heatmap data from apps I audit routinely shows conversion lift when targets are enlarged to 52-56dp, especially for users over 45. The WCAG 2.5.5 target size guideline gives you the accessibility justification if you need to push back against a designer who wants tighter spacing.
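When a design spec or screenshot gives sizes in pixels, a quick conversion tells you whether a target clears the 48dp guideline. This sketch uses the standard Android relationship px = dp × (dpi / 160). The function names and the example button sizes are hypothetical.

```python
def px_to_dp(px: float, density_dpi: int) -> float:
    """Convert physical pixels to density-independent pixels (160 dpi baseline)."""
    return px * 160 / density_dpi


def meets_min_target(width_px: float, height_px: float,
                     density_dpi: int, min_dp: float = 48.0) -> bool:
    """True if both dimensions of the tap target are at least min_dp."""
    smallest_side_dp = min(px_to_dp(width_px, density_dpi),
                           px_to_dp(height_px, density_dpi))
    return smallest_side_dp >= min_dp


# Hypothetical buttons measured on a 480 dpi (xxhdpi) screen.
print(meets_min_target(120, 120, density_dpi=480))  # 120 px is 40dp here
print(meets_min_target(144, 144, density_dpi=480))  # 144 px is 48dp here
```

Passing `min_dp=52` or `min_dp=56` lets you check against the larger targets mentioned above.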

14. No offline state handling

Users on subways, planes, and flaky wifi bail when a screen shows a spinner forever. Cache last-good state and surface a clear "You're offline, here's what you can still do" pattern. The offline-first pattern library has concrete examples for the most common screens.

Industry-specific CRO considerations

The 7-step framework is universal. The tactics that matter most shift by vertical.

Fintech and banking

Trust and compliance are the conversion levers, not speed alone. KYC drop-off is usually the biggest leak, and the fix is almost always breaking the identity verification into progressive disclosure: ask for what you need to unlock the next value step, not everything up front. Plaid's research on account linking consistently shows completion rates below 50% for first-time flows, so instrument every handoff to the identity provider and watch the replays.

Ecommerce and retail

Cart abandonment is the dominant metric. Apple Pay and Google Pay typically lift mobile conversion 20-30% on first purchase. Product page performance on 3G and budget Android is non-negotiable. Build CRO around the four highest-leverage pages: category, product detail, cart, and checkout, in that order.

On-demand and marketplace

Time to first successful transaction is the north-star metric. Location permission, payment setup, and first-order promo redemption are the three micro-conversions that predict retention. Session replay on the map and search screens usually surfaces the biggest fixes because these are the screens with the most dynamic content and the most ways to fail silently.

Health, fitness, and wellness

Aha moment usually sits 3-7 sessions in, not the first session, so CRO targets need to be retention-weighted. Inspire Fitness's 460% time-in-app lift came from rebuilding onboarding around a real workout, not a tutorial. Paywall timing is the single most tested variable in this vertical.

SaaS and B2B

Activation, not signup, is the macro conversion that matters. A completed first project, invited teammate, or integration connected predicts LTV far better than email verification. Instrument activation as a multi-event funnel and segment by company size and role.

Media and streaming

Play rate and second-session return are the paired conversion metrics. Autoplay settings, network-aware video quality, and personalized home screens are the top three variables. Watch for rage taps on seek bars and buffer spinners, which correlate tightly with session abandonment.

Mobile CRO tools by category

No single tool covers everything. Here is the stack I see working in 2026.

Product intelligence and analytics: UXCam for session replay, heatmaps, funnels, issue analytics, and Tara AI. Mixpanel and Amplitude for event-based funnel and cohort analysis.

A/B testing and feature flags: Statsig, Optimizely, LaunchDarkly, and Firebase Remote Config for experimentation and staged rollouts.

Attribution and MMP: Adjust, AppsFlyer, and Branch for paid acquisition measurement and deep linking.

Crash and performance monitoring: Firebase Crashlytics, Sentry, and Instabug for crash and ANR detection, paired with UXCam's issue analytics for UX-layer friction.

Customer messaging and engagement: Braze, Iterable, and OneSignal for lifecycle and push. Intercom for in-app support.

Survey and qualitative: UserTesting, Maze, and Survicate for moderated and unmoderated research.

Subscription and revenue: RevenueCat and Adapty for subscription management, paywall testing, and revenue analytics on iOS and Android.

10 common mobile CRO mistakes

Optimizing for signup instead of activation. You end up with vanity numbers and a leaky retention curve.

Running A/B tests without enough traffic for significance. Low-traffic apps should lean on qualitative evidence and ship based on directional signal, not wait months for p<0.05.

Trusting aggregate funnels. The average hides the cohort that is breaking. Always segment by device, OS, country, and source.

Ignoring Android budget-tier devices. If 40% of your users are on devices with 2-4GB RAM, that is where your next 10% of conversion lift lives.

Testing visual polish before fixing broken flows. Rounded corners never saved a funnel. Fix the dead taps first.

Treating rage taps as an engineering problem. They are the single clearest signal of lost conversion. Put them on the growth backlog.

Copying competitors without instrumentation. Their paywall placement worked for their retention curve, not yours.

Using web analytics tools on mobile. URL-based tracking misses screens, gestures, and in-app states where most friction lives.

Shipping the test without a rollback plan. Staged rollouts with feature flags prevent a bad variant from torching your weekly numbers.

Not closing the loop. The experiment shipped, the metric moved, and nobody watched replays to confirm the user experience actually improved. You need both numbers and evidence.

A CRO maturity model: where are you and what to do next

Use this as a diagnostic. Most teams I audit sit at level 2 and think they're at level 4.

Level 1: Blind

You track installs and one macro event. No segmentation, no replay, no funnels. Next step: install an autocapture SDK like UXCam, define your macro conversion, and instrument six micro events around it. Expect two weeks of setup.

Level 2: Counting

You have funnels and know where drop-off happens. You can't explain why. Next step: add session replay and heatmaps. Commit to watching 20 drop-off sessions per week and writing up the top three friction patterns.

Level 3: Diagnosing

You watch replays, segment drop-offs, and have a backlog of friction hypotheses. Experimentation is ad hoc. Next step: install a feature flag and A/B tool, define test guardrails (sample size, duration, guardrail metrics), and run one test at a time with documented hypotheses.

Level 4: Experimenting

You ship three or more tests per month, measure primary and guardrail metrics, and have a retention-weighted definition of win. Next step: tie CRO targets to LTV and day-30 retention, not signup. Bring issue analytics into the same backlog as growth experiments.

Level 5: Compounding

CRO is a weekly operating rhythm. Tara AI flags the patterns, replays validate the hypotheses, experiments ship, and retention curves lift quarter over quarter. Next step: cross-train product, engineering, and marketing on the same dashboards, and start publishing learnings externally. Teams at this level tend to recruit better because they talk about evidence in public.

Getting started: your first 30 days

If you're starting from Level 1 or 2, here is the cadence that gets you to a working CRO practice inside a month.

Week 1: Install UXCam, agree on one macro conversion, and ship a draft event schema covering six to ten micro events. Validate the events by watching five replays per stage to confirm they fire at the right moment. Write down the current baseline rate for the macro and each micro.

Week 2: Segment the funnel by device class, OS, country, and traffic source. Identify the single worst-performing segment. Watch 20 drop-off replays in that segment and cluster what you see into three or four friction patterns. Write each one up as a one-line hypothesis.

Week 3: Pick the highest-ICE hypothesis and ship the simplest possible fix behind a feature flag. For most teams this is a copy change, a CTA reposition, or removing a form field. Measure the conversion delta and a guardrail metric (usually day-7 retention or revenue per user).

Week 4: Review the result with stakeholders, playing one winning and one losing replay. Document the learning in a shared doc regardless of outcome. Pick the next hypothesis. The goal at the end of month one is not a single big win. It is a repeatable loop.

What I see working in 2026

Three patterns from recent audits worth calling out:

AI-assisted session triage. Watching 50 replays used to take half a day. Tara AI reads sessions, clusters the friction patterns, and surfaces the three or four that matter. That compresses the hypothesis loop from weeks to days.

Retention-weighted CRO. Teams that optimize only for signup end up with high churn. The ones winning are tying CRO targets to day-7 and day-30 retention, not just the first purchase.

Issue analytics as a CRO input. Rage taps and UI freezes are often the actual reason behind a funnel drop. Treating them as engineering bugs separate from the CRO backlog is a mistake. They're the same work.

Related reading

Frequently asked questions

What is a good conversion rate for a mobile app?

It depends heavily on vertical and conversion definition. For install-to-signup, a healthy range is 25-40%. For signup-to-paying-user, fintech and SaaS apps commonly see 2-5%, while freemium games run under 2%. Ecommerce apps usually convert visitors to purchasers at 1-3%. More useful than the industry number is your own trend: are you improving week over week for the same traffic sources? That is the only benchmark that matters for operational decisions.

How is app conversion rate optimization different from web CRO?

Three structural differences. First, mobile has no URLs, so you need an SDK that captures screens and gestures rather than page loads. Second, mobile device fragmentation means a change that lifts conversion on iPhone can tank it on a low-end Android, so segmented testing is mandatory. Third, mobile sessions are shorter and more interrupted, so thumb reachability, one-handed use, and offline tolerance become CRO variables that simply don't exist on desktop. The underlying discipline is the same, but the tooling and playbooks have to be rebuilt.

Which metrics should I track first for CRO?

Start with one clearly defined macro conversion and six to ten micro conversions that precede it. Then layer in retention cohorts at day 1, day 7, and day 30 so you don't over-optimize for a leaky bucket. On the qualitative side, track rage taps, UI freezes, and dead taps as proxies for friction. UXCam's issue analytics and funnels give you all of these in one place, which matters because switching between tools usually means switching between conflicting numbers.

How long does it take to see results from mobile CRO?

For instrumentation and first insights, about two weeks. For shipped changes to show statistically meaningful conversion lift, typically four to eight weeks depending on your traffic volume. Low-traffic apps should expect closer to eight weeks per test. High-traffic apps with tens of thousands of daily sessions can run multiple concurrent tests and ship in two-week cycles. The biggest time sink is almost always instrumentation, which is why autocapture SDKs pay back quickly.

Can I run A/B tests inside UXCam?

UXCam is a product intelligence and product analytics platform, so the primary role is diagnosing what to test and measuring what happened after. Most teams pair it with a dedicated experimentation tool for the randomization and statistical engine. The workflow that tends to work: use UXCam session replay, heatmaps, and Tara's AI recommendations to generate the hypothesis, run the experiment in your A/B tool, then come back to UXCam funnels and replays to validate that the winning variant actually improved the user journey, not just a metric.

Does mobile CRO still matter if most of my traffic is from paid ads?

It matters more, not less. Paid traffic raises the cost of every leaky step in your funnel. If you are paying $4 per install and losing 60% of users at signup, you are paying $10 for every activated user. A 10% relative improvement in signup completion (from 40% to 44%) cuts your effective CAC by about 9%, to roughly $9.09 per activated user. The teams I see getting the most leverage from paid spend are the ones treating CRO and acquisition as a single P&L, not separate departments.
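The arithmetic is worth running with your own numbers. A minimal sketch, using the $4 install cost and 40% signup completion from the example above (the function name is illustrative):

```python
def cost_per_activated_user(cost_per_install: float, completion_rate: float) -> float:
    """Effective acquisition cost once signup drop-off is accounted for."""
    if not 0 < completion_rate <= 1:
        raise ValueError("completion_rate must be in (0, 1]")
    return cost_per_install / completion_rate


before = cost_per_activated_user(4.00, 0.40)         # 60% lost at signup
after = cost_per_activated_user(4.00, 0.40 * 1.10)   # 10% relative improvement
reduction = (before - after) / before

print(f"Before: ${before:.2f} per activated user")
print(f"After:  ${after:.2f} per activated user")
print(f"Effective CAC reduction: {reduction:.1%}")
```

Because completion rate sits in the denominator, a relative lift of x in completion lowers cost by x / (1 + x), which is slightly less than x itself.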

How many micro conversions should I track per macro?

Six to ten is the sweet spot. Fewer than six and you miss the diagnostic detail when the macro dips. More than ten and the signal gets diluted across events that nobody actually looks at. The discipline is to pick the micro events that predict the macro, not the ones that are easy to instrument.

What sample size do I need for a mobile A/B test?

Depends on the baseline conversion rate and the minimum detectable effect you care about. For a 10% baseline and 10% relative lift at 80% power, plan on roughly 15,000 users per variant. Tools like Evan Miller's calculator will give you the exact number. If your traffic can't support that in four to six weeks, lean on qualitative evidence and ship based on directional signal rather than waiting months.
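For a rough check without a calculator, the standard two-proportion approximation can be scripted with the Python standard library. This sketch assumes a two-sided 5% significance level, which the figure above does not state. Dedicated calculators use slightly different formulas and will return slightly different numbers.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_lift: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed per variant to detect the given relative lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


n = sample_size_per_variant(baseline=0.10, relative_lift=0.10)
print(f"Users per variant: {n:,}")
```

For the 10% baseline and 10% relative lift, this lands a little under 15,000 per variant. Halving the detectable lift roughly quadruples the requirement, which is why small expected effects are so expensive to test.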

Should I optimize iOS and Android separately?

Usually yes. Conversion rates, device capabilities, payment defaults, and user expectations diverge enough that a unified optimization approach leaves lift on the table. Instrument both platforms identically but segment every funnel and replay analysis by OS. The teams that surprise me most are the ones that find their iOS and Android funnels drop off at completely different steps.

How do I prioritize which CRO experiment to run first?

I use an ICE score (impact, confidence, ease) on every hypothesis, but the tie-breaker is always evidence strength. A hypothesis backed by ten replays of users struggling beats a hypothesis backed by a blog post about another company's win. Confidence should come from your own data, not someone else's case study.
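A sketch of that prioritization, assuming each factor is scored 1-10 and the ICE score is their product (some teams average the three instead). The hypothesis names, scores, and the `supporting_replays` field are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Hypothesis:
    name: str
    impact: int              # 1-10
    confidence: int          # 1-10
    ease: int                # 1-10
    supporting_replays: int  # replays from your own product showing the problem

    @property
    def ice(self) -> int:
        return self.impact * self.confidence * self.ease


def prioritize(hypotheses: List[Hypothesis]) -> List[Hypothesis]:
    """Highest ICE first; ties are broken by evidence strength."""
    return sorted(hypotheses,
                  key=lambda h: (h.ice, h.supporting_replays),
                  reverse=True)


backlog = [
    Hypothesis("Copy competitor paywall layout", 8, 6, 5, supporting_replays=0),
    Hypothesis("Fix keyboard covering email field", 6, 8, 5, supporting_replays=10),
    Hypothesis("Redesign home screen", 7, 3, 2, supporting_replays=2),
]

for h in prioritize(backlog):
    print(f"{h.ice:>4}  {h.supporting_replays:>2} replays  {h.name}")
```

The first two hypotheses tie on ICE, and the one backed by ten replays ranks first.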

Does session replay slow down my app?

A well-built replay SDK adds under 2% CPU overhead and a few hundred kilobytes to app size. UXCam's SDK is built for production use across tens of thousands of apps and uses on-device compression so network impact is minimal. The ROI is massive. Ten minutes of watching usually saves a week of debate.

How do I get stakeholders bought into CRO?

Show, don't tell. Play a 90-second replay of a user rage-tapping through your checkout in the next product review. Numbers convince analysts. Replays convince executives. Teams that make session footage part of the standard cadence, in weekly reviews, OKR updates, and design critiques, tend to get CRO resourcing approved faster.

What's the relationship between CRO and retention?

They are the same thing measured at different time scales. A conversion that doesn't retain is a refund waiting to happen. The operational fix is to define every CRO win as a paired metric: the conversion lift AND the day-30 retention of that cohort. If retention moves with the conversion, you won. If it doesn't, you moved a number without moving the business.
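The paired-metric rule can be written down as a simple check. This is a sketch under stated assumptions: it compares point estimates only, ignores statistical significance, and the tolerance parameter is a hypothetical knob, not a recommendation.

```python
def is_real_win(conv_control: float, conv_variant: float,
                d30_control: float, d30_variant: float,
                max_retention_drop: float = 0.0) -> bool:
    """A win needs a conversion lift AND day-30 retention that holds up."""
    conversion_lifted = conv_variant > conv_control
    retention_held = d30_variant >= d30_control - max_retention_drop
    return conversion_lifted and retention_held


# Hypothetical experiment A: conversion up, day-30 retention steady.
print(is_real_win(0.10, 0.12, d30_control=0.30, d30_variant=0.31))

# Hypothetical experiment B: conversion up, but the new cohort churns.
print(is_real_win(0.10, 0.12, d30_control=0.30, d30_variant=0.22))
```

Experiment B would look like a success on a signup dashboard. Checking the cohort's retention is what separates it from experiment A.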

Can I do mobile CRO without engineering support?

Partially. With autocaptured screens, gestures, and Smart Events in UXCam, a PM or growth marketer can define new funnels, add events, and ship experiments through a feature flag tool without a deploy. The parts that still need engineering are SDK installation, deeper custom events, and any backend-driven variants. Most of the weekly CRO rhythm, though, runs without engineering once the instrumentation is in place.

How does privacy regulation affect mobile CRO?

GDPR in Europe, CCPA in California, and ATT on iOS all constrain what you can capture and how you measure. The practical impact on CRO is that you need consent-aware instrumentation (UXCam supports this natively), you should avoid capturing PII in event properties, and you should assume your attribution data is noisier post-ATT. None of this changes the core CRO discipline, but it does mean you rely more on in-app behavioral signal and less on cross-app attribution than you did pre-2021.

AUTHOR

Silvanus Alt, PhD

Founder & CEO | UXCam

Silvanus Alt, PhD, is the Co-Founder & CEO of UXCam and an expert in AI-powered product intelligence. Trained at the Max Planck Institute for the Physics of Complex Systems, he built Tara, the AI Product Analyst that not only analyzes user behavior but recommends clear next steps for better products.
