TABLE OF CONTENTS

- Key takeaways

- How we evaluated these UX tools

- Overview: the 19 best UX tools for 2026

- Best user research UX tools

- Best wireframing and prototyping UX design tools

- Best flowchart and user flow UX software

- Best collaboration and UX design handoff tools

- 13 Patterns for a Working UX Stack

- Industry-specific considerations

- UX tools by category

- 10 Common UX Stack Mistakes

- A maturity model for UX tooling

- How to choose the right UX tools for your team

- Frequently asked questions

I've spent the last decade watching UX teams burn budget on tool stacks that look good in a demo and fall apart in a sprint. The UX tools that actually move the needle share a few traits: they fit into a real workflow, they produce evidence the rest of the org trusts, and they close the loop between design intent and what users actually do.

This is the list I'd hand a product design lead building a 2026 stack from scratch. Nineteen tools across research, wireframing, prototyping, flowcharts, and handoff, with the trade-offs I see teams hit in practice.

Key takeaways

A modern UX stack needs four layers working together: behavioral research, wireframing, prototyping, and handoff. Skip one and you end up relitigating decisions downstream. The layer most teams still under-invest in is behavioral analytics across both mobile and web. UXCam is installed in 37,000+ products and is the only tool on this list that shows you, across both mobile apps and the web, what users actually did after launch, rather than what testers said they might do.

Figma has effectively consolidated wireframing, prototyping, and handoff for most teams. Specialized tools still win in narrow cases: Axure for complex interactions, Balsamiq for low-fidelity speed, and Sketch for macOS shops with existing ecosystem lock-in. And self-reported feedback from testing panels will always disagree with in-product behavior. Run both, and when they conflict, trust the session.

The single most under-used UX tool category is issue analytics. Rage taps, UI freezes, and crashes tied to replays are where the real friction lives, and it's the category where Recora cut support tickets by 142% after spotting a press-and-hold confusion that no panel test had caught.

How we evaluated these UX tools

I weighted four criteria, in this order. Usefulness counted for 40%: does the tool solve a concrete problem in the UX workflow, or is it a "nice to have" that gets abandoned by sprint three? Usability was 25%, measured as time-to-first-value for a designer who has never opened the tool; anything that needs a week of onboarding loses points. Collaboration was 20%, because real-time multiplayer is table stakes now and exporting PDFs for stakeholders is not a workflow. The final 15% went to integration and the evidence loop: does the tool connect to the rest of the stack (Figma, Jira, Slack) and, critically, does it let you validate decisions against real user behavior?

Pricing and G2 ratings are included for context but weren't scored. Every tool listed has been used by a team I've either worked with or interviewed.
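
If you want to reuse the weighting on your own shortlist, it can be sketched as a small scoring function. The four weights come from the criteria above; the 0-10 rating scale, the function name, and the example ratings are assumptions for illustration, not scores I assigned to any tool on this list.

```python
# Weights taken from the four evaluation criteria; they should sum to 1.0.
WEIGHTS = {
    "usefulness": 0.40,
    "usability": 0.25,
    "collaboration": 0.20,
    "integration": 0.15,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (assumed 0-10 scale) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Hypothetical ratings for an imaginary tool, for illustration only.
example_ratings = {
    "usefulness": 8,
    "usability": 6,
    "collaboration": 9,
    "integration": 7,
}
example_score = weighted_score(example_ratings)
print(f"weighted score: {example_score:.2f}")
```

Because usefulness carries 40% of the weight, a tool that is pleasant to use but solves no concrete problem cannot score well under this rubric.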

Overview: the 19 best UX tools for 2026

For user research I'll cover UXCam, UserTesting, Applause, Lyssna, Hotjar, and Survicate. For wireframing and prototyping: Balsamiq, Adobe XD, Figma, Sketch, and Axure RP. For flowcharts and user flow: Overflow, FlowMapp, Lucidchart, Gliffy, and UXCam Screen Flow. For collaboration and handoff: FigJam, Zeplin, and Miro.

Best user research UX tools

Research tools split into two camps. Moderated panels mean you recruit people and watch them try things. Behavioral analytics means you watch everyone who actually uses the product. Serious teams run both, because panels tell you what people say and analytics tell you what they do. The Nielsen Norman Group has a good taxonomy if you want to map your current coverage against every method available.

1. UXCam

UXCam is a product intelligence and product analytics platform used by 37,000+ products. It covers both mobile apps and the web with equal depth, which matters because most "UX analytics" tools still treat one of those as a second-class citizen.

The reason UXCam sits at the top of my research list is that it gives a UX designer things the other tools on this list can't. Session replay lets you watch real users navigate your product instead of a paid tester pretending to be one, which means you see the hesitations, back-button rage, and fat-finger mistakes that a usability script will never surface. Heatmaps show touch, scroll, and unresponsive-gesture activity, revealing where users tap things that aren't buttons, which is the single most common mobile UX failure I see. Issue analytics automatically surface rage taps, UI freezes, and crashes, and link them to the exact replay; this is how Recora cut support tickets by 142% after realizing users were trying to press-and-hold a control that only accepted taps. And Tara AI processes sessions at scale and tells you what's broken, who it's affecting, and what to do next. That workflow used to be "assign a designer to watch 40 replays on Friday afternoon."

The customer numbers give a sense of the ceiling. Inspire Fitness used UXCam to boost time-in-app by 460% and cut rage taps by 56%. Housing.com grew feature adoption from 20% to 40%. Costa Coffee raised registrations by 15%. These are outcomes you won't get from a moderated testing panel.

Worth knowing: UXCam is not where you run interviews. It's where you find out which interview questions you should have asked. Pair it with a panel tool like UserTesting or Lyssna. It runs as a web dashboard with SDKs for iOS, Android, React Native, Flutter, and web. The free plan includes 10,000 monthly sessions, with paid plans on request. G2 rates it 4.7/5 and Capterra 4.6/5.

2. UserTesting

UserTesting is the default choice when you need to recruit real people to walk through a prototype or a live flow and narrate their thought process. The panel is large, the demographic targeting is solid, and the video output is easy to clip and share. Remote unmoderated tests run overnight, so you wake up to five narrated videos and a list of obvious friction points.

The flip side is that it's enterprise pricing with a learning curve on test design, and testers are aware they're being recorded, so some behaviors skew artificial. Pricing is on request. G2 rates it 4.5/5 and Capterra 4.5/5.

3. Applause

Applause is a premium usability and QA testing platform that pairs a global community of testers with real devices and a dedicated expert to help design studies. It's the right fit for cross-device journey testing where you need to hit specific regions, OS versions, or carrier combos, and the hands-on support makes it solid for regulated or global products.

Where it struggles is daily, iterative cadence. Scheduling and availability constraints with the tester pool mean it's not the right choice if you want to run something quick tomorrow morning. Pricing is on request, and G2 rates it 4.4/5.

4. Lyssna (formerly UsabilityHub)

Lyssna is the tool I recommend to small teams who need fast, cheap directional feedback. It covers first-click tests, five-second tests, preference tests, and card sorts, and it has a 530,000+ person panel built in when you need recruits. The UI is clean and the range of test types is wide, which makes it a good generalist for early-stage work.

The jump from free to the $75/month Basic plan is steep, and panel recruitment fees add up fast if you need a niche audience. G2 rates it 4.5/5 and Capterra 4.7/5.

5. Hotjar

Hotjar is a web-focused analytics tool covering heatmaps, session recordings, on-site surveys, and funnel analytics for websites and responsive web apps. It's easy to set up, works well for marketing sites and e-commerce, and plugs into a wide integration library.

The catch is that it isn't built for mobile apps, and session sampling on lower tiers means you miss edge cases. Customers also regularly cite data overload with no prioritization layer on top. Pricing starts at $32/month. G2 rates it 4.3/5 and Capterra 4.7/5. For a deeper look at alternatives, see our Hotjar alternatives breakdown.

6. Survicate

Survicate runs contextual surveys in-product, on websites, and in mobile apps. You can trigger surveys on specific events, which is far more useful than a generic NPS popup. Targeting is good, customization is flexible, and it supports multiple languages out of the box.

Pricing starts at $119/month, which is steep if you're only sending a handful of surveys a month. A free trial is available. G2 rates it 4.6/5 and Capterra 4.5/5.

Best wireframing and prototyping UX design tools

A quick definition check, because this still trips up new PMs. Wireframes are low-fidelity structural sketches, where the point is to argue about layout rather than pixel color. Prototypes are high-fidelity, clickable representations of the final product that you can user-test.

7. Balsamiq

Balsamiq commits hard to the low-fidelity bit. The deliberately hand-drawn aesthetic keeps stakeholders arguing about structure instead of button radius, which is exactly the argument you want at the wireframe stage. It's rapid, focused, and almost impossible to accidentally over-polish. The moment you need real visual fidelity, you've outgrown it. Pricing starts at $9/month. G2 rates it 4.2/5 and Capterra 4.4/5.

8. Adobe XD

Adobe XD still has loyal users, particularly in shops already on Creative Cloud. It handles component-based design and animated transitions well, and the Adobe ecosystem integration is genuinely deep if you need to work across Photoshop and Illustrator assets.

The honest downside is that real-time multi-editor collaboration was never its strength, and Adobe has clearly deprioritized the product. Release cadence shows it. Pricing starts at $9.99/month. G2 rates it 4.3/5 and Capterra 4.6/5.

9. Figma

Figma is the default. If you're setting up a new team stack in 2026, the question isn't whether to use Figma, it's whether you need anything else in this category at all.

Live multiplayer editing, a design system that actually scales, and prototyping that covers 90% of what most teams need make for a combination that's hard to argue with. Interactive components, conditional logic, and layered overlays give you close-to-real simulations without bouncing to another tool, and dev handoff is strong enough that most teams no longer need a separate handoff product. The trade-offs are modest: advanced prototyping has a learning curve, and very large files can lag. Pricing starts at $12 per editor/month. G2 rates it 4.6/5 and Capterra 4.7/5.

10. Sketch

Sketch pioneered the vector-based design space and still has a clean, opinionated feel that macOS designers swear by. The plugin ecosystem is mature, native performance is fast, and community templates are strong.

What kills it as a team standard is that it's macOS only, which rules it out of any cross-OS org, and real-time collaboration still lags Figma. Pricing starts at $99/year. G2 rates it 4.5/5 and Capterra 4.7/5.

11. Axure RP

Axure RP is the tool enterprise UX teams reach for when they need prototypes that behave like software rather than slideshows. Conditional logic, working form fields, and data-driven interactions all sit inside the tool, and the output doubles as serious UX documentation alongside the prototype itself.

The price of that power is a steep learning curve, and for most consumer product teams it's overkill. Pricing starts at $25 per user/month. G2 rates it 4.2/5 and Capterra 4.4/5.

Best flowchart and user flow UX software

User flows are the bridge between research and design. If you can't map the current flow, you can't defend a change to it. These tools exist because trying to keep flows in Figma files quickly becomes unworkable.

12. Overflow

Overflow imports artboards from Sketch, Figma, and XD, wraps them in device skins, and lets you connect and annotate flows. The self-guided presentation mode makes it genuinely useful for async stakeholder review, and passphrase-protected sharing is a nice touch when you're sending early flows outside the team.

Occasional export format issues crop up, and the user base is small compared to Figma-native flow tools, so the community of plugins and templates is thinner. Pricing starts at $15 per user/month. G2 rates it 4.2/5.

13. FlowMapp

FlowMapp covers user flows, sitemaps, and customer journey maps in a low-fidelity wireframe-style view. It's affordable, the UX-specific templates save time in early-stage planning, and it collaborates reasonably well. Where it runs out of room is on complex diagrams, and the shape library is narrower than Lucidchart's. Pricing starts at $15/month. G2 rates it 4.7/5 and Capterra 4.7/5.

14. Lucidchart

Lucidchart is the grown-up diagramming tool. It isn't UX-specific, but it covers flows, swim lanes, ERDs, and system diagrams with real-time collaboration, and the integrations with Slack, Atlassian, and Google Workspace are genuinely strong. It can feel enterprise-heavy for a small UX team, and complex diagrams occasionally misformat, but the template library makes up for a lot. Pricing starts at $7.95/month. G2 rates it 4.5/5 and Capterra 4.5/5.

15. Gliffy

Gliffy is lightweight diagramming with a strong Atlassian story. If your team lives in Jira and Confluence, Gliffy diagrams embed natively and stay in sync, which is a real productivity win. It's browser-based with a Chrome app for offline editing. The shape library is smaller than Lucidchart's and the advanced features are thinner, so it suits teams whose diagrams are fundamentally simple but need to live next to their tickets. Pricing starts at $8 per user/month. G2 rates it 4.4/5 and Capterra 4.3/5.

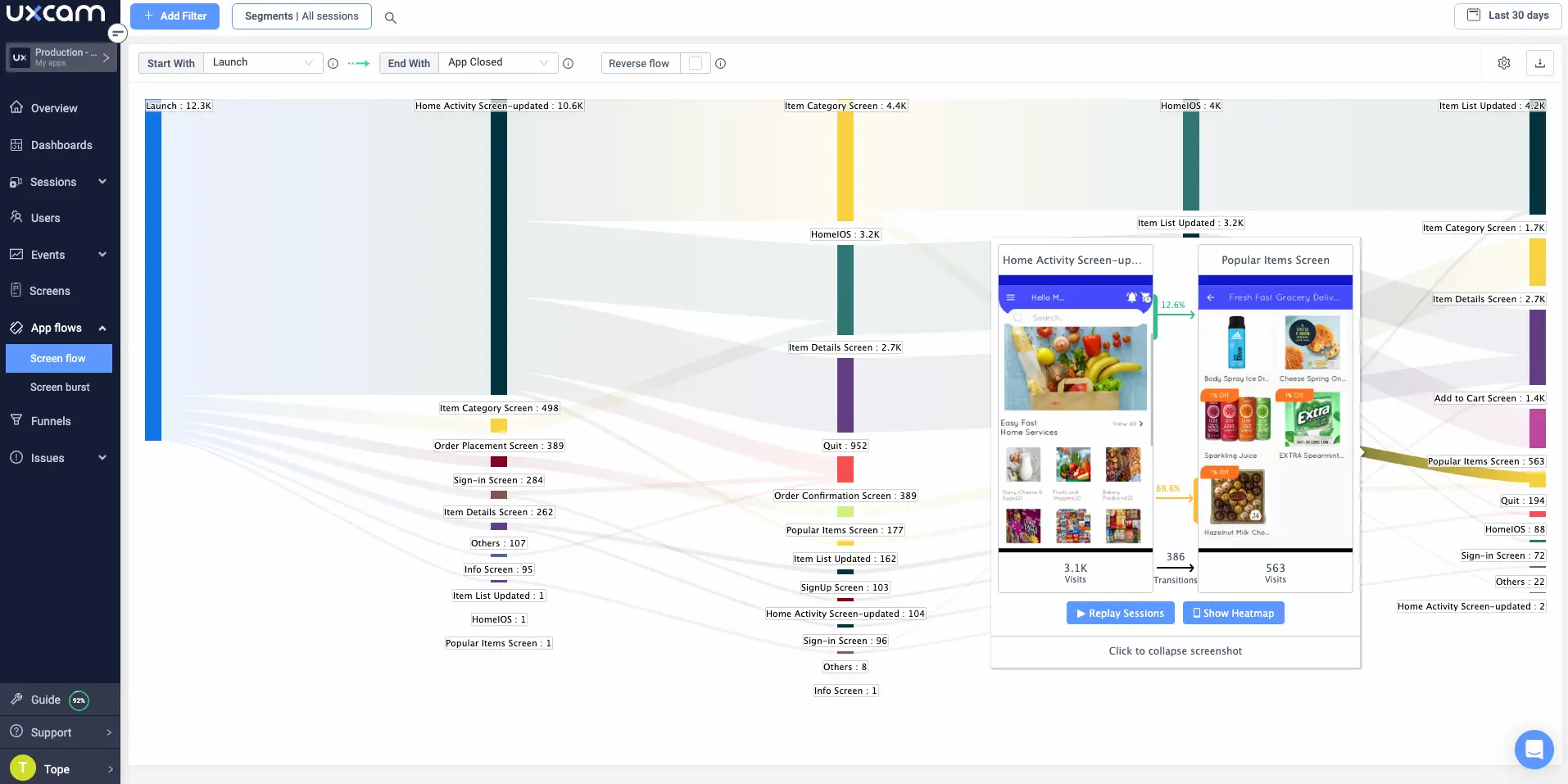

16. UXCam Screen Flow

This is the one flow tool on the list that doesn't ask you to draw anything. UXCam Screen Flow generates the actual user flow from real session data. You get to compare the flow you designed with the flow users are executing, and the gap between those two is usually where the roadmap lives.

Pair Screen Flow with session replay and you can click from any node in the flow straight into a replay of someone taking that path. That's the part of a UX workflow that used to be a guess. The trade-off is that it requires a UXCam SDK install, so it's not a throwaway tool for a single-sprint project. The free plan includes 10,000 monthly sessions, with paid plans on request. G2 rates it 4.7/5 and Capterra 4.6/5.

Best collaboration and UX design handoff tools

17. FigJam

FigJam is Figma's whiteboard, tuned for design sprints, journey maps, affinity diagrams, and voting widgets. If your team is already in Figma, FigJam is the path of least resistance for ideation sessions, with seamless handoff into Figma proper, multiplayer with 24-hour guest access, and a genuinely fun feel to it. The gaps are no offline editing and lighter version history than Miro. Pricing starts at $3 per editor/month. G2 rates it 4.5/5 and Capterra 4.8/5.

18. Zeplin

Zeplin is built for clean design-to-dev handoff: specs, measurements, color tokens, asset exports, and code snippets for iOS, Android, and web. The developer handoff experience is genuinely excellent, design system support is solid, and Jira and Slack integrations are strong.

The honest caveat is that Figma's built-in Dev Mode has eaten a lot of Zeplin's reason to exist. It's still worth it if you're on Sketch or XD, or if your engineering team specifically prefers Zeplin's format. Free for one project, paid from $19/month. G2 rates it 4.4/5 and Capterra 4.4/5.

19. Miro

Miro is the infinite whiteboard most cross-functional teams settle on. Empathy maps, journey maps, roadmapping, sprint planning, and workshops all fit comfortably. The template library is huge, it works across web, desktop, and mobile, and it integrates with most of your stack. Massive boards can lag, and it isn't a handoff tool, so pair it with Figma or Zeplin downstream. Free for three boards, paid from $8 per user/month. G2 rates it 4.7/5 and Capterra 4.7/5.

13 Patterns for a Working UX Stack

Picking good tools is the easy part. Getting a team to use them consistently, and to feed the outputs into real decisions, is where most stacks quietly die. These are the patterns I see working, and the traps that keep catching teams.

1. Start with the evidence gap, not the design tool

Most teams begin a tool audit by debating Figma versus Sketch. That's the wrong first question. Start by asking what decisions you couldn't defend last quarter. If the answer is "we don't actually know if the new onboarding moved activation," the gap is behavioral analytics, not design software. Reforge has a useful framing on evidence-driven product decisions that applies directly here.

2. One tool per job, not one tool per feature

Every category on this list has three or four overlapping options. Pick one per category and force it to earn its renewal. Running both Hotjar and UXCam, or both Miro and FigJam, is almost always a sign of two teams buying the same thing without talking.

3. Sample rate matters more than feature count

Cheap session-recording tiers often sample 10-25% of traffic. That sounds fine until a PM asks "show me the users who hit the crash on iOS 17," and the replay isn't there. UXCam captures 100% of sessions on its paid tiers, which is the difference between a debugging tool and a statistics exercise.
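
A back-of-the-envelope check shows why. Assuming each session is sampled independently at a fixed rate (a simplification; real tools may sample per user or per day), the chance that at least one affected session was recorded is 1 - (1 - rate)^n. The function name and the choice of five affected sessions are illustrative assumptions.

```python
def chance_replay_exists(sample_rate: float, affected_sessions: int) -> float:
    """Probability that at least one affected session was recorded,
    assuming each session is sampled independently at `sample_rate`."""
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    if affected_sessions < 0:
        raise ValueError("affected_sessions must not be negative")
    return 1.0 - (1.0 - sample_rate) ** affected_sessions


# Compare the sampling rates mentioned above with full capture.
for rate in (0.10, 0.25, 1.00):
    p = chance_replay_exists(rate, affected_sessions=5)
    print(f"sample rate {rate:.0%}: {p:.0%} chance a replay exists")
```

If only five sessions hit the crash, 10% sampling leaves roughly a 41% chance that any replay exists at all, and 25% sampling roughly 76%. Rare bugs are exactly the ones sampling loses.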

4. Prototype testing will always disagree with production behavior

Panel testers are in "test mode." Real users are distracted, on flaky networks, using a battered device with 2% battery. The Baymard Institute has years of evidence that production behavior consistently reveals friction that moderated tests miss. Treat prototype tests as directional, production sessions as truth.

5. Watch five replays before you open a design file

I ask every designer on my teams to do this before starting any feature: watch five session replays of users doing the adjacent flow. It takes 15 minutes and kills at least one bad assumption per sprint.

6. Tie every survey to an event

Generic "How satisfied are you?" surveys produce noise. Surveys triggered by a specific event, like "you just abandoned checkout" or "you just used the new filter three times," produce decisions. Survicate and UXCam both support event-triggered targeting.
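
The triggering logic itself is simple. Here is a minimal sketch of the idea, not Survicate's or UXCam's actual API: the event names, survey IDs, and the `SurveyRule` type are all hypothetical, and a real integration would be configured in the vendor's dashboard or SDK.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SurveyRule:
    event_name: str  # hypothetical event name emitted by your app
    min_count: int   # how many times it must occur in the session
    survey_id: str   # hypothetical survey identifier


# Illustrative rules mirroring the two examples in the text.
RULES = [
    SurveyRule("checkout_abandoned", 1, "why-did-you-leave"),
    SurveyRule("new_filter_used", 3, "filter-feedback"),
]


def surveys_to_trigger(session_events: list[str]) -> list[str]:
    """Return the surveys whose trigger event occurred often enough."""
    counts = Counter(session_events)
    return [
        rule.survey_id
        for rule in RULES
        if counts[rule.event_name] >= rule.min_count
    ]


print(surveys_to_trigger(["screen_view", "new_filter_used"] * 3))
```

The point of the threshold is that the survey fires only once the user has enough experience with the feature to have an opinion about it.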

7. Don't let handoff become the bottleneck

Design-to-dev handoff is where 20% of a sprint can disappear if the tool chain is wrong. Figma Dev Mode covers most teams now. If you're still exporting static Zeplin links and pasting them into Jira tickets, your stack is costing you days per release.

8. Quantify frustration signals, don't just count events

Traditional analytics tools count taps. Issue analytics counts frustrated taps: rage taps, repeated back-navigation, UI freezes. This is the signal Recora used to find the press-and-hold confusion that cut their tickets by 142%.
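
To make the distinction concrete, here is a rough sketch of how a rage-tap heuristic could work. This is not UXCam's detection algorithm; the thresholds (three taps, one second, 40 points) and all names are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Tap:
    t: float  # seconds since session start
    x: float  # screen coordinates in points
    y: float


def count_rage_taps(
    taps: list[Tap],
    min_taps: int = 3,      # assumed threshold
    window_s: float = 1.0,  # assumed time window
    radius: float = 40.0,   # assumed distance from the first tap
) -> int:
    """Count bursts of at least `min_taps` taps that land within `radius`
    of the burst's first tap and within `window_s` seconds of it."""
    ordered = sorted(taps, key=lambda tap: tap.t)
    bursts = 0
    i = 0
    while i < len(ordered):
        first = ordered[i]
        j = i + 1
        while (
            j < len(ordered)
            and ordered[j].t - first.t <= window_s
            and hypot(ordered[j].x - first.x, ordered[j].y - first.y) <= radius
        ):
            j += 1
        if j - i >= min_taps:
            bursts += 1
            i = j  # skip past the burst so it is counted once
        else:
            i += 1
    return bursts


session = [Tap(0.0, 100, 100), Tap(0.2, 102, 101), Tap(0.4, 99, 100), Tap(5.0, 300, 300)]
print(count_rage_taps(session))
```

An event counter would report four taps for that session. The heuristic reports one frustrated burst and one ordinary tap, which is the number a designer can act on.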

9. Make Tara AI or equivalent review a weekly ritual

The old model of "designer watches 40 replays on Friday" doesn't scale. Tara AI processes sessions at scale and surfaces what changed and why. Put a 30-minute weekly slot on the calendar to review its findings with PM and engineering.

10. Build a research repository, not a folder of PDFs

Orphaned Lookback or UserTesting videos are effectively garbage within six weeks. Tag findings by feature, persona, and flow in a single searchable system. Dovetail and Condens both do this well.

11. Design for the P95 device, not your MacBook

Mobile designers consistently test on flagship iPhones. Real users are on mid-range Androids with 3GB of RAM. Android Studio's device profile emulator and UXCam's device segmentation will both show you how the other half lives.

12. Kill tools that don't feed the next stage

If a tool produces output that doesn't directly inform the next stage of the workflow, it's waste. A whiteboarding session that ends in a photograph of a whiteboard has failed. A research report that ends as a PDF attachment has failed. Every stage should hand off a live, linkable, searchable artifact.

13. Budget for tool rituals, not just licenses

A tool without a ritual is shelfware. Put replay review on the sprint kickoff agenda. Put heatmap reads on every feature post-mortem. Put survey results in the Monday standup. The license fee is cheap; the missing calendar invite is what kills adoption.

Industry-specific considerations

The same stack doesn't fit every vertical. Here's how I think about the adjustments by industry.

Fintech and banking

Compliance and session-recording privacy are the first questions. You need a tool that masks PII by default, supports on-device masking, and gives you a clean audit trail. UXCam's automatic sensitive-data masking and SOC 2 posture matter more here than in most verticals. Expect higher scrutiny on moderated research consent flows too. FINRA's guidance on digital experience testing is worth reading before you pick a panel tool.

Healthcare and healthtech

HIPAA and the equivalent EU rules narrow the tool list sharply. Behavioral analytics has to be configurable to exclude PHI at the SDK layer, not after the fact. Prototype testing with real patients requires IRB-adjacent process. Most teams I've seen in this space run UXCam or similar with aggressive masking, plus a specialist research partner rather than an open panel.

E-commerce and retail

The dominant question is funnel friction, especially on mobile web and apps. Heatmaps, scroll maps, and funnel analytics matter more than prototyping sophistication. Costa Coffee's 15% registration lift came from exactly this kind of funnel work. The Baymard Institute's checkout usability benchmarks are the reference database for this vertical.

SaaS and B2B products

Activation and feature adoption are the money metrics. Housing.com grew feature adoption from 20% to 40% using UXCam to find the drop-off points in the new-user flow. Add to the stack a strong in-product survey tool for activation feedback, and a flow-mapping tool that can segment by plan tier.

Media, streaming, and gaming

Session length, engagement, and rage-tap data matter most. Inspire Fitness boosted time-in-app by 460% and cut rage taps by 56%, which is exactly the pattern I see repeatedly in engagement-driven products. Prototyping matters less here than production-behavior analytics, because the "feel" of the product is impossible to test accurately in a prototype.

Travel, mobility, and on-demand

These apps live or die by cross-device, cross-network, cross-region reliability. Applause's device diversity matters here in a way it doesn't for a pure SaaS product. Pair that with UXCam for production anomaly detection, because the edge cases in this space (weak signal, GPS drift, background app kills) are where users actually churn.

UX tools by category

A quick reference if you're building a shortlist.

Behavioral analytics and session replay: UXCam, Hotjar, FullStory, LogRocket.

Moderated and unmoderated user testing: UserTesting, Lyssna, Applause, Maze, UserZoom.

In-product surveys and feedback: Survicate, Sprig, Qualaroo.

Wireframing and design: Figma, Sketch, Balsamiq, Adobe XD, Penpot.

Advanced prototyping: Axure RP, ProtoPie, Framer.

Flow mapping and diagramming: Lucidchart, FlowMapp, Overflow, Gliffy, Whimsical, UXCam Screen Flow.

Whiteboarding and workshops: Miro, FigJam, Mural.

Design-to-dev handoff: Figma Dev Mode, Zeplin, Avocode.

Research repositories: Dovetail, Condens, EnjoyHQ.

Accessibility testing: axe DevTools, Stark, WAVE.

10 Common UX Stack Mistakes

Buying Figma and calling the stack done

Figma is necessary but not sufficient. Without a research layer and a behavioral analytics layer, you're shipping educated guesses. Add at least one panel tool and one production analytics tool.

Treating session replay as a debugging-only tool

Engineering reaches for replay when a bug report comes in. That's fine, but designers and PMs should be in the replay tool more often than engineering. The cultural shift is the win.

Confusing event analytics with UX analytics

Mixpanel and Amplitude tell you what users clicked. They don't tell you why users hesitated for 12 seconds before they clicked. You need both event data and session context to make a real decision.

Running surveys without a sample-size plan

Three NPS responses are not a signal. Decide the minimum N per segment before you launch the survey, and don't make decisions below that threshold.
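
One common way to set that minimum is the standard sample-size formula for estimating a proportion, n = z² · p(1 − p) / e². The sketch below assumes a simple random sample from a large population, 95% confidence (z = 1.96), and the worst-case p = 0.5. NPS itself is a difference of two proportions, so treat this as a floor for something like "share of promoters" rather than an exact NPS calculation.

```python
import math


def min_sample_size(margin_of_error: float, z: float = 1.96, p: float = 0.5) -> int:
    """Minimum responses needed to estimate a proportion within
    +/- `margin_of_error`, assuming a simple random sample."""
    if not 0.0 < margin_of_error < 1.0:
        raise ValueError("margin_of_error must be between 0 and 1")
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    return math.ceil(z**2 * p * (1.0 - p) / margin_of_error**2)


for margin in (0.05, 0.10):
    print(f"+/- {margin:.0%}: at least {min_sample_size(margin)} responses")
```

A margin of plus or minus 5 points needs 385 responses per segment, and plus or minus 10 points needs 97. Either way, three is nowhere close.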

Letting tool sprawl hide ownership

If nobody can tell you which PM owns the UserTesting account, nobody is running the UserTesting account. Assign a DRI for every tool, and put a quarterly utilization review on their OKRs.

Skipping the accessibility audit

Designers and engineers both assume "the other team" handles accessibility. Build it into the tool chain: Stark in Figma, axe DevTools in the browser, manual screen-reader passes on critical flows. The WCAG 2.2 guidelines are the baseline, not a stretch goal.

Designing on desktop for a mobile-heavy user base

I still see teams designing 1440px screens for products where 70% of traffic is mobile. Set the default Figma frame to the actual P50 device in your analytics, and force every review to happen on a real phone.
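
Finding the P50 device is a weighted-median calculation over whatever viewport data your analytics tool exports. A minimal sketch, assuming you can get session counts per viewport width; the numbers below are made up for illustration.

```python
def median_viewport_width(sessions_by_width: dict[int, int]) -> int:
    """Return the viewport width used by the median (P50) session."""
    total = sum(sessions_by_width.values())
    if total <= 0:
        raise ValueError("need at least one session")
    running = 0
    for width in sorted(sessions_by_width):
        running += sessions_by_width[width]
        if running * 2 >= total:  # crossed the halfway point
            return width
    raise AssertionError("unreachable: running total always reaches total")


# Hypothetical export: viewport width in px -> number of sessions.
example_sessions = {360: 4200, 390: 2500, 414: 900, 1440: 2400}
print(median_viewport_width(example_sessions))
```

With this made-up data the median session is on a 390px-wide phone, so that is the frame size reviews should default to, not 1440px.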

Over-investing in prototyping, under-investing in production monitoring

A prototype that tests beautifully and a production flow with a 30% drop-off are a very common pairing. You found the issues that a tester could surface. You didn't find the ones that only appear at scale, on real devices, over real networks.

Ignoring the cost of switching

The cheapest tool in year one can be the most expensive over three years if you outgrow it. Budget for the stack you'll need at 3x current scale, not 1x.

Not closing the loop back to research

Shipping is not the end of the cycle. A feature that ships is a hypothesis to test. If you don't have a ritual for reviewing behavior 30 and 90 days post-ship, you're not doing UX research, you're doing UX opinion.

A maturity model for UX tooling

Here's the playbook I give leaders who ask "where should we be?" Match your situation to a level, then invest in the next one.

Level 1: Ad hoc

One designer, Figma, occasional user interviews over Zoom. No repeatable research process, no behavioral analytics. Fine for a pre-seed startup. The upgrade path is to add a free-tier behavioral analytics tool (UXCam or Hotjar) before you ship to paying customers.

Level 2: Instrumented

Figma for design, FigJam or Miro for ideation, a basic analytics tool in place, occasional Lyssna or UserTesting studies. You have data but no ritual for using it. The upgrade path is to add a research repository, install session replay, and assign a DRI for weekly replay reviews.

Level 3: Evidence-driven

Every feature ships with a hypothesis, a measurement plan, and a post-launch review. Session replay, heatmaps, and issue analytics are on every PM's weekly agenda. Panel research fills the gaps behavioral analytics can't reach. Tara AI or equivalent is processing sessions at scale. Most 50-500 person companies should be here.

Level 4: Operational

Research and analytics are infrastructure. Every designer and PM has on-demand access to real-user behavior. Frustration signals are tracked as first-class metrics alongside revenue and retention. Accessibility is automated in CI. The tool stack is consolidated, documented, and has an owner. This is where companies like Inspire Fitness, Housing.com, and Costa Coffee are operating when they post the kind of numbers they've posted.

How to choose the right UX tools for your team

Four questions I ask every team evaluating a UX stack.

Where is the evidence gap? Most teams over-invest in design tools and under-invest in the research and analytics that tell them whether the designs worked. If you can't answer "how do we know this worked after ship?" the gap is behavioral analytics.

Who else touches this work? Your tool has to survive PMs, engineers, and executives opening it, which usually means browser-based, real-time, and link-shareable.

Does it close the loop? Research to design to prototype to ship to measure, back to research. If a tool doesn't feed the next stage, it adds friction. Figma to Zeplin to Jira works; Figma to PDF to email does not.

What's the cost of switching? Sketch-to-Figma migrations are expensive, so pick tools you can live with for three years, not three sprints.

For most product teams in 2026, the stack I'd recommend looking hard at is Figma for design, FigJam or Miro for ideation, Lyssna or UserTesting for panels, and UXCam for behavioral analytics, issue analytics, and Tara AI-driven insight synthesis across both your mobile apps and your web product. That combination gets you from whiteboard to validated, measured ship.

Frequently asked questions

What are UX tools?

UX tools are the software applications designers, researchers, and product teams use across the user experience workflow. That workflow typically covers five stages: user research, information architecture and user flow mapping, wireframing, prototyping, and post-launch optimization. Different tools specialize in different stages, and most mature teams run a stack of 4-7 tools rather than expecting one product to cover everything. The right combination depends on whether you're building web, mobile, or both, and whether your gap is in generating design artifacts or validating them against real user behavior.

Which UX tool is best for mobile app design?

For the design work itself, Figma has become the default for mobile app screens, components, and prototypes thanks to real-time collaboration and strong developer handoff. For understanding how real users actually interact with your shipped mobile app, UXCam is purpose-built for that job, with session replay, heatmaps, issue analytics, and Tara AI running natively on iOS, Android, React Native, and Flutter. Most mature mobile teams pair Figma for design with UXCam for behavioral validation, because prototype testing alone will never surface the rage taps, UI freezes, and edge-case crashes that happen in production.

How many UX tools does a typical team need?

Most effective teams I've worked with run between four and seven tools: one design tool (usually Figma), one whiteboarding tool (FigJam or Miro), one panel-based research tool (UserTesting or Lyssna), one behavioral analytics tool (UXCam for the full product, Hotjar for web-only sites), and optionally a dedicated flowchart tool and a handoff tool. Adding more than that usually means tool sprawl with overlapping features and nobody fully using any of them. The goal is one tool per distinct job, not one tool per feature.

What's the difference between user research tools and product analytics tools?

User research tools like UserTesting, Lyssna, and Applause rely on recruited participants who know they're being observed. You get narrated walkthroughs, survey responses, and stated preferences, which are excellent for exploring new concepts and catching obvious usability issues before launch. Product analytics tools like UXCam observe everyone who actually uses your live product, anonymously and at scale, so you see real behavior including frustration signals like rage taps and UI freezes. Research tools tell you what people say they'll do. Product analytics tell you what they actually did. Serious teams run both.

Are free UX tools good enough for professional work?

Free tiers have improved dramatically. Figma's free plan covers most solo and small-team design work. UXCam's free plan includes 10,000 monthly sessions with full feature access, which is enough for a team validating an MVP or a small app. Lyssna and Miro both offer usable free tiers. Where free plans break down is at scale: once you're onboarding a team, running weekly research, or analyzing hundreds of thousands of sessions, you'll hit collaboration limits, session caps, or integration walls. Start free, upgrade when the constraint actually starts slowing you down.

How do I justify UX tool spend to finance?

Tie each tool to a revenue or cost metric. Design tools reduce rework cycles. Research tools reduce the cost of shipping the wrong thing. Behavioral analytics tools like UXCam show up most directly in business metrics: Recora reduced support tickets by 142% after using session replay to spot a press-and-hold misunderstanding, Inspire Fitness boosted time-in-app by 460% and cut rage taps by 56%, Housing.com grew feature adoption from 20% to 40%, and Costa Coffee raised registrations by 15%. Frame every tool renewal around the decisions it enabled in the last quarter, not its feature list.

Can one tool replace a full UX stack?

No, and anyone selling you that is selling you a demo. Design tools, panel research, behavioral analytics, and handoff are genuinely different jobs with different data models and different primary users. Figma is the closest anyone has come to consolidating design plus prototyping plus handoff, and even Figma sits alongside a research tool and an analytics tool in every mature team I've seen.

How do I pick between UXCam and Hotjar?

If you're web-only and your priority is marketing site optimization, Hotjar is a reasonable default. If you ship a mobile app, or a product that spans mobile and web, UXCam is the right call because it was built from day one to handle both with equal depth. The other differentiators are issue analytics, Tara AI, and 100% session capture on paid tiers, all of which matter more as you scale past the first few thousand users.

How long does it take to roll out a new UX analytics tool?

SDK install typically takes under an hour for mobile and a few lines of JS for web. Getting your team to actually use it daily takes three to six weeks: you need to assign an owner, run a kickoff training, bake replay review into a weekly ritual, and integrate the tool with Slack or Jira so findings surface where your team already works. The tools that die are the ones that get installed and then never attached to a ritual.

Should designers or PMs own the analytics tool?

Both, with a clear DRI. In practice, the product manager usually owns the account and the roadmap questions, while designers use it daily to inform design decisions. Engineering uses it for debugging. The key is avoiding the pattern where nobody owns it because everyone does.

Do I need a flow-mapping tool if I already have Figma?

For early-stage planning, Figma plus FigJam covers it. Where a dedicated flow tool earns its place is when you want flows synchronized with real user behavior. UXCam Screen Flow generates the actual flow users are executing, which is different from the flow you designed. Comparing the two is where the unexpected roadmap items come from.
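To make the designed-versus-actual comparison concrete, here is a minimal Python sketch. It assumes you can export each session as an ordered list of screen names; the function names, screen names, and sample sessions are all hypothetical, and this is not how UXCam Screen Flow works internally. It counts the screen-to-screen transitions users actually made and lists the ones your designed flow never anticipated.

```python
from collections import Counter


def observed_transitions(sessions):
    """Count screen-to-screen transitions across all sessions."""
    counts = Counter()
    for screens in sessions:
        # Pair each screen with the one that followed it in the session.
        for src, dst in zip(screens, screens[1:]):
            counts[(src, dst)] += 1
    return counts


def unexpected_transitions(sessions, designed_flow):
    """Return transitions users made that are absent from the designed flow,
    most frequent first (ties broken alphabetically)."""
    designed = set(zip(designed_flow, designed_flow[1:]))
    counts = observed_transitions(sessions)
    return sorted(
        ((edge, n) for edge, n in counts.items() if edge not in designed),
        key=lambda item: (-item[1], item[0]),
    )


# Hypothetical designed flow and exported sessions, for illustration only.
designed_flow = ["Home", "Search", "Product", "Cart", "Checkout"]
sessions = [
    ["Home", "Search", "Product", "Cart", "Checkout"],
    ["Home", "Search", "Product", "Search", "Product", "Cart"],
    ["Home", "Product", "Cart", "Home"],
]

surprises = unexpected_transitions(sessions, designed_flow)
for (src, dst), n in surprises:
    print(f"{src} -> {dst}: {n} session transition(s) not in the designed flow")
```

Transitions such as Product back to Search, or Cart back to Home, are the kind of detours worth a closer look in replay before they turn into roadmap items.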

What's the single best investment a small team can make?

Install a free behavioral analytics tool before you ship to your first 1,000 users. The cost is zero, the install is an hour, and the first time you watch a new user try to use the product you built, it will reshape how you think about the next sprint. Everything else on this list can wait until you have that evidence layer in place.

How do I deal with privacy and GDPR concerns when using session replay?

Every credible session-replay vendor now supports automatic masking of sensitive fields (passwords, card numbers, PII) at the SDK layer, before data leaves the device. Make sure your tool is SOC 2 compliant, supports data residency options if you're serving EU users, and can be configured to mask custom selectors. The ICO's guidance on web analytics and cookies is the baseline for UK teams; for EU teams, EDPB guidance applies.
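As a rough illustration of what masking before data leaves the device means, the sketch below redacts sensitive values from an event payload before it would be sent. It is a simplified assumption, not any vendor's SDK API: real session-replay SDKs typically mask at the view or selector level, and the key names, card-number pattern, and `mask_event` helper here are hypothetical.

```python
import re

# Hypothetical list of field names that must never leave the device unmasked.
SENSITIVE_KEYS = {"password", "card_number", "email"}

# Rough pattern for 13 to 19 digit card numbers, allowing spaces or hyphens.
CARD_PATTERN = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")

MASK = "***"


def mask_event(event, sensitive_keys=SENSITIVE_KEYS):
    """Return a copy of the event with sensitive values redacted."""
    masked = {}
    for key, value in event.items():
        if key.lower() in sensitive_keys:
            # Known sensitive field: drop the value entirely.
            masked[key] = MASK
        elif isinstance(value, str):
            # Free text: redact anything that looks like a card number.
            masked[key] = CARD_PATTERN.sub(MASK, value)
        else:
            masked[key] = value
    return masked


# Hypothetical event, for illustration only.
event = {
    "screen": "Checkout",
    "password": "hunter2",
    "note": "paid with 4111 1111 1111 1111 today",
    "taps": 3,
}
safe_event = mask_event(event)
print(safe_event)
```

The point of the design is the ordering: redaction happens on the client, so the unmasked value is never transmitted or stored. When you evaluate a vendor, confirm that its masking runs at this stage rather than server-side.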

How does AI change the UX tool stack in 2026?

Two main ways. First, AI analyst layers like Tara AI turn session-replay libraries from "40 videos I'll never watch" into "here are the three issues affecting your iOS users this week." Second, AI-assisted design tools are compressing the wireframe-to-prototype cycle. What hasn't changed: AI can synthesize evidence, but it can't generate evidence you didn't collect. A design team that skips instrumentation still has nothing for the AI to analyze.

What's the one tool I should add if I'm not using anything like it today?

Behavioral analytics with session replay and issue analytics. If you're not watching real sessions of real users, you're guessing. Every other tool on this list is in service of decisions that this one category lets you validate.

AUTHOR

Silvanus Alt, PhD

Founder & CEO | UXCam

Silvanus Alt, PhD, is the Co-Founder & CEO of UXCam and an expert in AI-powered product intelligence. Trained at the Max Planck Institute for the Physics of Complex Systems, he built Tara, the AI Product Analyst that not only analyzes user behavior but also recommends clear next steps for better products.

