LogRocket vs Sentry: Full Comparison 2026

TABLE OF CONTENTS

- Key takeaways

- LogRocket vs Sentry at a glance

- How I evaluated LogRocket vs Sentry

- What is LogRocket?

- What is Sentry?

- Sentry pricing explained

- Key differences between LogRocket and Sentry

- 15 Patterns When Choosing LogRocket vs Sentry

- Industry-specific considerations

- Tools by category

- Common mistakes teams make

- Observability Maturity Model

- Where Both Tools Fall Short for Mobile

- The alternative: UXCam for mobile apps and the web

- Which tool should you pick?

- AI Session Analysis Alongside Both Tools

LogRocket and Sentry get compared a lot but solve genuinely different problems. LogRocket pairs session replay with frontend logs and network requests, which makes it strong for diagnosing the user-facing impact of frontend bugs. Sentry focuses on error tracking and performance monitoring across both frontend and backend, which makes it strong for catching the bugs themselves before they impact many users. Most mature production teams run both eventually. The question is sequencing.

Here's the comparison and how to think about the choice:

- Direct feature-by-feature comparison, with pricing

- 8 use cases where each tool clearly wins

- Migration considerations and how to combine them effectively

If you have to pick one first, the answer depends on which problem hurts more right now: frontend bugs surface faster in LogRocket, system-level errors surface faster in Sentry.

Key takeaways

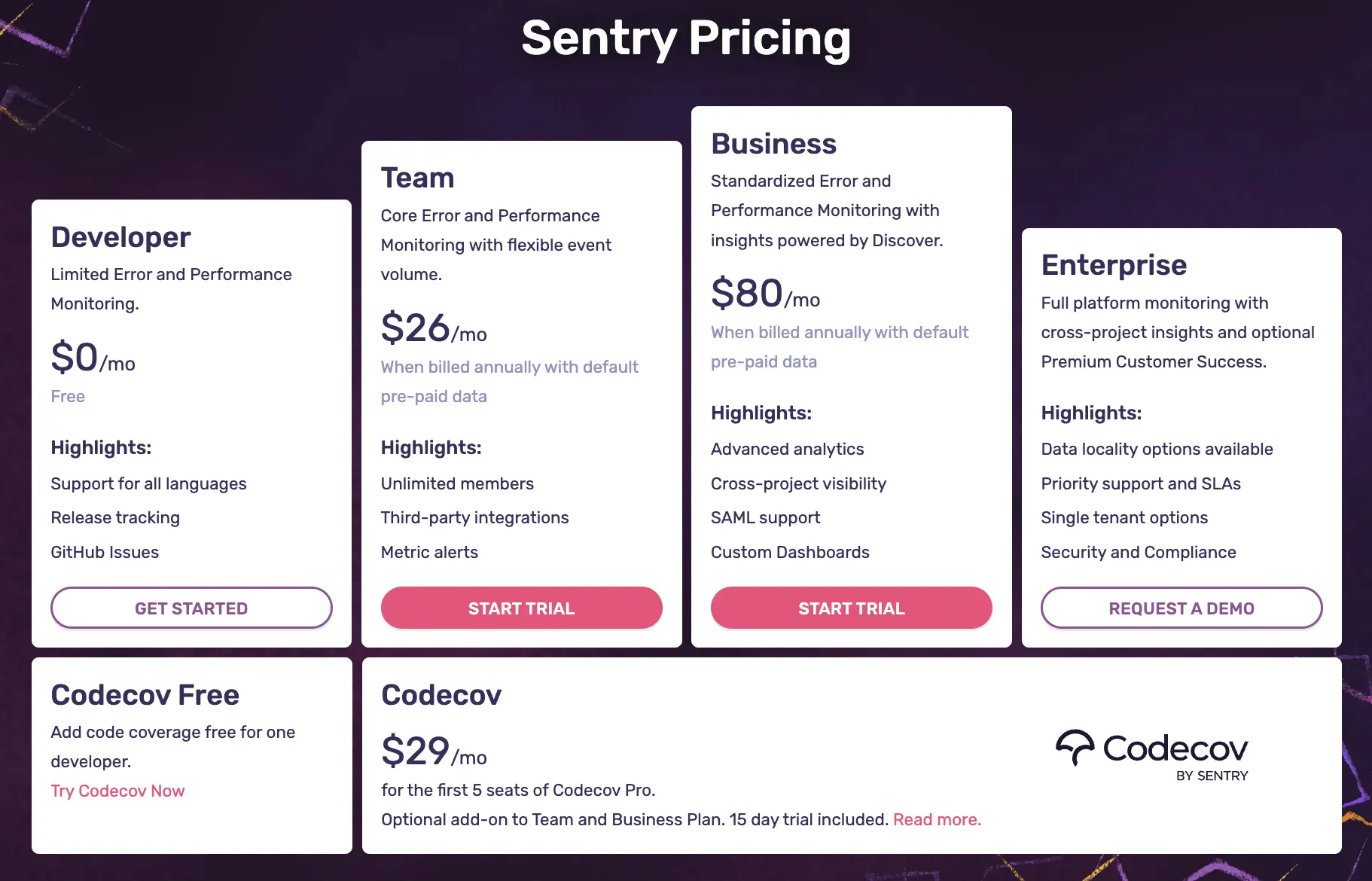

Sentry is an open-source error monitoring and APM tool. Best for backend and full-stack exception tracking. Sentry pricing starts at $26/month (Team) and $80/month (Business), with pay-as-you-go overages that can balloon fast.

LogRocket is a session replay and frontend monitoring tool for web and hybrid apps. Paid plans run from $99 to $460+ per month depending on sessions and seats.

The tools overlap less than the marketing suggests. Sentry is stronger at stack traces and exception fingerprinting. LogRocket is stronger at replay-driven UX debugging on the web.

UXCam is installed in 37,000+ products. Customer outcomes include Recora reducing support tickets by 142% after spotting a press-and-hold UI issue, and Costa Coffee lifting registrations by 15% after fixing a funnel drop.

LogRocket vs Sentry at a glance

| Criterion | LogRocket | Sentry |

|---|---|---|

| Primary use case | Frontend UX debugging via session replay | Error tracking and APM across the stack |

| Session replay | Yes, proprietary DOM capture | Yes, via rrweb library |

| Error tracking | Yes, with frontend focus | Yes, deep stack traces, fingerprinting |

| Backend monitoring | Limited | Core strength, 100+ SDKs |

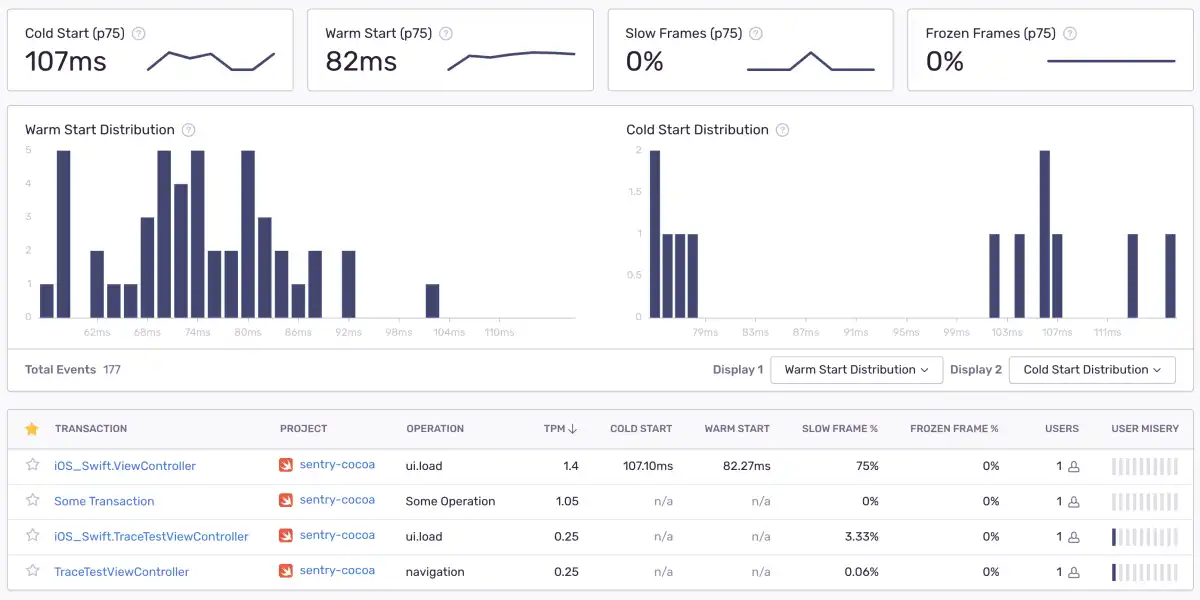

| Mobile SDKs | iOS, Android, React Native (basic) | iOS, Android, React Native, Flutter |

| Open source | No | Yes |

| Starting paid price | $99/month (Team) | $26/month (Team) |

| Free tier | Yes, limited | Yes, 5K errors, 50 replays |

| G2 rating | 4.6 / 5 | 4.5 / 5 |

How I evaluated LogRocket vs Sentry

I weighted five criteria based on the buying conversations I keep seeing in product and engineering teams:

Depth of insight (30%). Does the tool actually tell you why a user got stuck or why code failed, or does it just log an event?

Pricing transparency and scale economics (25%). Does the published price match what teams pay at 1M events or 100K sessions?

Mobile readiness (20%). Native SDKs, performance overhead, offline capture.

Integrations and workflow fit (15%). Issue trackers, Slack, data warehouses, CI/CD.

Time to insight (10%). How long from install to first actionable finding.

Everything below is measured against those five weights. I pulled pricing from public pages, cross-referenced G2 reviews and Gartner Peer Insights, and triangulated against invoices and usage data from teams I've worked with directly.
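
To make the weighting concrete, here is a minimal sketch of how the five criteria could be rolled into a single score. The weights come from the list above; the `weighted_score` helper and the example scores are hypothetical placeholders for illustration, not the ratings I assigned to either tool.

```python
# Weights from the five evaluation criteria above; they sum to 1.0.
WEIGHTS = {
    "depth_of_insight": 0.30,
    "pricing_transparency": 0.25,
    "mobile_readiness": 0.20,
    "integrations": 0.15,
    "time_to_insight": 0.10,
}


def weighted_score(scores: dict[str, float]) -> float:
    """Combine per-criterion scores (e.g. on a 0-10 scale) into one weighted total."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical scores for an imaginary tool, for illustration only.
example_scores = {
    "depth_of_insight": 8,
    "pricing_transparency": 6,
    "mobile_readiness": 4,
    "integrations": 7,
    "time_to_insight": 9,
}

print(round(weighted_score(example_scores), 2))
```

Scoring each candidate with the same function keeps the comparison honest: a tool that dazzles on one low-weight criterion cannot outrank one that is solid on the heavily weighted ones.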

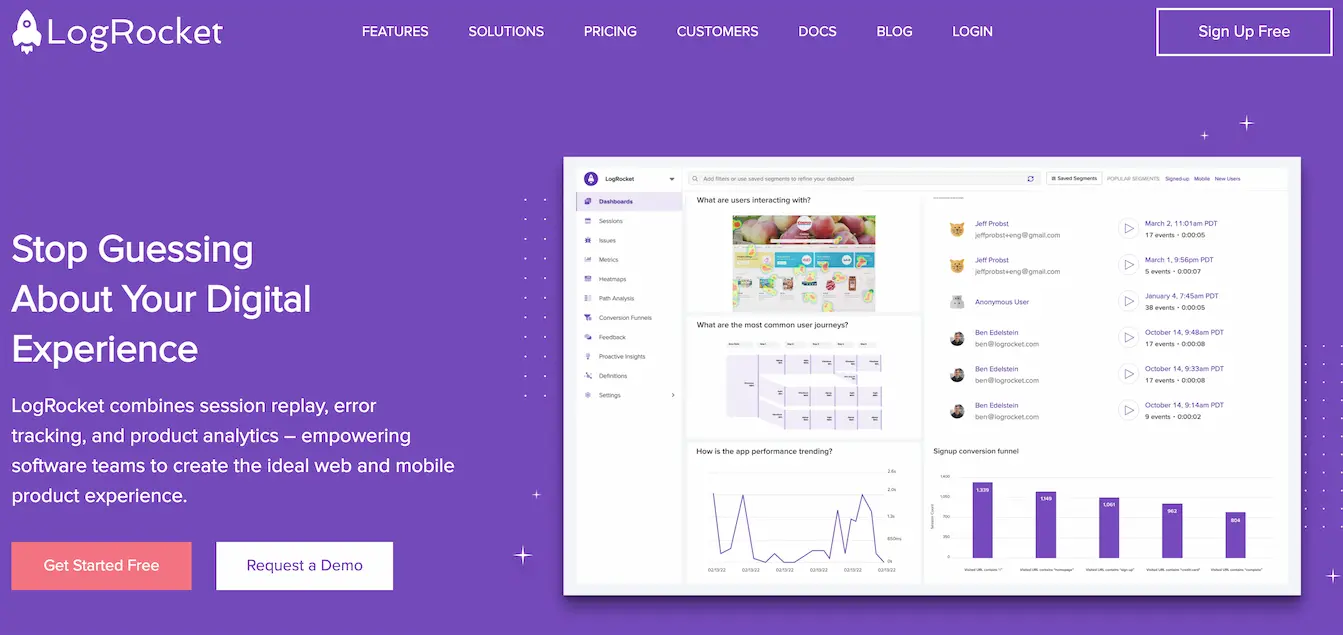

What is LogRocket?

LogRocket is a frontend monitoring and session replay platform founded in 2015. It records every user session on your site or app, syncs the replay with network requests, console logs, and Redux state, and lets developers scrub through the exact moment a user got frustrated. It raised a $25M Series C in 2022 co-led by Delta-v Capital and Battery Ventures.

LogRocket key features

Session replay for developers. Pixel-perfect replay with network, console, and Redux state stitched into the timeline. Useful when a bug reproduces only with specific state.

Error tracking and issue management. Groups frontend errors by impact so engineering can prioritize what actually hurts revenue.

Frontend performance monitoring. Core Web Vitals, slow API calls, long tasks, tied back to the session replay where they happened.

LogRocket pricing

LogRocket publishes two self-serve paid tiers plus a free plan and a custom Enterprise quote:

- Free: 1,000 sessions/month, limited retention

- Team: $99/month

- Professional: $460/month

- Enterprise: custom

Pros: best-in-class frontend replay on web, Redux and GraphQL integrations, strong error grouping.

Cons: mobile SDK is a second-class citizen, onboarding curve is real for teams coming from pure APM tools, and costs climb steeply once you enable full-session retention on a consumer product.

What is Sentry?

Sentry is an open-source application monitoring platform founded in 2008. It started as error tracking and has expanded into APM, session replay (via rrweb), profiling, and release health. Sentry is used by around 4 million developers across 90,000+ organizations.

Install the SDK, get exception alerts with full stack traces, device context, and release version. Sentry integrates natively with GitHub, Jira, Slack, and most CI systems.

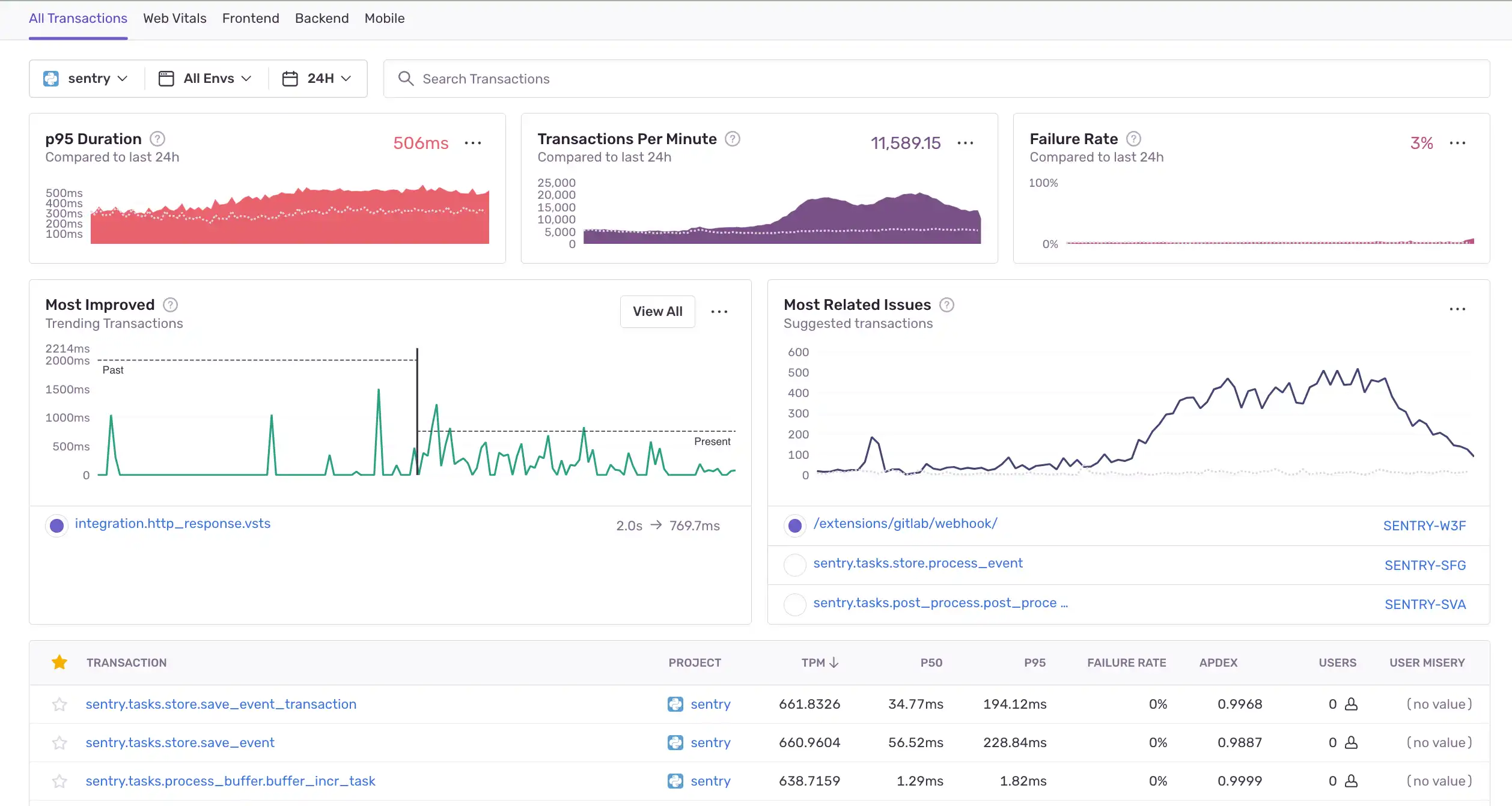

Sentry key features

Full-stack error monitoring. Catches exceptions across backend (Python, Node, Go, Rust, PHP, Ruby), frontend (JS, React, Vue), and mobile (iOS, Android, React Native, Flutter) with a single unified view.

Application performance monitoring. Distributed tracing, transaction spans, slow query detection, and N+1 query flags.

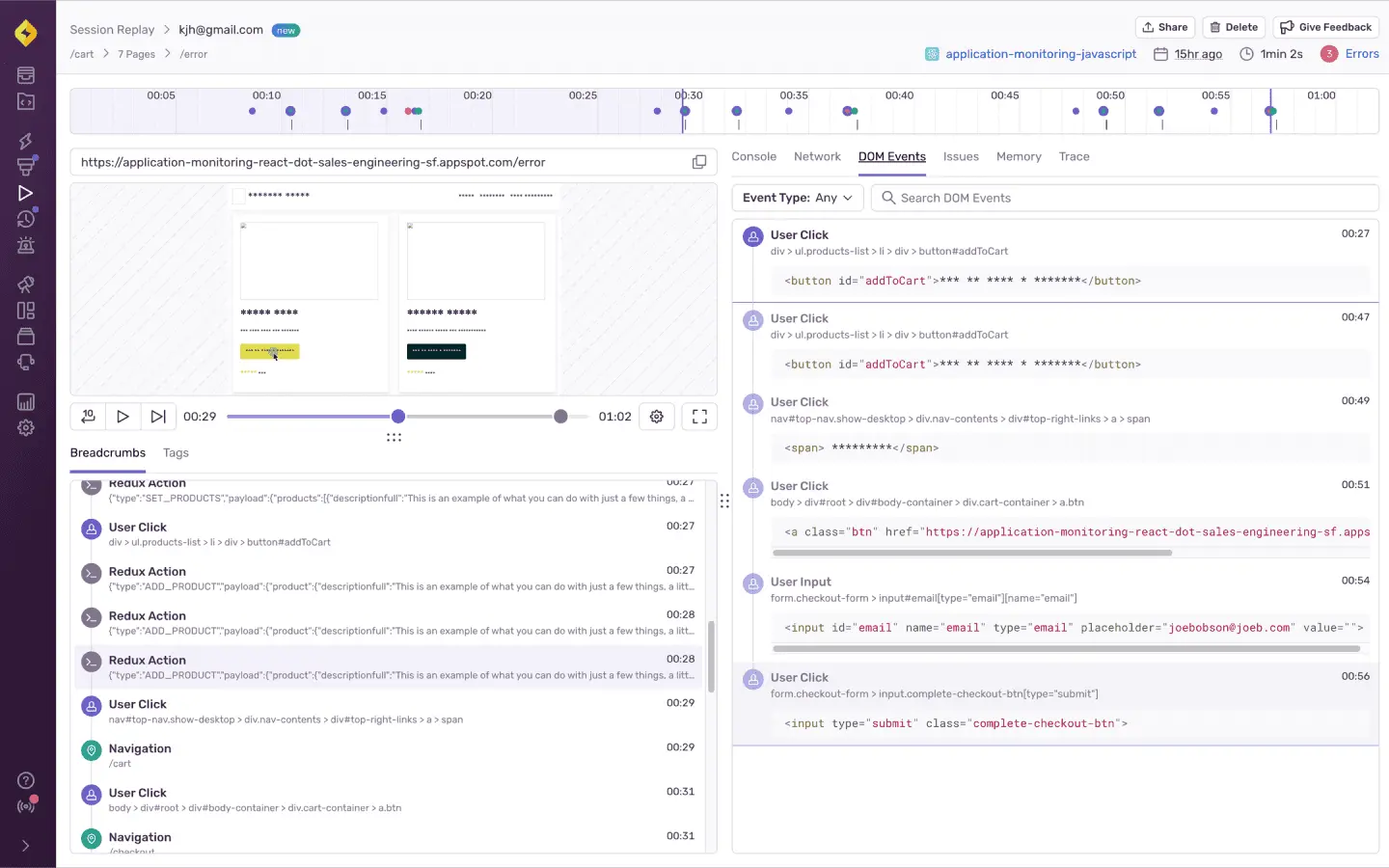

Session replay. Added in 2022 using rrweb. Replays are tied to error events so you see the 30 seconds before a crash or exception.

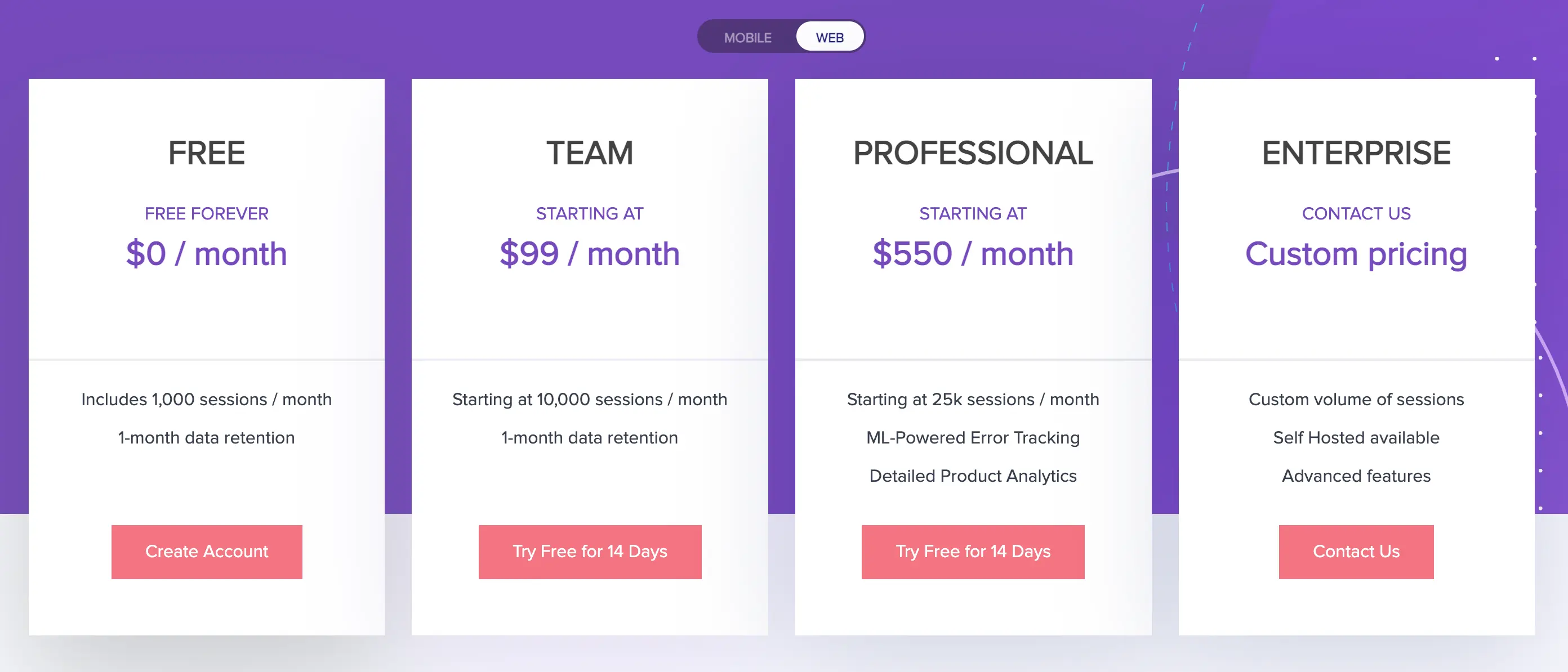

Sentry pricing explained

This is the section most teams underweight until the invoice lands. Sentry pricing in 2026 looks like this (current pricing page):

| Plan | Monthly price | Errors included | Replays included | Spans included |
|---|---|---|---|---|
| Developer | $0 | 5,000 | 50 | 10M |
| Team | $26 | 50,000 | 500 | 10M |
| Business | $80 | 50,000 | 500 | 10M |
| Enterprise | Custom | Negotiated | Negotiated | Negotiated |

What Sentry pricing actually costs at scale

The published numbers are base prices. Sentry uses a pay-as-you-go (PAYG) model: each plan includes a reserved volume, and usage beyond it is billed at overage rates, up to a monthly PAYG budget you set. Real-world bills usually come from four places:

Error events above quota. Additional errors are roughly $0.00029 each at the Team tier, dropping slightly at Business.

Replays above 500/month. Extra replays are billed per unit and a noisy consumer app hits this limit in days, not weeks.

Spans for performance monitoring. A single user session can produce dozens of spans. Teams regularly blow past the 10M base allotment.

Attachments and profiling. Enabled by default on many SDKs, billed separately.

A Series B SaaS I audited last quarter was paying $2,400/month on a Business plan that started at $80, driven almost entirely by replay and span overages. The fix was capping PAYG, not a cheaper plan.

The Codecov add-on (acquired by Sentry in 2022) is another $29/month if you want pre-deployment test coverage.

Verdict on Sentry pricing: transparent on the surface, expensive in practice once you turn on replay and tracing. Budget 2-4x the headline number for any app with real traffic.
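
A rough way to sanity-check that multiplier is to model the bill yourself. The sketch below uses the Team plan numbers from the table and the approximate $0.00029 per-error overage quoted above. The replay and span overage rates are placeholders I made up for illustration, since this article does not state them; swap in the figures from your own invoice. The `Plan` class and `monthly_cost` function are hypothetical names, not part of any Sentry tooling.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder rates, NOT published prices: replace with your invoice figures.
HYPOTHETICAL_REPLAY_RATE = 0.003
HYPOTHETICAL_SPAN_RATE = 0.000002


@dataclass
class Plan:
    base_price: float       # monthly base price in USD
    included_errors: int
    included_replays: int
    included_spans: int
    error_overage: float    # USD per error above quota
    replay_overage: float   # USD per replay above quota
    span_overage: float     # USD per span above quota


def monthly_cost(plan: Plan, errors: int, replays: int, spans: int,
                 payg_cap: Optional[float] = None) -> float:
    """Base price plus metered overages, optionally limited by a PAYG cap."""
    overage = (
        max(0, errors - plan.included_errors) * plan.error_overage
        + max(0, replays - plan.included_replays) * plan.replay_overage
        + max(0, spans - plan.included_spans) * plan.span_overage
    )
    if payg_cap is not None:
        # A cap limits overage spend; it does not reduce the base price.
        overage = min(overage, payg_cap)
    return plan.base_price + overage


team = Plan(
    base_price=26,
    included_errors=50_000,
    included_replays=500,
    included_spans=10_000_000,
    error_overage=0.00029,
    replay_overage=HYPOTHETICAL_REPLAY_RATE,
    span_overage=HYPOTHETICAL_SPAN_RATE,
)

# 150K errors in a month, replays and spans within quota.
print(round(monthly_cost(team, errors=150_000, replays=500, spans=10_000_000), 2))
# 1M errors in a month with overage spend capped at $100.
print(round(monthly_cost(team, 1_000_000, 500, 10_000_000, payg_cap=100), 2))
```

Running the model at 3x your measured volume, with and without a cap, shows quickly whether the headline price or the overage lines will dominate the invoice.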

Sentry pros and cons

Pros: open source, deep stack-trace quality, strong release health and CI integration, easiest install in the category.

Cons: UX-level insight is thin compared to replay-first tools, and pricing scales with volume in ways that punish growth.

Key differences between LogRocket and Sentry

Approach to error management

LogRocket sits in the "user feedback and frontend experience" lane. It grades errors by user impact, catches rage clicks and dead clicks, and uses machine learning to cluster noisy frontend exceptions. Sentry sits in the "exception monitoring and APM" lane. It excels at stack traces, fingerprinting, and release-gated alerts across the entire stack.

If you need to answer "why did this user rage-click?", LogRocket wins. If you need "which deploy introduced this null pointer and who owns the service?", Sentry wins.

Source code and architecture

LogRocket is proprietary, hosted on Google Cloud, and uses the MutationObserver API for session capture. Sentry is open source on GitHub and can be self-hosted if you want to run it on your own infra, which changes the cost picture entirely but adds operational overhead.

Data scope and privacy

LogRocket's default capture is aggressive. It records almost everything by default, and the company documents its GDPR and SOC 2 compliance to reassure buyers. Sentry's default data scope is narrower (errors, not continuous sessions), and it scrubs sensitive data before it hits the ingest pipeline.

Technical footprint

LogRocket:

- JavaScript SDK with MutationObserver-based DOM capture

- 50+ integrations (Intercom, Segment, Jira, etc.)

- Works on any modern browser, Cordova/HTML5 hybrid apps, limited native mobile

Sentry:

- 100+ SDKs across languages and frameworks

- rrweb-based replay tied to error events

- Inbound filters for bots, extension noise, legacy browsers

- Segment and Amazon SQS forwarding for data pipelines

15 Patterns When Choosing LogRocket vs Sentry

After enough evaluations, certain patterns repeat. Here are the ones I see most often when teams pick, combine, or migrate between these tools.

1. Double-paying for session replay

Teams add LogRocket for UX debugging, then turn on Sentry Replay for error context, and pay twice for the same capability. Pick one replay source of truth. If most replay use happens in engineering triage, lean on Sentry. If product and design consume replay daily, lean on LogRocket or a dedicated replay tool.

2. Ignoring span volume on mobile

A single mobile session can produce 30 to 80 performance spans once you instrument navigation, API calls, and custom transactions. Teams assume the 10M span allotment is generous, then hit it in week two. Audit your SDK sampling rates before production rollout.
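
The arithmetic is worth running before rollout. This is a minimal sketch using the 30 to 80 spans per session and the 10M allotment mentioned above; the function names and the 500,000-session example are hypothetical.

```python
def sessions_until_span_quota(span_quota: int, spans_per_session: int) -> int:
    """How many fully traced sessions fit inside the span allotment."""
    return span_quota // spans_per_session


def max_sample_rate(span_quota: int, sessions: int, spans_per_session: int) -> float:
    """Highest trace sample rate that keeps expected span volume within quota."""
    expected_spans = sessions * spans_per_session
    if expected_spans <= 0:
        return 1.0
    return min(1.0, span_quota / expected_spans)


SPAN_QUOTA = 10_000_000

# Sessions the quota covers at the low and high end of spans per session.
print(sessions_until_span_quota(SPAN_QUOTA, 30))
print(sessions_until_span_quota(SPAN_QUOTA, 80))

# Hypothetical app with 500,000 monthly sessions at 80 spans each.
print(max_sample_rate(SPAN_QUOTA, sessions=500_000, spans_per_session=80))
```

If the rate that comes out is well below 1.0, set the SDK's trace sampling accordingly before launch rather than after the first overage.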

3. Forgetting inbound filters

Bots, browser extensions, and legacy IE fragments generate a huge share of errors. Sentry's inbound filters drop them before ingest. LogRocket has similar controls. Teams that skip this step often pay overages on noise nobody will ever triage.

4. Confusing APM with product analytics

Sentry's APM answers "is the backend slow?" It does not answer "are users converting?" Product analytics platforms like Mixpanel, Amplitude, or UXCam answer conversion questions. Don't expect either tool to replace that layer.

5. Over-capturing PII

LogRocket's default is to record the DOM, which means form values, emails, and payment fields unless you explicitly mask them. Review the LogRocket privacy docs before a compliance team finds it first. UXCam masks PII by default.

6. Self-hosting Sentry without budgeting ops time

Sentry is open source and self-hostable, which looks free. The self-hosted install needs Postgres, Redis, Kafka, ClickHouse, and regular upgrades. A half-time SRE costs more than the SaaS bill for most teams under 100 engineers.

7. Skipping source map uploads

Minified stack traces in Sentry are useless. Wire source maps into your CI pipeline on day one or engineers will bounce off the tool within a sprint.

8. Not linking Sentry to deploys

Release health is one of Sentry's best features, and it only works if your CI tags releases with commit SHAs. Teams that skip this lose regression attribution.

9. Treating mobile crashes and mobile UX as the same problem

A crash is a stack trace. A UX issue is a user tapping five times on a dead button then uninstalling. Sentry handles the first, not the second. Pair it with mobile session replay to cover both.

10. Buying before instrumenting a pilot

Both tools offer generous free tiers. Instrument one product surface for two weeks, measure actual event volume, then pick a plan. Teams that buy based on the sales-quoted plan routinely overpay by 40% or under-provision and hit overages.

11. Overlooking rage and dead clicks in Sentry

Sentry introduced user frustration signals (rage clicks, dead clicks, slow clicks) in 2023. If you adopted Sentry before then and have not reviewed recently, you may be under-using what you already pay for.

12. Missing the integration with Codecov

Codecov now pipes uncovered lines into Sentry issue pages. If your team owns both, enable it. It changes triage by showing whether the failing path was tested before release.

13. Not measuring time-to-insight

The real metric is not dashboard count, it is how quickly a support ticket turns into a fix. Time three support tickets through each tool end-to-end during evaluation. The winner will be obvious.

14. Treating open source as free

Sentry's license (the Functional Source License, which succeeded the BSL it adopted in 2019) restricts commercial resale and competing hosted use. Read the license before assuming "open source" means "no-strings-attached." For many organizations the SaaS version is the only practical path.

15. Over-indexing on G2 scores

Both tools sit in the high 4s on G2. The delta is noise. Reviews skew toward happy early adopters and miss the enterprise fatigue that builds at year two. Talk to reference customers at your scale rather than just reading review tiles.

Industry-specific considerations

The right tool stack depends heavily on what kind of product you ship. Below are the patterns I see across six verticals.

Fintech and banking

Regulators care about PII exposure and session data residency. Sentry supports EU data residency and LogRocket offers EU hosting on Enterprise tiers. For mobile banking apps, crash reporting has to be paired with gesture-level replay to debug multi-step flows like KYC, money transfer, and card provisioning. Recora's 142% support ticket reduction is a typical fintech pattern: the issue was not a crash, it was a UI affordance users misread. Add a consent layer such as OneTrust or Cookiebot before enabling replay in regulated markets.

Ecommerce and retail

Funnel drop-off at checkout is the dominant problem. Sentry will tell you if the payment API threw. It will not tell you that 30% of users abandon on the shipping address step because the country dropdown is truncated on small phones. Pair error monitoring with funnel analytics and replay. Costa Coffee's 15% registration lift came from this kind of diagnosis. The Baymard Institute puts average cart abandonment near 70%, and most of that is UX, not infrastructure.

Health and wellness

HIPAA-adjacent apps need strict masking and a signed BAA. LogRocket's default capture is risky here. Sentry's narrower default scope is safer, but neither replaces a dedicated consent and masking layer. Inspire Fitness grew time-in-app 460% by using heatmap and replay evidence to rebuild onboarding, a workflow that requires a tool with native mobile gesture capture. Review HHS guidance on tracking technologies before enabling any replay on authenticated pages.

SaaS and B2B web apps

This is LogRocket's home turf. Redux state replay, GraphQL inspector, and session-scoped console logs pay for themselves when a CSM needs to show engineering exactly what a trial user saw. Pair with Sentry for backend exceptions and you have most of what a typical B2B stack needs, as long as you are mostly web-first. Integrations with Intercom and Zendesk let support paste replay URLs directly into tickets.

Media, streaming, and gaming

Session volume is the enemy here. Millions of sessions per month make full-replay tools financially untenable. Sample aggressively (1-5%) and lean harder on error monitoring plus targeted session replay for specific user cohorts. UXCam's screen tagging helps focus capture on high-value screens rather than everything. For gaming specifically, crash-free session rate is the metric that matters, and Firebase Crashlytics plus Sentry typically cover it.

Travel and marketplaces

Cross-platform parity matters. A user books on mobile and checks in on web. A tool stack that sees only half of that journey misses the actual friction points. Housing.com's 20 to 40% feature adoption lift came from funnel analytics that stitched both surfaces together. Identity stitching across anonymous and authenticated sessions is the harder problem here, and Segment or RudderStack is usually the glue.

Tools by category

Picking between LogRocket and Sentry is rarely the whole question. Here is how the broader category maps out.

Error monitoring and APM. Sentry, Datadog, New Relic, Bugsnag, Rollbar, Raygun, and Honeybadger. Sentry leads on developer experience and open source. Datadog wins in large enterprises already on its platform.

Web session replay. LogRocket, FullStory, Hotjar, Microsoft Clarity, PostHog, and Smartlook. Clarity is free and useful for marketing teams. FullStory is the enterprise-grade alternative to LogRocket.

Mobile session replay and product intelligence. UXCam, Mixpanel, Amplitude, Smartlook, and Pendo. UXCam is the only one with mobile-native replay, heatmaps, issue analytics, funnels, retention, and an AI analyst in one platform.

Crash reporting specifically for mobile. Firebase Crashlytics, Instabug, Embrace, and Bugsnag. Crashlytics is free and standard for teams on Firebase. Embrace goes deeper on performance.

Product analytics. Amplitude, Mixpanel, Heap, PostHog, and UXCam. Amplitude and Mixpanel are the incumbents. PostHog is the open-source challenger.

Customer data and identity. Segment, RudderStack, and mParticle. These sit upstream of all the tools above and pay for themselves once you have more than two destinations.

Test coverage and CI. Codecov (Sentry), Coveralls, and SonarQube.

Feature flags and experimentation. LaunchDarkly, Statsig, Split, and PostHog. Release-gated rollouts pair well with Sentry's release health for safe deploys.

Common mistakes teams make

1. Buying on the base price

Both tools advertise low entry prices. The real cost is 2-4x that once you turn on replay, spans, profiling, and attachments. Model cost at 3x projected event volume before signing.

2. Assuming mobile is a checkbox

"Has a mobile SDK" and "designed for mobile" are not the same thing. An SDK that ports web capture patterns to iOS will miss gestures, offline sessions, and UI freezes. Test with a real app for two weeks before committing.

3. Layering four tools when two would do

A common stack I see: Sentry + LogRocket + Mixpanel + Hotjar. That is four tools and overlapping replay. Audit overlaps quarterly. Most teams can consolidate to two platforms without losing signal.

4. Skipping data governance until a lawyer asks

Masking, PII scrubbing, data retention, and DPAs are boring until they are urgent. Configure masking on day one using LogRocket's privacy controls or Sentry's data scrubbers.
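
As an illustration of what a masking rule does, here is a generic sketch that scrubs a payload before it leaves your app. It is not LogRocket's or Sentry's API: the `scrub` function, the key list, and the regular expressions are all assumptions, and real deployments should rely on each vendor's documented controls.

```python
import re

# Simple patterns for illustration; production rules need broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")  # 13-19 digits, optional separators

# Hypothetical field names whose values are always masked.
SENSITIVE_KEYS = {"password", "token", "card_number", "ssn"}


def scrub(value, key=None):
    """Recursively mask sensitive keys and PII-looking strings in a payload."""
    if isinstance(key, str) and key.lower() in SENSITIVE_KEYS:
        return "[masked]"
    if isinstance(value, dict):
        return {k: scrub(v, k) for k, v in value.items()}
    if isinstance(value, list):
        return [scrub(v) for v in value]
    if isinstance(value, str):
        value = EMAIL_RE.sub("[email]", value)
        return CARD_RE.sub("[card]", value)
    return value


payload = {
    "user": {"email": "ada@example.com", "password": "hunter2"},
    "note": "card 4111 1111 1111 1111 declined",
    "items": ["ok", "mail bob@example.org"],
}
print(scrub(payload))
```

Masking by key name catches structured fields, while the patterns catch values that leak into free text such as log messages, which is where most accidental PII capture happens.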

5. Not defining who owns which tool

Sentry is usually owned by engineering. LogRocket often sits in a gray zone between product, support, and engineering. Without a clear owner, nobody maintains sampling rules, integrations, or alert thresholds.

6. Alert fatigue from day one

Both tools let you alert on every new error. Do not. Start with P0 crashes and release regressions, add more only after triage latency proves you can handle them. Chronic alert fatigue is why good tools get abandoned.

7. Ignoring session sampling

Full capture is rarely necessary. A 10-25% sample plus 100% capture of error sessions usually delivers the same insight at a fraction of the cost. Both tools support dynamic sampling.
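
The "sample plus all error sessions" rule can be sketched in a few lines. This is a generic illustration, not either vendor's implementation: `should_keep_session` is a hypothetical helper, and it assumes the decision is made once you know whether the session hit an error (real SDKs typically buffer and decide when an error occurs).

```python
import hashlib


def should_keep_session(session_id: str, had_error: bool, sample_rate: float) -> bool:
    """Keep every error session, plus a stable fraction of the rest."""
    if had_error:
        return True
    # Hash the ID so the same session always gets the same decision.
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return bucket < sample_rate


# Roughly 20% of error-free sessions are kept at a 0.2 sample rate.
kept = sum(should_keep_session(f"session-{i}", False, 0.2) for i in range(10_000))
print(kept / 10_000)
```

Hashing the session ID instead of calling a random number generator makes the decision reproducible, so a session is either captured everywhere or nowhere.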

8. Treating session replay as QA tape

Session replay is not a compliance recording. It is a diagnostic tool. Watching every session is a waste. Filter by rage clicks, errors, or specific funnel steps and watch only those.

9. Forgetting to measure what improved

Install a tool, ship fixes, never measure whether conversion or retention actually moved. Pick two metrics before rollout (e.g., crash-free sessions, funnel completion) and review monthly.
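
Both example metrics are simple ratios, which is part of why they make good baselines. A minimal sketch, with hypothetical function names and made-up counts:

```python
def crash_free_session_rate(total_sessions: int, crashed_sessions: int) -> float:
    """Share of sessions that ended without a crash."""
    if total_sessions <= 0:
        raise ValueError("total_sessions must be positive")
    return 1 - crashed_sessions / total_sessions


def funnel_completion_rate(step_counts: list[int]) -> float:
    """Share of users entering the first step who reach the last one."""
    if not step_counts or step_counts[0] <= 0:
        raise ValueError("funnel needs a positive first-step count")
    return step_counts[-1] / step_counts[0]


def step_drop_offs(step_counts: list[int]) -> list[float]:
    """Fraction of users lost between each pair of consecutive steps."""
    if any(count <= 0 for count in step_counts[:-1]):
        raise ValueError("every step before the last needs a positive count")
    return [1 - after / before for before, after in zip(step_counts, step_counts[1:])]


# Made-up numbers: 10,000 sessions with 50 crashes; a three-step funnel.
print(crash_free_session_rate(10_000, 50))
funnel = [1000, 700, 350]
print(funnel_completion_rate(funnel))
print(step_drop_offs(funnel))
```

Record these values before rollout and again at each monthly review, so any movement can be attributed to the fixes you shipped.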

10. Not considering mobile plus web parity

If your product spans mobile and web, running different tools on each half creates blind spots at the handoff. Unified platforms that cover both, like UXCam, eliminate this.

Observability Maturity Model

Not every team needs every tool on day one. Here is a staged playbook I use when advising teams.

Stage 1: Survival (weeks 0-8). Install one error monitoring tool and one crash reporter. For most teams this is Sentry plus Firebase Crashlytics. Wire source maps, releases, and Slack alerts. Define a P0 versus P1 rubric for crash severity so on-call has a decision tree. Goal: no production crash goes unseen for more than an hour, and the mean time to acknowledge stays under 15 minutes.

Stage 2: Diagnosis (months 2-4). Add session replay for the highest-friction surface. If you are web-first, that means LogRocket or similar. If mobile, that means UXCam. Start linking replay URLs into support tickets and bug reports, and measure the reduction in back-and-forth between support and engineering. Goal: cut average bug reproduction time by 50%.

Stage 3: Product intelligence (months 4-9). Layer in funnel analytics, retention cohorts, and heatmaps. At this stage, the question shifts from "what broke?" to "why are users churning?" Tara AI or a similar analyst layer starts paying for itself here by surfacing patterns across thousands of sessions without requiring an analyst to scrub video. Define the three metrics you will review weekly: activation rate, week-four retention, and top friction event.

Stage 4: Optimization (month 9+). Experimentation, A/B testing, predictive churn, and cross-platform journey analytics. Feature flagging via LaunchDarkly or Statsig typically joins the stack here, paired with Sentry release health for safe rollbacks. Most teams never reach this stage cleanly because they skipped governance at Stage 1. Revisit data masking, sampling, and ownership before scaling.

The common failure mode is jumping to Stage 3 without Stage 1. You cannot analyze funnels reliably if half your production errors silently break the funnel. Fix the floor before decorating the ceiling.

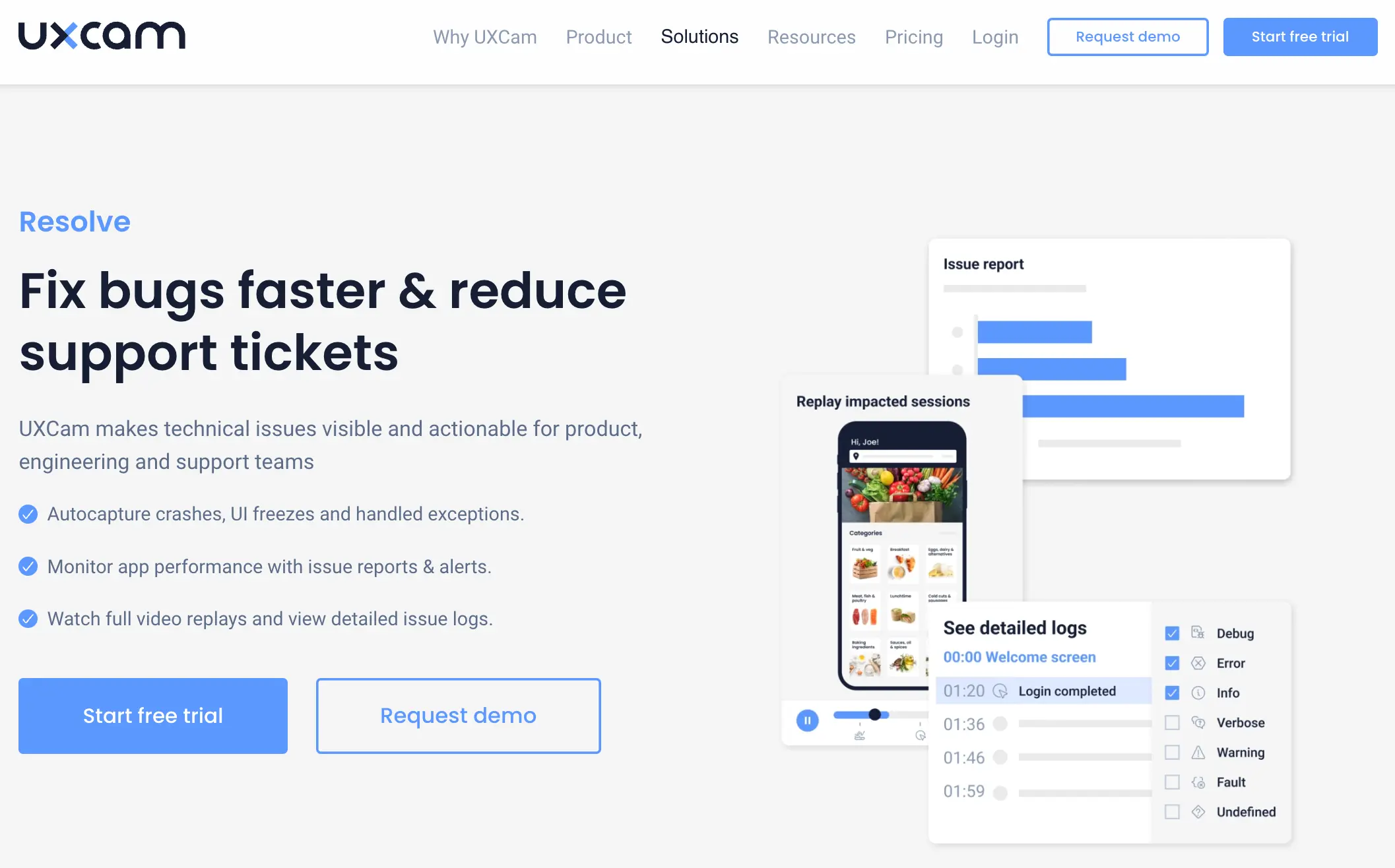

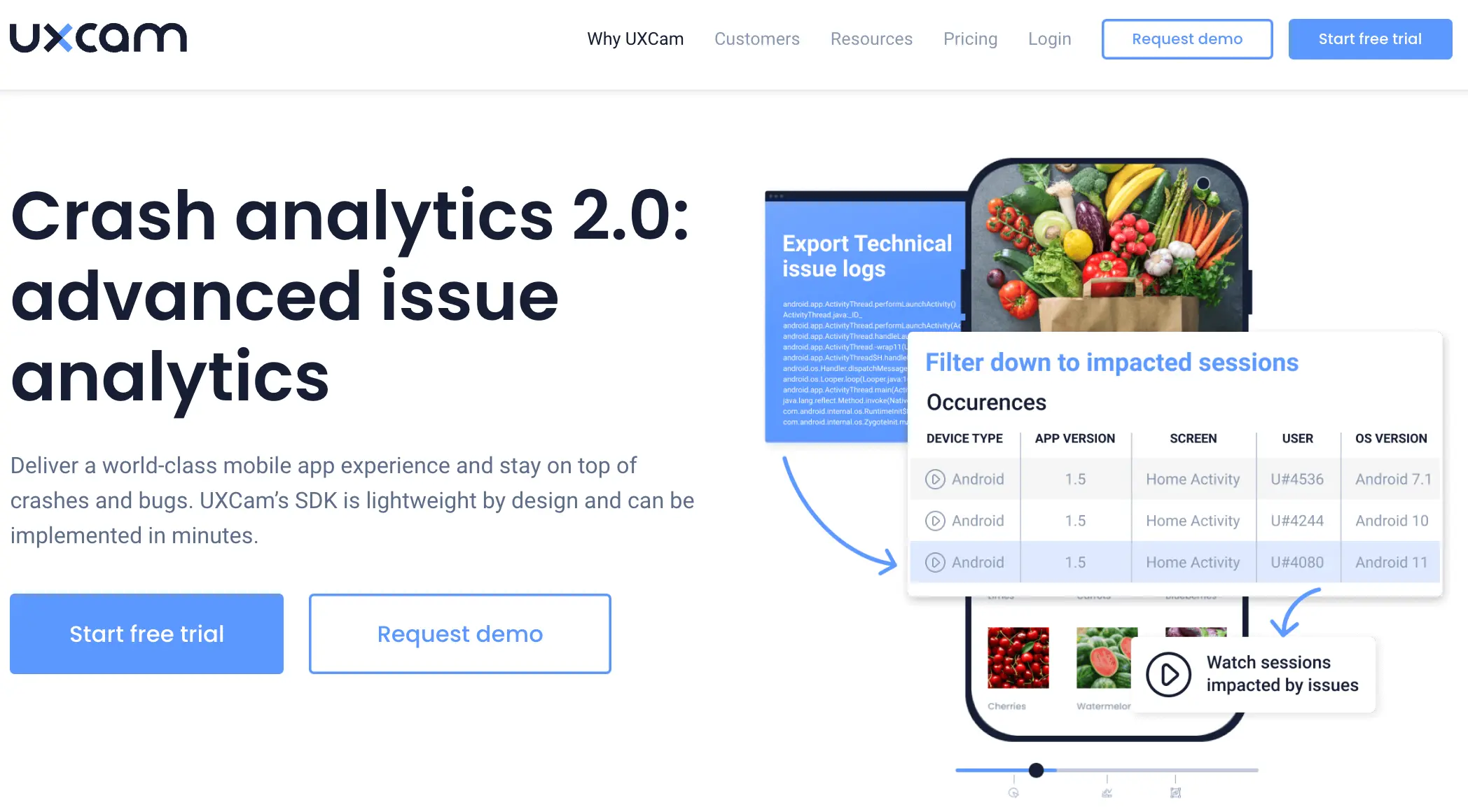

Where Both Tools Fall Short for Mobile

Both tools were born on the web. Their mobile SDKs exist, but neither was designed from the ground up for the mobile app experience, and that shows in three places:

No gesture-level heatmaps. Neither product shows you where users actually tap, scroll, and press-hold on a native screen.

Weak issue analytics for mobile UX. Rage taps, UI freezes (not crashes, but frozen frames), and navigation loops are surfaced poorly or not at all.

No unified retention and funnel analysis. You still need a separate product analytics tool alongside them.

That is the gap teams keep hiring a third tool to fill. Which brings us to the alternative.

The alternative: UXCam for mobile apps and the web

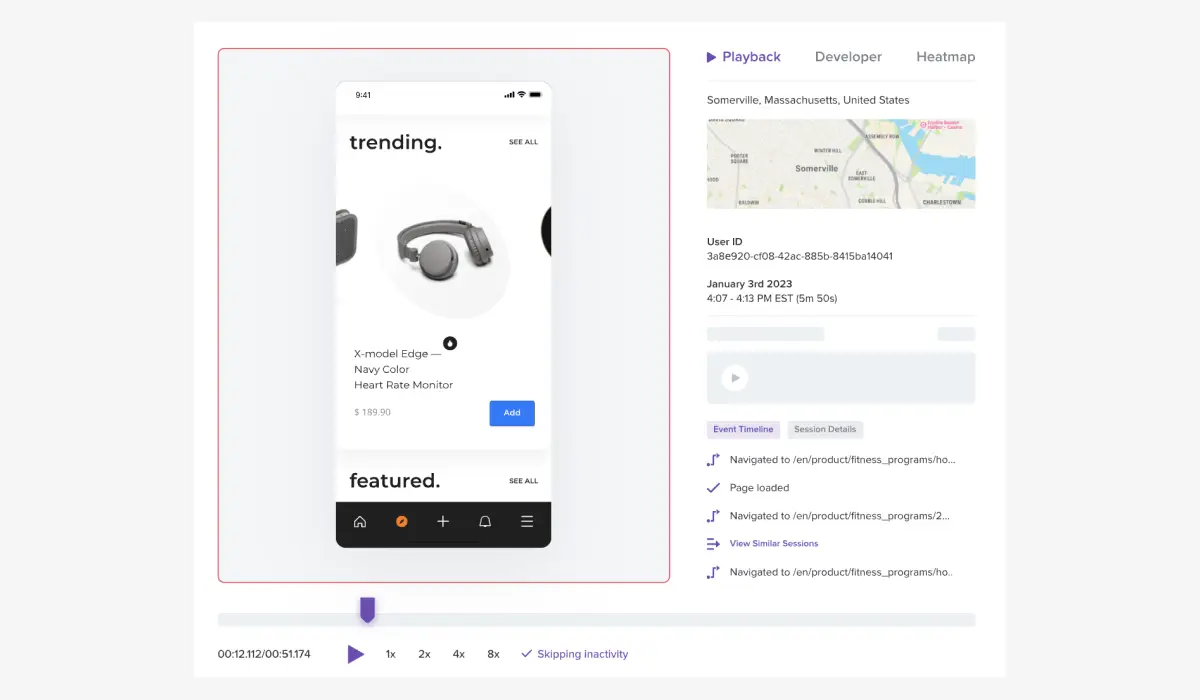

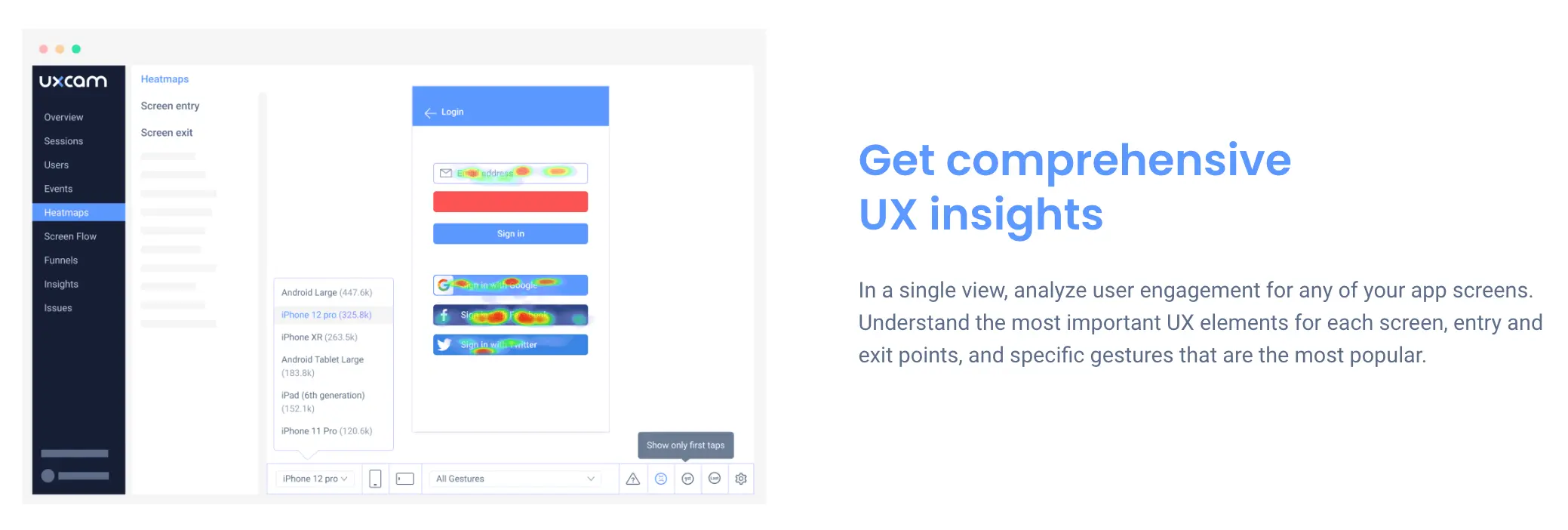

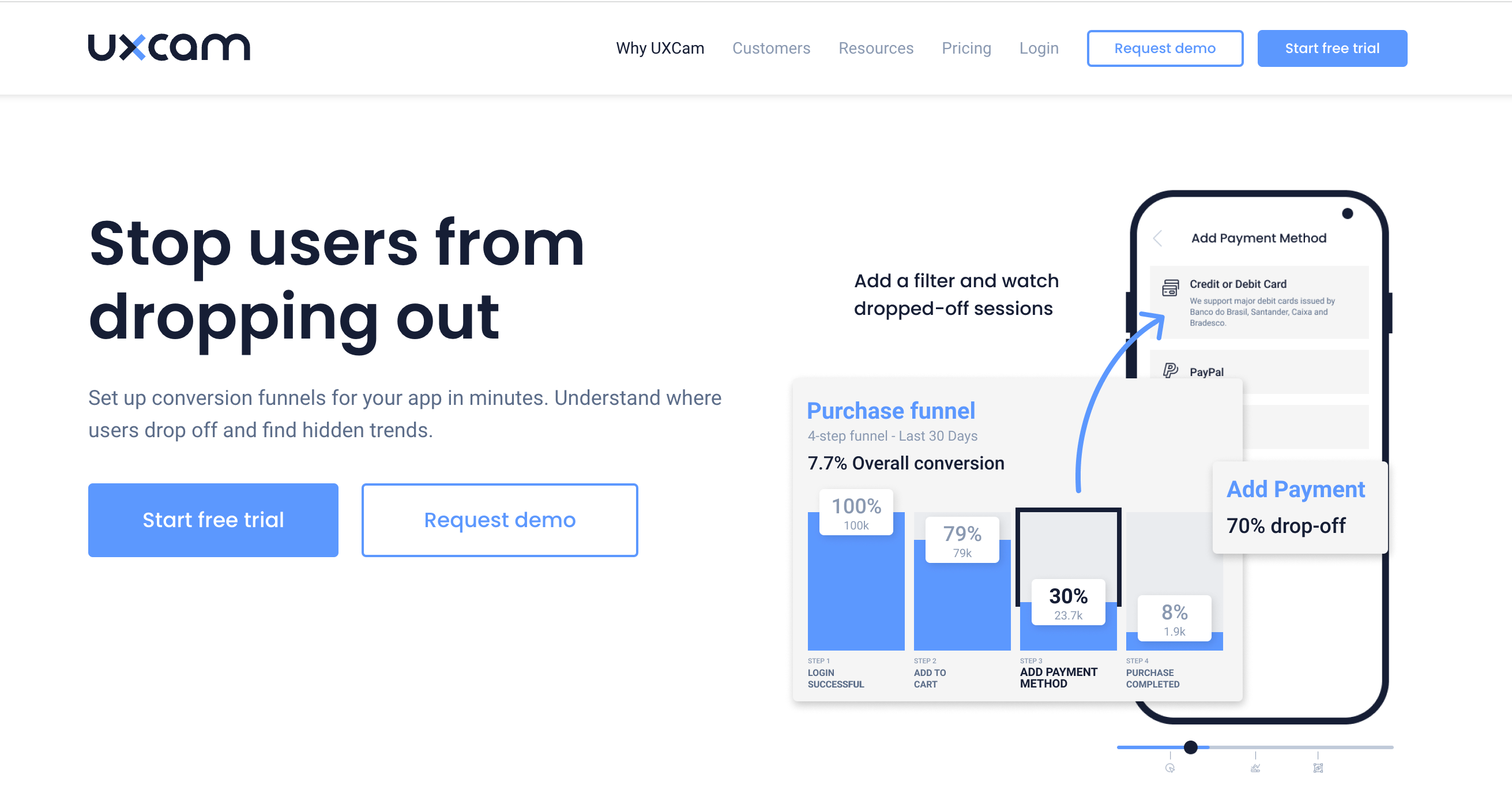

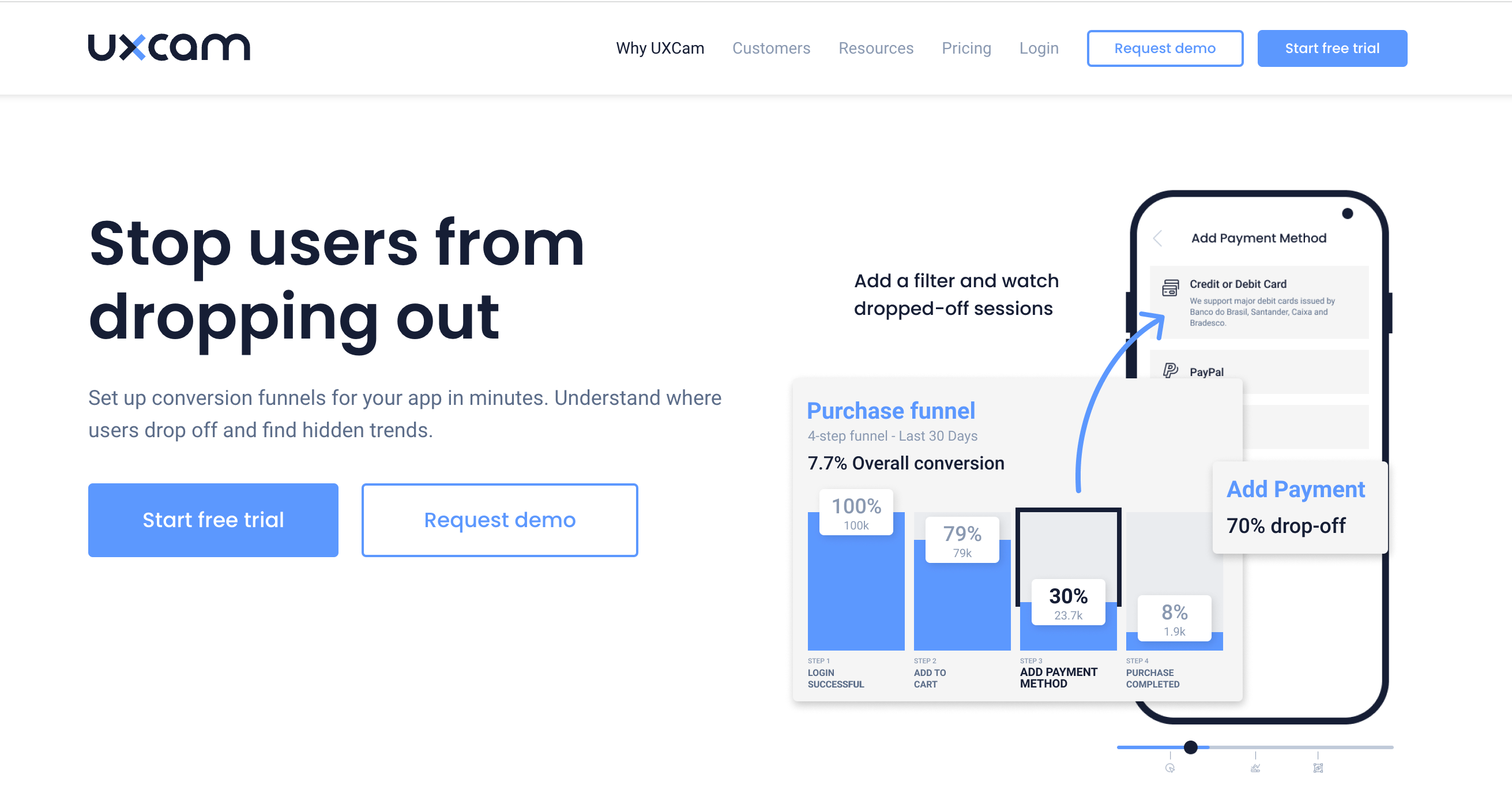

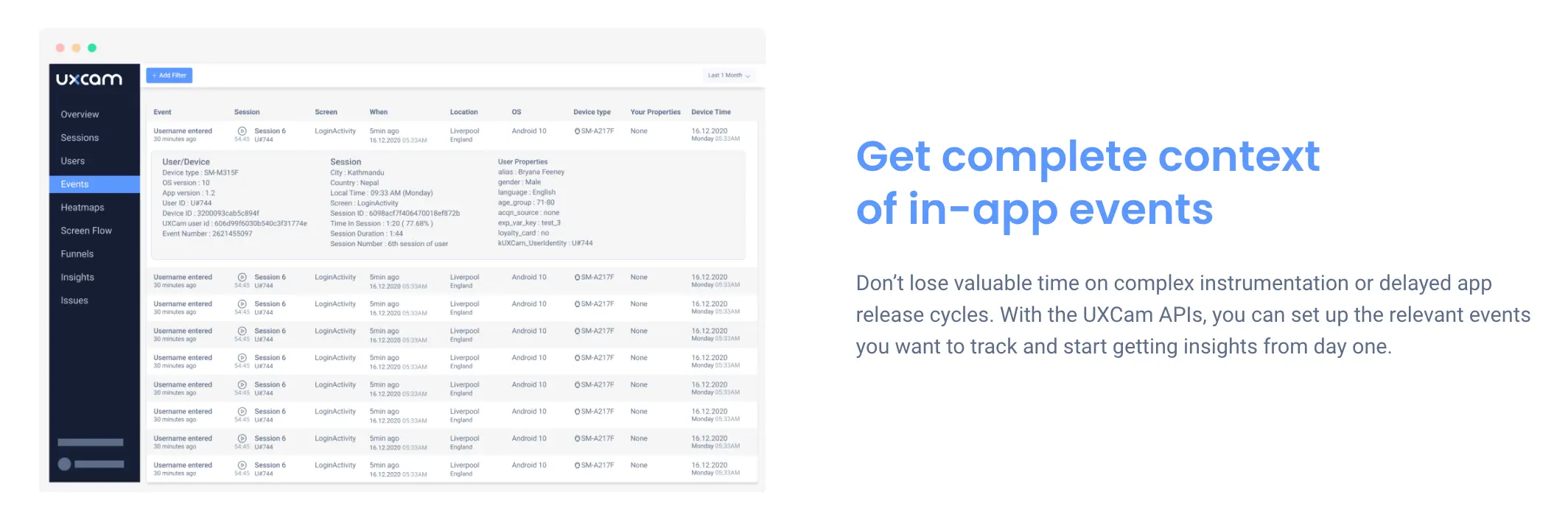

UXCam is a product intelligence and product analytics platform built to cover mobile apps and the web. It combines session replay, heatmaps, issue analytics, funnels, and retention analytics in one place, with Tara AI as the AI analyst that processes sessions and tells you what to fix next.

It is installed in 37,000+ products and rated 4.7 on G2.

What UXCam does that LogRocket and Sentry cannot

Mobile-native session replay. Every tap, swipe, and pinch captured with PII masking by default. Works offline and syncs later.

Heatmaps for every screen. Tap, scroll, and gesture heatmaps out of the box.

Issue analytics. Rage taps, UI freezes, and unresponsive gestures surfaced automatically, with replay evidence attached.

Funnel analytics. Find the exact step where users drop off and replay the sessions behind the drop.

Embedded event analytics. Track in-app events without re-instrumenting for every new question.

Outcomes from real UXCam customers

Recora reduced support tickets by 142% after UXCam surfaced that users were pressing and holding a button designed for a single tap.

Inspire Fitness grew time-in-app by 460% and cut rage taps by 56% after rebuilding key screens with heatmap and replay evidence.

Housing.com lifted feature adoption from 20% to 40% using funnel analytics to find and fix friction.

Costa Coffee increased registrations by 15% after identifying a 30% drop between app install and loyalty sign-up.

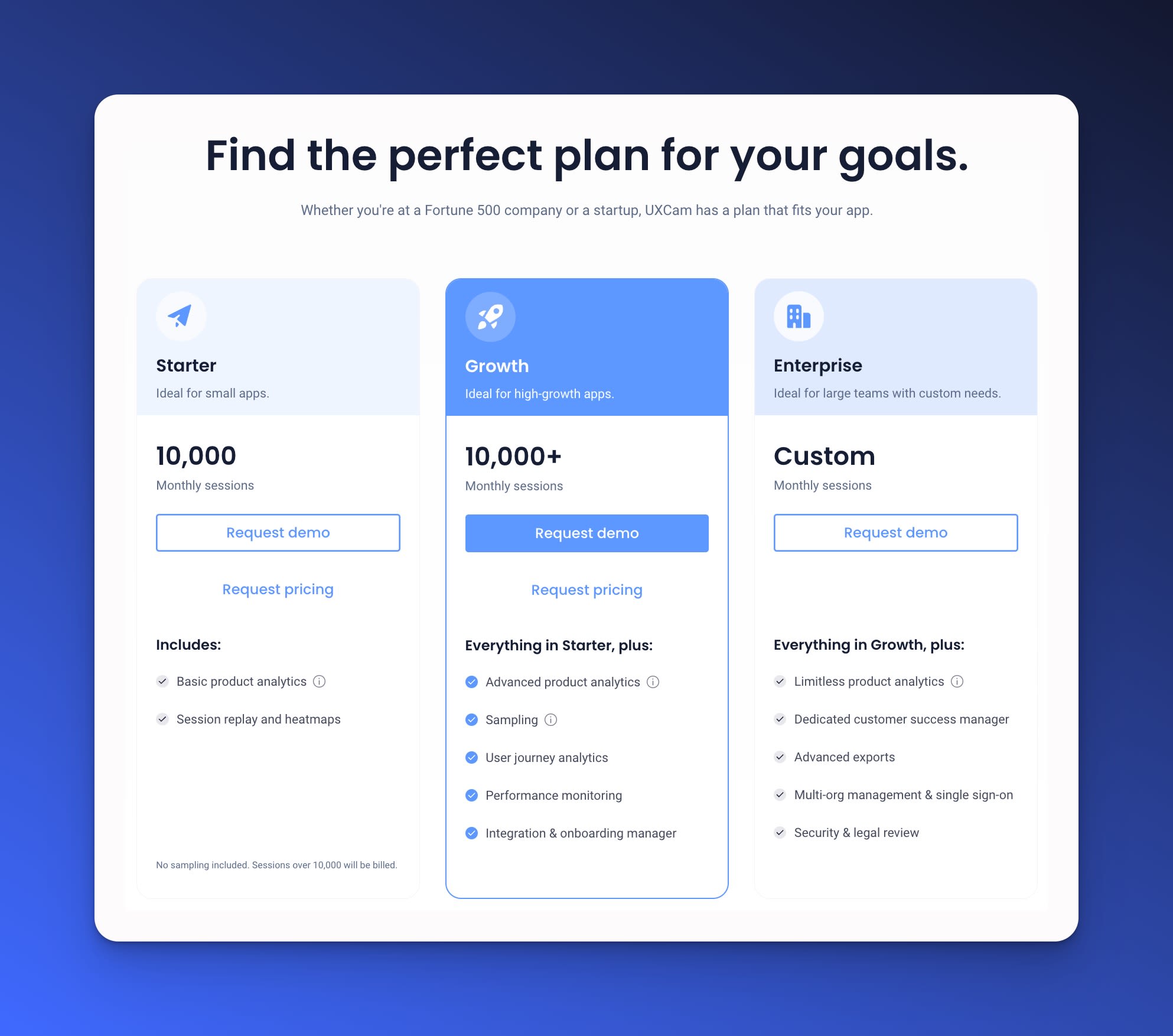

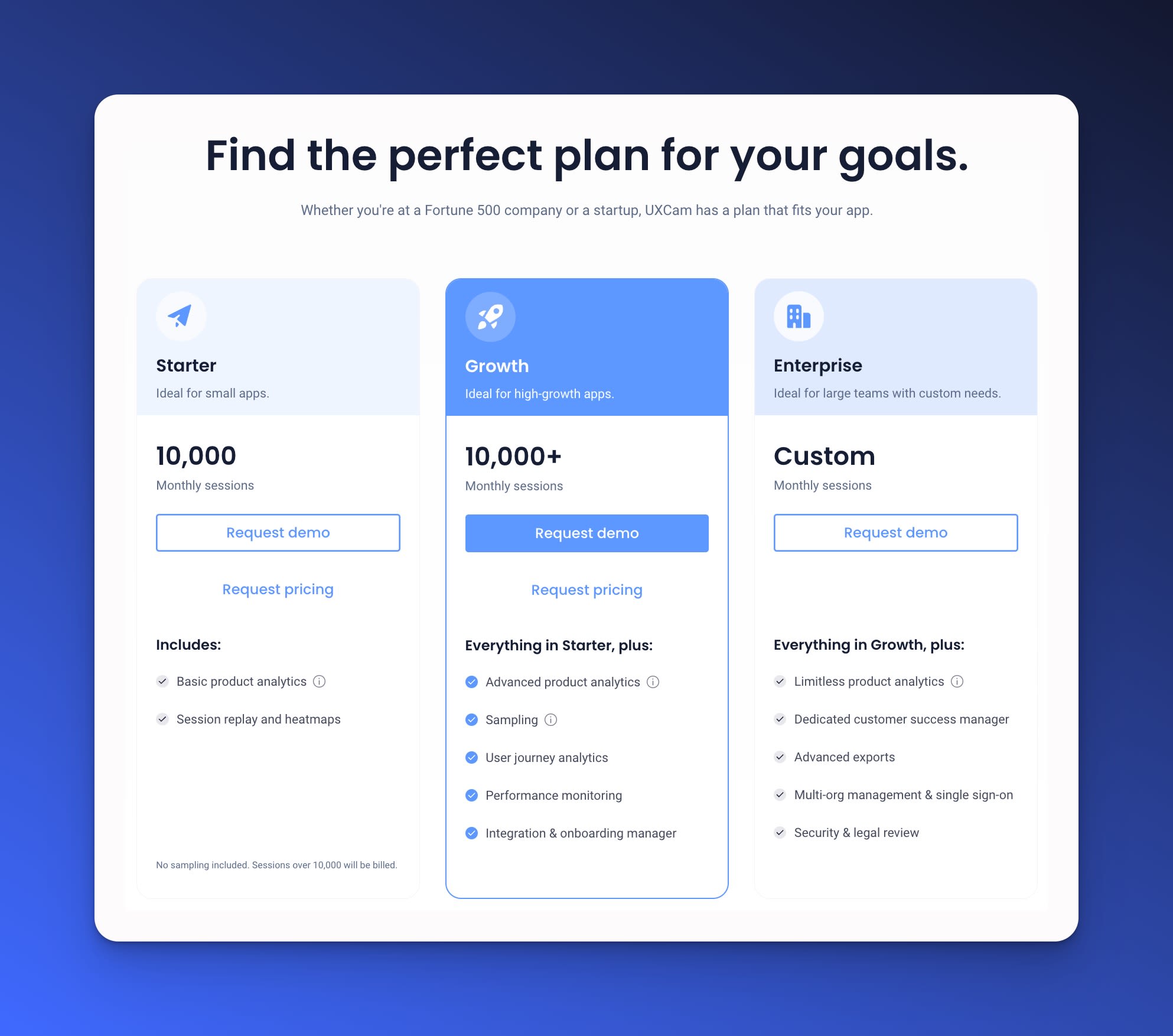

UXCam pricing

UXCam uses usage-based pricing tailored to your session volume and team size, with a free trial and no credit card required to start. Talk to the team for a quote calibrated to your app.

Which tool should you pick?

Pick Sentry if your primary problem is backend exceptions, release health, and giving engineers fast stack traces. Budget 2-4x the base price once you turn on replay and APM.

Pick LogRocket if you ship a web-heavy or Redux-heavy frontend and want replay tied to state. Expect pricing to climb fast at scale.

Pick UXCam if you ship a mobile app or a mobile plus web product and want product intelligence, not just error logs. You get session replay, heatmaps, issue analytics, funnels, retention, and an AI analyst in one platform.

Many teams run Sentry for crashes and UXCam for everything product-related. That combination covers more ground than LogRocket + Sentry and usually costs less.

AI Session Analysis Alongside Both Tools

Sentry tells you an error occurred. LogRocket shows you the session that caused it. Neither tool tells you which of the hundred friction patterns surfacing this week is most worth your engineering hours.

Tara AI inside UXCam is the layer that ranks the queue. It reads sessions across mobile and web, clusters the friction by impact, and returns ranked recommendations. Teams running Sentry, LogRocket, and UXCam together get the full pipeline: error capture, session evidence, and AI-driven prioritization.

Frequently asked questions

How much does Sentry really cost at scale?

Sentry pricing starts at $26/month for the Team plan and $80/month for Business, but the published price is the floor, not the ceiling. Every plan uses a pay-as-you-go model where errors, replays, spans, attachments, and profiling are metered separately. Teams running session replay on a mid-size consumer product routinely pay $500 to $3,000+ per month once overages kick in. Before committing, set a PAYG cap and model cost at 3x your current event volume to avoid surprises.

Is Sentry or LogRocket better for mobile apps?

Neither is purpose-built for mobile. Sentry has broader native SDK coverage (iOS, Android, React Native, Flutter) and is strong for crash reporting, but its replay and UX features lag on mobile. LogRocket's mobile story is weaker still. For a mobile-native stack, teams typically pair Sentry for crashes with a product intelligence platform like UXCam for session replay, heatmaps, issue analytics, and funnels. That combination usually delivers more insight for less total cost than layering LogRocket on top of Sentry.

Can I use LogRocket and Sentry together?

Yes, and many teams do. LogRocket handles frontend user experience debugging while Sentry covers backend exceptions and APM. The two integrate directly so a Sentry error can link to the LogRocket session where it occurred. The downside is cost: you are paying two platforms with overlapping session replay features, and neither is optimized for mobile. Audit your actual usage after 60 days. Most teams find one tool dominant and the other underused.

Does Sentry have session replay?

Yes. Sentry added session replay in 2022, built on the open-source rrweb library. Replays are tied to error events, so you see roughly 30 seconds of context before a crash or exception. It works well for bug reproduction but is not designed for continuous UX analysis the way dedicated replay tools are. Replay volume is also metered: the Business plan includes 500 replays per month, with overages billed per unit.

What is the best alternative to LogRocket and Sentry for mobile product teams?

For product and engineering teams on mobile and web, UXCam is the strongest alternative. It combines session replay, heatmaps, issue analytics (rage taps, UI freezes), funnels, retention, and an AI analyst (Tara AI) in one platform, installed in 37,000+ products. Customers including Recora, Inspire Fitness, Housing.com, and Costa Coffee have used it to cut support tickets, lift feature adoption, and raise registrations. Unlike Sentry and LogRocket, UXCam was built to cover mobile apps and the web.

How do I reduce my Sentry bill without losing coverage?

Four levers work. First, set an explicit PAYG cap so overages cannot spiral. Second, use inbound filters to drop bot traffic, browser extension errors, and known noisy exceptions before they hit your quota. Third, sample performance transactions rather than capturing 100%. Fourth, audit which SDKs have profiling and attachments enabled by default and disable them where you don't need them. Teams applying all four usually cut Sentry spend by 30-50% with no loss of signal.

Should I self-host Sentry to save money?

Only if you already have SRE capacity. Self-hosted Sentry needs Postgres, Redis, Kafka, and ClickHouse, plus upgrade discipline. For teams under 100 engineers, the total cost of ownership usually exceeds the SaaS bill once you factor in engineering time. For larger teams with data residency requirements, self-hosting can make sense.

Does LogRocket work for React Native and Flutter apps?

LogRocket has a React Native SDK but its mobile capture is less mature than its web capture. Flutter support is limited. Teams shipping native mobile typically find LogRocket underpowered compared to dedicated mobile platforms.

How does Tara AI compare to manual session review?

Manual session review does not scale past a few hundred sessions per week. Tara AI processes every session, clusters similar behaviors, flags anomalies, and surfaces the top issues by user impact. Think of it as a permanent research analyst reading every session so your team can focus on fixing the top three findings instead of scrubbing through videos.

What data governance controls does UXCam offer?

UXCam masks PII by default (text fields, forms, images configurable per screen), supports GDPR and CCPA workflows, and offers data residency options. See the UXCam security page for the full list.

Can I run UXCam alongside Sentry?

Yes. Many teams use Sentry for crash and exception tracking (what broke in the code) and UXCam for product intelligence (what users did before, during, and after). The two do not overlap meaningfully. UXCam replay links can also be attached to Sentry issues for richer triage context.

How long does UXCam take to install?

Most teams ship the SDK in under 30 minutes. First session data appears within minutes of install. Tara AI starts producing actionable findings within 48 to 72 hours once enough sessions have been captured. Start a free trial to see timing on your own app.

Is there a free tier for UXCam?

Yes. UXCam offers a free tier and a free trial with no credit card required. Pricing beyond that is usage-based and calibrated to session volume and seats.

What metrics should I track after installing any of these tools?

Pick two leading indicators before rollout. Common pairs: crash-free session rate plus funnel completion rate, or time to bug resolution plus feature adoption. Review monthly. If neither metric moves within 90 days, the likelier explanation is that the tool is not being used, not that it does not work.

How do I decide between Sentry and Datadog for error monitoring?

Datadog makes sense when you are already paying for its infrastructure and APM products and want a single pane of glass. Sentry wins on developer experience, release health, and price at small to mid scale. A 50-engineer team typically pays less for Sentry than the incremental Datadog error module, and the UI is built for debugging rather than dashboards.

What is the right replay sampling rate?

Start at 100% in staging and 10-25% in production, with 100% capture for sessions that include errors or high-value events (checkout, signup, payment). Review the distribution monthly. If your replay library is 90% read-only sessions with no interactions, sample harder. If rage-click sessions are rare in your library, sample more.

Start a free UXCam trial or browse our customer case studies to see how mobile teams are moving beyond traditional error monitoring.

AUTHOR

Silvanus Alt, PhD

Founder & CEO | UXCam

Silvanus Alt, PhD, is the Co-Founder & CEO of UXCam and an expert in AI-powered product intelligence. Trained at the Max Planck Institute for the Physics of Complex Systems, he built Tara, the AI Product Analyst that not only analyzes user behavior but recommends clear next steps for better products.
