Digital Analytics: The Complete 2026 Guide

TABLE OF CONTENTS

- Key takeaways

- What is digital analytics?

- Digital vs Product vs Web Analytics

- Understanding the four types of digital analytics

- How to implement digital analytics (step-by-step)

- Common pitfalls in digital analytics

- The role of digital analytics in modern business

- Building a data-driven culture

- Future trends in digital analytics

- Improve your digital analytics with UXCam

Digital analytics is the collection, measurement, and analysis of quantitative and qualitative data from digital channels (websites, mobile apps, connected devices, marketing campaigns) used to improve user experience, marketing performance, and business outcomes. Everything else in this guide is a clarification of that definition.

The reason I'm leading with the textbook answer: the term "digital analytics" gets used loosely enough that teams end up arguing about tools when they really have different jobs to do. Web analytics, product analytics, marketing analytics, and customer experience analytics are all subsets of digital analytics, and each uses a different part of the same data. Knowing which part of the pie you're working on saves weeks of confusion.

In this guide I'll walk through the four kinds of digital analytics (descriptive, diagnostic, predictive, prescriptive), the tools I recommend for each, the pitfalls I see teams fall into, and how to actually build a culture that uses the data instead of just collecting it.

Key takeaways

Digital analytics covers four jobs (describing what happened, diagnosing why, predicting what's next, prescribing what to do). Most teams stop at the first two.

The biggest value from digital analytics comes when quantitative data (GA4, Mixpanel, Amplitude) is paired with qualitative signals (session replay, heatmaps, user interviews). Either one alone gives you half the picture.

For most product teams in 2026, the stack is: GA4 for marketing-level analytics, a product analytics tool (Mixpanel, Amplitude, or UXCam) for in-app behavior, a session replay tool for behavioral diagnosis, and your data warehouse for cross-source analysis.

The most common mistake I see: collecting data nobody uses. Every event you track costs engineering time, vendor cost, and analyst attention. Instrument for specific decisions, not "in case we need it later."

AI-powered product intelligence tools like Tara automate much of the diagnostic layer. Instead of manually scrubbing through replays or writing complex funnel queries, you ask a question in natural language and get a ranked list of behavioral patterns with session evidence.

What is digital analytics?

Digital analytics is the collection, measurement, and analysis of data from digital channels to improve business and user outcomes. The channels: websites, mobile apps, connected devices, email, paid media, social, anything with a digital touchpoint. The goal: replace guesswork with informed decisions about what to build, what to change, and where to spend.

The scope is broader than web analytics (which focuses on websites) and narrower than business intelligence (which covers everything from HR to supply chain). In practice, digital analytics is what a product or growth team uses daily to answer questions like "which feature drove this month's retention lift?" or "why did our signup conversion drop on mobile last week?"

Digital vs Product vs Web Analytics

These terms get used interchangeably and the differences matter.

Web analytics focuses on websites: pageviews, bounce rate, session duration, traffic sources, conversion events. The canonical tool is Google Analytics 4. The typical user is a marketer or SEO analyst.

Product analytics focuses on what users do inside a product, web or mobile. Events, funnels, retention cohorts, feature adoption. The canonical tools are Mixpanel, Amplitude, and UXCam. The typical user is a product manager.

Marketing analytics focuses on campaign performance across paid media and CRM. The canonical tools are HubSpot, Salesforce Marketing Cloud, and bespoke BI dashboards. The typical user is a marketing ops person.

Customer experience (CX) analytics focuses on the qualitative and quantitative signals of how users feel: surveys, NPS, session replay, heatmaps, support ticket analysis. The canonical tools are UXCam, Hotjar, and Qualtrics. The typical user is a UX researcher or CX leader.

Digital analytics is the umbrella that covers all four. Most organizations have all four at varying levels of maturity.

Understanding the four types of digital analytics

This is the framework that I lean on whenever a team asks "what should our digital analytics roadmap be?"

1. Descriptive analytics: what happened?

Describes what occurred in the past. Your pageview reports, your monthly active user count, your funnel conversion rates. This is the foundation layer. Every analytics tool does this well.

Example: "We had 12,000 signups in March, down 8% from February."

2. Diagnostic analytics: why did it happen?

Explains the causes behind descriptive metrics. This is where session replay and behavioral analytics earn their keep, because a drop in signups doesn't tell you why. Watching 20 replays of abandoned signup attempts usually does.

Example: "Signups dropped because 34% of new iOS users hit a permission prompt timeout when we added the ATT screen, and many never came back."

3. Predictive analytics: what will happen next?

Uses historical data to model likely future outcomes. Churn prediction models, lifetime value forecasts, anomaly detection. This layer needs more data and more technical depth. Most small and mid-size teams don't do this at all; large teams run it selectively on high-leverage metrics (revenue, churn, LTV).

Example: "This cohort's day-30 retention is trending 15% below the same-sized cohort from six months ago. Based on historical patterns, we expect 22% churn in the next quarter unless we intervene."

4. Prescriptive analytics: what should we do about it?

Recommends specific actions based on descriptive + diagnostic + predictive inputs. This is where AI product intelligence lives in 2026. Instead of watching replays manually and reasoning through a backlog, tools like Tara AI ingest the behavioral data and propose prioritized actions.

Example: "Your top 3 recommended fixes this month: (1) simplify the 5-step signup on iOS to reduce ATT-prompt abandonment, (2) add retry logic for the failed payment flow on Android, (3) investigate the 3x rage-tap rate spike on the new onboarding screen."

Most teams operate strongly in descriptive and diagnostic, dabble in predictive, and barely touch prescriptive. The gap is where Tara and similar AI tools are meaningfully changing what small teams can do.

How to implement digital analytics (step-by-step)

Step 1: define what you're measuring and why

Before instrumenting a single event, answer three questions for each metric you'll track:

What decision will this metric inform?

Who is the decision-maker?

How often will they look at it?

If you can't answer all three, you're about to collect data that won't be used. I've audited enough analytics implementations to say confidently that at least 40% of tracked events in a typical production app are never read by anyone.
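One way to make the three questions enforceable is to require an answer to each before a metric enters the tracking plan. The sketch below is illustrative only: `MetricSpec`, its field names, and the sample metrics are hypothetical, not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricSpec:
    """A proposed metric plus the answers to the three Step 1 questions."""
    name: str
    decision: str        # What decision will this metric inform?
    owner: str           # Who is the decision-maker?
    review_cadence: str  # How often will they look at it?


def unanswered_questions(spec: MetricSpec) -> list[str]:
    """Return the names of the questions left blank for this metric."""
    answers = {
        "decision": spec.decision,
        "owner": spec.owner,
        "review_cadence": spec.review_cadence,
    }
    return [field for field, value in answers.items() if not value.strip()]


# Hypothetical proposals: one fully specified, one "in case we need it later".
proposed = [
    MetricSpec("signup_conversion_rate", "Keep or roll back the new signup flow", "Growth PM", "weekly"),
    MetricSpec("settings_page_scroll_depth", "", "", ""),
]

for spec in proposed:
    missing = unanswered_questions(spec)
    status = "track" if not missing else f"skip (missing: {', '.join(missing)})"
    print(f"{spec.name}: {status}")
```

A check like this can run in code review or in a tracking-plan linter, so an unanswered question blocks the event before anyone spends engineering time on it.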

Step 2: pick your stack

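A minimal stack for most 2026 product teams:

Marketing analytics: GA4

Product analytics: Mixpanel, Amplitude, or UXCam, for in-app behavior

Session replay: a replay tool for behavioral diagnosis

Data warehouse: cross-source analysis once you outgrow single-tool reports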

Most teams over-invest in the marketing side and under-invest in product + qualitative. If I had to rank by ROI: product analytics > session replay > marketing analytics > data warehouse, in that order, for most 0-to-500-person teams.

Step 3: instrument events systematically

Use a taxonomy. Don't let engineers name events ad hoc. A short, consistent naming convention scales better than "ItemAddedWhenClickedOnTheAddButton".

Track properties with every event: platform (iOS, Android, web), version, user ID, cohort. These properties are what let you segment later.

Set up a tracking plan in a shared doc or tool (Iteratively, Rudderstack, or a simple Notion doc). The plan is the source of truth that everyone refers to before adding new tracking.
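A tracking plan is easier to keep honest when the naming convention and the required properties are checked automatically. The sketch below assumes a lowercase snake_case convention such as `item_added`. That pattern, the property key names, and the sample values are assumptions made for illustration, not a convention this guide or any vendor prescribes.

```python
import re

# Assumed convention for illustration: lowercase snake_case, two to four words,
# for example "item_added" or "checkout_step_completed".
EVENT_NAME_PATTERN = re.compile(r"^[a-z]+(_[a-z]+){1,3}$")

# Property keys mirror the list in the text; the exact key names are hypothetical.
REQUIRED_PROPERTIES = ("platform", "version", "user_id", "cohort")
ALLOWED_PLATFORMS = {"ios", "android", "web"}


def validate_event(name: str, properties: dict) -> list[str]:
    """Return a list of problems; an empty list means the event passes."""
    problems = []
    if not EVENT_NAME_PATTERN.fullmatch(name):
        problems.append(f"name '{name}' does not match the naming convention")
    for key in REQUIRED_PROPERTIES:
        if key not in properties:
            problems.append(f"missing property '{key}'")
    platform = properties.get("platform")
    if platform is not None and platform not in ALLOWED_PLATFORMS:
        problems.append(f"unknown platform '{platform}'")
    return problems


good = validate_event(
    "item_added",
    {"platform": "ios", "version": "4.2.0", "user_id": "u_123", "cohort": "2026-03"},
)
bad = validate_event("ItemAddedWhenClickedOnTheAddButton", {"platform": "web"})
print(good)
print(bad)
```

Running a validator like this in CI, or at the point where events are sent, catches ad hoc names before they reach dashboards.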

Step 4: build your first dashboards

Start with three dashboards:

Acquisition: traffic sources, signup rate, cost per acquisition

Activation and retention: day-1, day-7, day-30 retention, time to first meaningful action

Revenue: conversion rate, revenue per user, churn

Each dashboard should have at most 6 to 8 metrics. More than that and people stop reading them. Review cadence: daily glance, weekly deep-dive, monthly cohort review.
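The day-1, day-7, and day-30 retention figures on the second dashboard can be defined in more than one way. The sketch below uses the strict "active exactly N days after signup" definition. Some tools instead count "active on day N or later", so treat this as one reasonable choice. The function name and sample data are hypothetical.

```python
from datetime import date


def day_n_retention(signups: dict[str, date], activity: list[tuple[str, date]], n: int) -> float:
    """Share of signed-up users who were active exactly n days after signup."""
    if not signups:
        return 0.0
    retained = {
        user_id
        for user_id, active_on in activity
        if user_id in signups and (active_on - signups[user_id]).days == n
    }
    return len(retained) / len(signups)


# Hypothetical cohort: four users who all signed up on 1 March 2026.
signups = {user: date(2026, 3, 1) for user in ("u1", "u2", "u3", "u4")}
activity = [
    ("u1", date(2026, 3, 2)),
    ("u2", date(2026, 3, 2)),
    ("u1", date(2026, 3, 8)),
    ("u3", date(2026, 3, 31)),
]

for n in (1, 7, 30):
    print(f"day-{n} retention: {day_n_retention(signups, activity, n):.0%}")
```

In practice the cohort should only include users who signed up at least N days ago. Otherwise recent signups drag the rate down simply because their day N has not happened yet.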

Step 5: layer in qualitative signals

Numbers alone don't explain user behavior. Set up session replay on your highest-intent pages (checkout, signup, the core feature). Watch at least 10 sessions a week. Run rage-click detection on key flows. Integrate surveys at high-drop-off moments.

This is the step most teams skip, and it's where the biggest insights come from. I've watched teams run A/B tests for weeks to get statistical significance on changes that 20 minutes of replay-watching would have told them not to bother with.
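Rage-click detection is usually built into session replay tools, and each vendor has its own heuristic. As a rough sketch of the idea, the code below flags a session when several taps land close together in a short time window. The thresholds (three taps, one second, 30 pixels) and the `Tap` type are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Tap:
    t: float  # seconds since session start
    x: float  # screen coordinates in pixels
    y: float


def has_rage_click(taps: list[Tap], min_taps: int = 3,
                   window_s: float = 1.0, radius_px: float = 30.0) -> bool:
    """True if at least min_taps taps fall within window_s seconds and radius_px of a first tap."""
    ordered = sorted(taps, key=lambda tap: tap.t)
    for i, first in enumerate(ordered):
        burst = 1  # count the first tap itself
        for later in ordered[i + 1:]:
            if later.t - first.t > window_s:
                break  # taps are time-ordered, so nothing later can qualify
            if hypot(later.x - first.x, later.y - first.y) <= radius_px:
                burst += 1
        if burst >= min_taps:
            return True
    return False


frustrated = [Tap(10.0, 100, 200), Tap(10.3, 102, 201), Tap(10.6, 99, 198)]
calm = [Tap(1.0, 100, 200), Tap(3.0, 100, 200), Tap(5.0, 100, 200)]
print(has_rage_click(frustrated), has_rage_click(calm))
```

Sessions flagged this way make a good shortlist for the weekly replay review, since they point at moments where the interface did not respond the way the user expected.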

Common pitfalls in digital analytics

Collecting data nobody uses

If nobody has opened the dashboard in 30 days, remove the metric. Every event costs engineering time and vendor money. Analytics bloat is real and expensive.
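The 30-day rule is simple enough to automate if your BI tool exposes last-viewed timestamps. A minimal sketch, assuming you can export a mapping of dashboard name to last-opened date (the names and dates here are made up):

```python
from datetime import date, timedelta


def stale_dashboards(last_opened: dict[str, date], today: date, max_age_days: int = 30) -> list[str]:
    """Names of dashboards last opened more than max_age_days before today."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(name for name, opened in last_opened.items() if opened < cutoff)


last_opened = {
    "acquisition": date(2026, 4, 28),
    "legacy_funnel": date(2026, 2, 10),
    "revenue": date(2026, 3, 31),
}
print(stale_dashboards(last_opened, today=date(2026, 4, 30)))
```

The output is a candidate list for review, not an automatic delete list. A quarterly report may be opened rarely and still inform a real decision.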

Confusing correlation with causation

A metric going up at the same time as another doesn't mean one caused the other. Without an experiment or a strong causal mechanism, correlations are hypotheses, not answers. I've seen teams attribute revenue lifts to features that happened to ship the same week as a seasonal bump, which led to building more features on that pattern that predictably failed.

Over-indexing on vanity metrics

Pageviews, followers, signups. These are easy to move but don't obviously map to revenue or retention. A 50% lift in signups that comes entirely from low-quality traffic is worse than the same lift from qualified traffic because it drags down your ratios across the funnel. Always pair growth metrics with quality metrics (activation rate, retention).
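A quick calculation shows how a signup lift from low-quality traffic dilutes funnel ratios. All numbers below are invented for illustration: a baseline of 10,000 signups at 40% activation, plus 5,000 extra signups that activate at either 5% (low-quality) or 40% (qualified).

```python
def blended_activation_rate(segments: list[tuple[int, float]]) -> float:
    """Overall activation rate across (signups, activation_rate) segments."""
    total_signups = sum(signups for signups, _ in segments)
    total_activated = sum(signups * rate for signups, rate in segments)
    return total_activated / total_signups


baseline = (10_000, 0.40)

low_quality_lift = blended_activation_rate([baseline, (5_000, 0.05)])
qualified_lift = blended_activation_rate([baseline, (5_000, 0.40)])

print(f"low-quality lift: {low_quality_lift:.1%} activation")
print(f"qualified lift:   {qualified_lift:.1%} activation")
```

With these made-up inputs, the same 50% signup lift leaves activation at 40% in the qualified case but pulls it down to roughly 28% in the low-quality case. The signup chart alone looks identical in both scenarios, which is why the quality metric has to sit next to it.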

Under-investing in data hygiene

Events firing twice, events misfiring after a release, cohorts defined inconsistently across dashboards. Data problems compound silently. Set up alerts for unexpected event-rate changes and do a quarterly audit of your top 20 events to confirm they still match their definitions.
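An alert for unexpected event-rate changes does not need to be sophisticated to be useful. The sketch below compares the latest daily count for each event against the mean of the preceding days and flags anything that moved by more than 50%. The threshold, event names, and counts are assumptions, and a production alert would likely account for weekly seasonality as well.

```python
from statistics import mean


def event_rate_alerts(daily_counts: dict[str, list[int]], threshold: float = 0.5) -> dict[str, float]:
    """Map each flagged event to its relative change versus the trailing mean."""
    alerts = {}
    for event, counts in daily_counts.items():
        if len(counts) < 2:
            continue  # not enough history to compare against
        *history, latest = counts
        baseline = mean(history)
        if baseline == 0:
            continue  # avoid dividing by zero for brand-new events
        change = (latest - baseline) / baseline
        if abs(change) > threshold:
            alerts[event] = change
    return alerts


# Hypothetical daily counts, oldest first; the last value is today.
daily_counts = {
    "signup_completed": [100, 100, 100, 100, 205],  # roughly doubled: firing twice?
    "item_added": [50, 52, 48, 50, 51],             # normal variation
    "checkout_started": [40, 40, 40, 40, 0],        # dropped to zero: broken after release?
}

for event, change in event_rate_alerts(daily_counts).items():
    print(f"{event}: {change:+.0%} vs trailing mean")
```

A sudden doubling is a common signature of an event firing twice, and a drop to zero usually means the instrumentation broke in a release. Both are cheap to catch this way.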

Treating GA4 as the only tool

GA4 is good for marketing analytics and basic web conversion tracking. It's not built for product analytics (the querying is limited, cross-session user identity is brittle, event costs scale non-linearly). If you rely on GA4 for product questions, you'll eventually hit a wall. Have a product analytics tool in the stack from year one.

The role of digital analytics in modern business

Every meaningful product and marketing decision at a serious company is informed by digital analytics. That wasn't true a decade ago. It's close to universally true now.

What's changed in the last two years: AI product intelligence layers have made prescriptive analytics accessible to small teams. I can ask Tara "what are the three highest-impact UX issues in the last 7 days?" and get a ranked list backed by session evidence, without needing a dedicated analyst to run the query. That kind of workflow used to require a data science team. Now a solo PM can do it.

What hasn't changed: the analytics are only as good as the decisions they drive. A team that looks at dashboards daily but ignores them when prioritizing their roadmap is worse off than a team without analytics, because they're spending the cost without getting the value.

Building a data-driven culture

Culture is where most analytics programs fail. You can have the best tools on the planet and still have a team that makes decisions by gut feel. Three things that reliably shift culture in my experience:

Force every feature proposal to include a measurable success criterion. If nobody can name what "success" looks like as a number, the feature shouldn't ship. This single rule has changed more team cultures than any tool.

Make replays part of your sprint ritual. Pick one session replay per sprint retro, watch it as a team, discuss what it reveals. This creates shared context about what users actually experience and builds the muscle of pairing numbers with behavior.

Publish the metric every week. Whatever your north star is, publish it weekly in a format everyone sees (email, Slack, whiteboard). Visibility creates accountability.

Future trends in digital analytics

Three shifts worth watching in 2026 and 2027:

AI-native analytics workflows. Tools like Tara that let you ask questions in natural language instead of building funnels are changing how small teams work. The skill of "writing complex SQL to segment users" is being commoditized. The skills that matter more: asking good questions, interpreting ambiguous results, designing experiments worth running.

Privacy-first measurement. Apple's ATT, Google's Privacy Sandbox, and GDPR enforcement have made cross-session, cross-device tracking harder. Teams are moving toward first-party event data and server-side tagging. If your analytics stack still assumes cookies and IDFAs work, you'll hit attribution problems within a year.

Behavioral + quantitative fusion. The old divide between "quant tools" (GA4, Mixpanel) and "qual tools" (session replay, surveys) is dissolving. Modern platforms like UXCam bundle both, with AI that correlates the two automatically. I expect this consolidation to continue, which means fewer tools but deeper integration per tool.

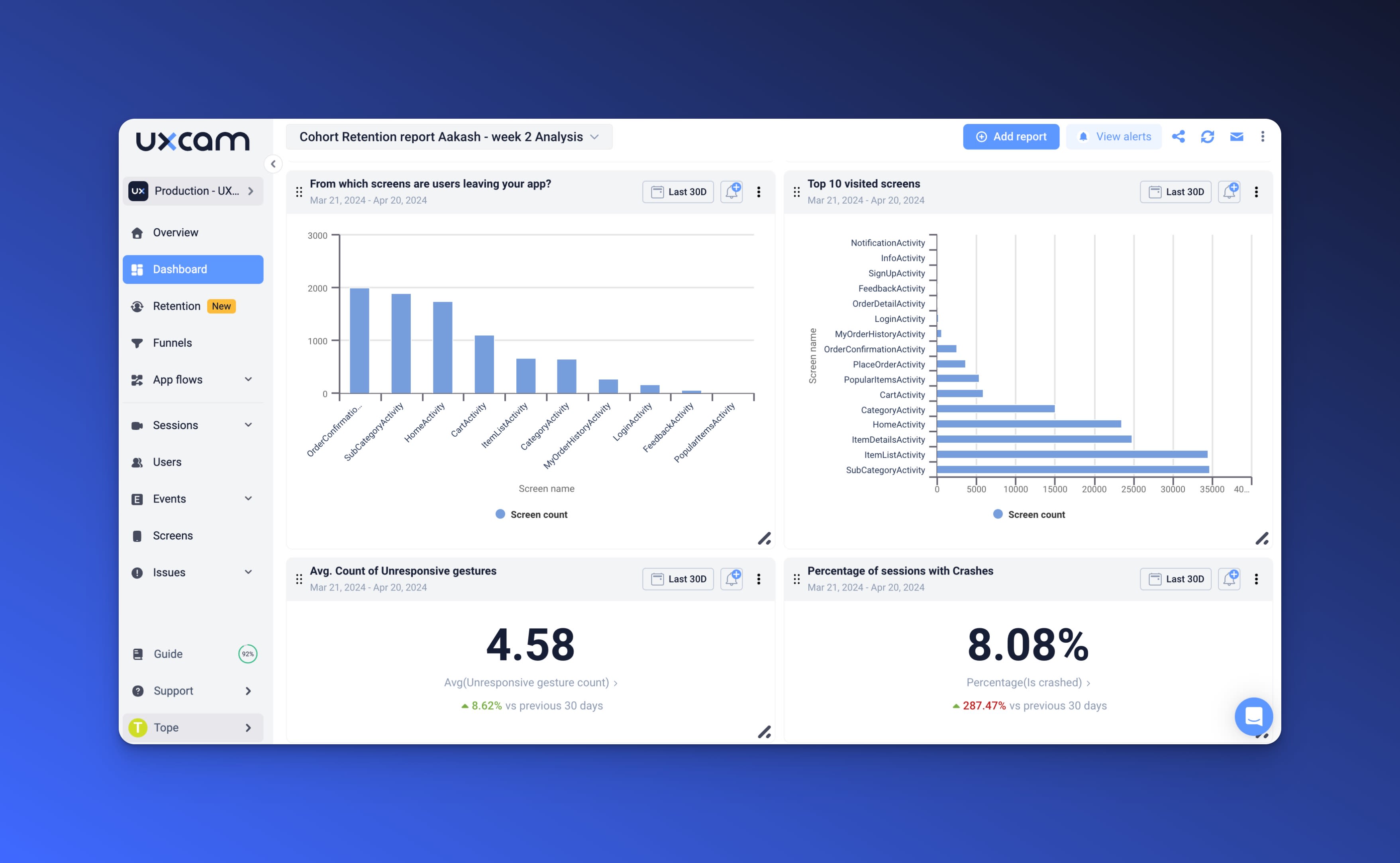

Improve your digital analytics with UXCam

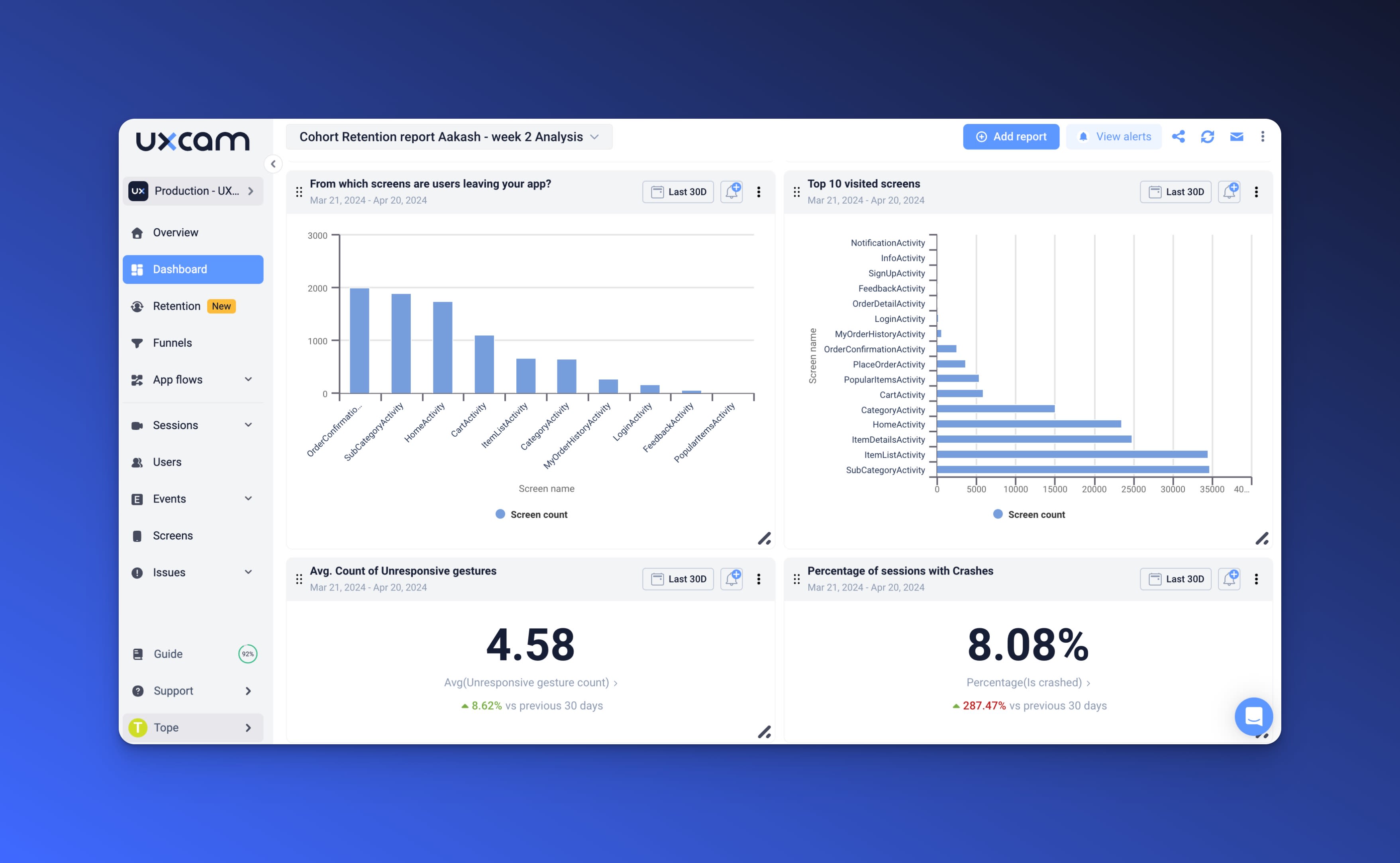

UXCam is a product intelligence and product analytics platform that automatically captures every user interaction on mobile apps and websites, with no manual event tagging. It combines qualitative analytics (session replay, heatmaps), quantitative analytics (funnels, retention, segmentation), and Tara, an AI analyst that processes sessions to deliver evidence-based insights and recommend actions.

For product teams that need all four layers of digital analytics (descriptive, diagnostic, predictive, prescriptive) in a single platform, UXCam collapses what used to require three separate tools into one. Every metric is backed by real user sessions. See a drop-off? Click to watch the sessions where users left.

Mobile-first, web-ready, installed in 37,000+ products. Request a demo to see it on your product.

Frequently asked questions

What is digital analytics?

Digital analytics is the collection, measurement, and analysis of data from digital channels (websites, mobile apps, marketing campaigns, connected devices) to improve user experience, marketing performance, and business outcomes. It spans web analytics, product analytics, marketing analytics, and customer experience analytics. The goal is to replace guesswork with measured, informed decisions about what to build, change, and prioritize.

What's the difference between digital analytics and product analytics?

Product analytics is a subset of digital analytics. Product analytics focuses on what users do inside a specific product (signups, feature use, retention, churn), typically using tools like Mixpanel, Amplitude, or UXCam. Digital analytics is the broader umbrella that includes product analytics plus web analytics, marketing analytics, and customer experience analytics.

What are the four types of digital analytics?

Descriptive (what happened), diagnostic (why it happened), predictive (what will happen next), and prescriptive (what to do about it). Most teams do descriptive and diagnostic well, dabble in predictive, and barely touch prescriptive. AI-powered product intelligence tools like Tara are changing how accessible the prescriptive layer is for small teams.

What tools do I need for digital analytics?

A minimal 2026 stack: GA4 for marketing analytics, a product analytics tool (Mixpanel, Amplitude, or UXCam), a session replay tool for behavioral diagnosis, and a data warehouse for cross-source analysis when you outgrow single-tool reports. Most teams over-invest in the marketing side and under-invest in product and qualitative tools.

How do I know if my digital analytics are working?

Three signals. First, your team can answer important product and growth questions from the data in under a day (instead of "let me ask the analyst"). Second, every feature proposal includes a measurable success criterion that gets reviewed after launch. Third, you've removed at least as many metrics from dashboards in the last quarter as you added, because focus matters more than collection.

What's the biggest mistake teams make with digital analytics?

Collecting data nobody uses. Every event tracked costs engineering time and vendor money. If nobody has opened a dashboard in 30 days, delete the metric. If you can't name a decision that a metric informs, don't track it. Most analytics bloat comes from "let's track this in case we need it later," and "later" rarely comes.

AUTHOR

Silvanus Alt, PhD

Founder & CEO | UXCam

Silvanus Alt, PhD, is the Co-Founder & CEO of UXCam and an expert in AI-powered product intelligence. Trained at the Max Planck Institute for the Physics of Complex Systems, he built Tara, the AI Product Analyst that not only analyzes user behavior but recommends clear next steps for better products.

